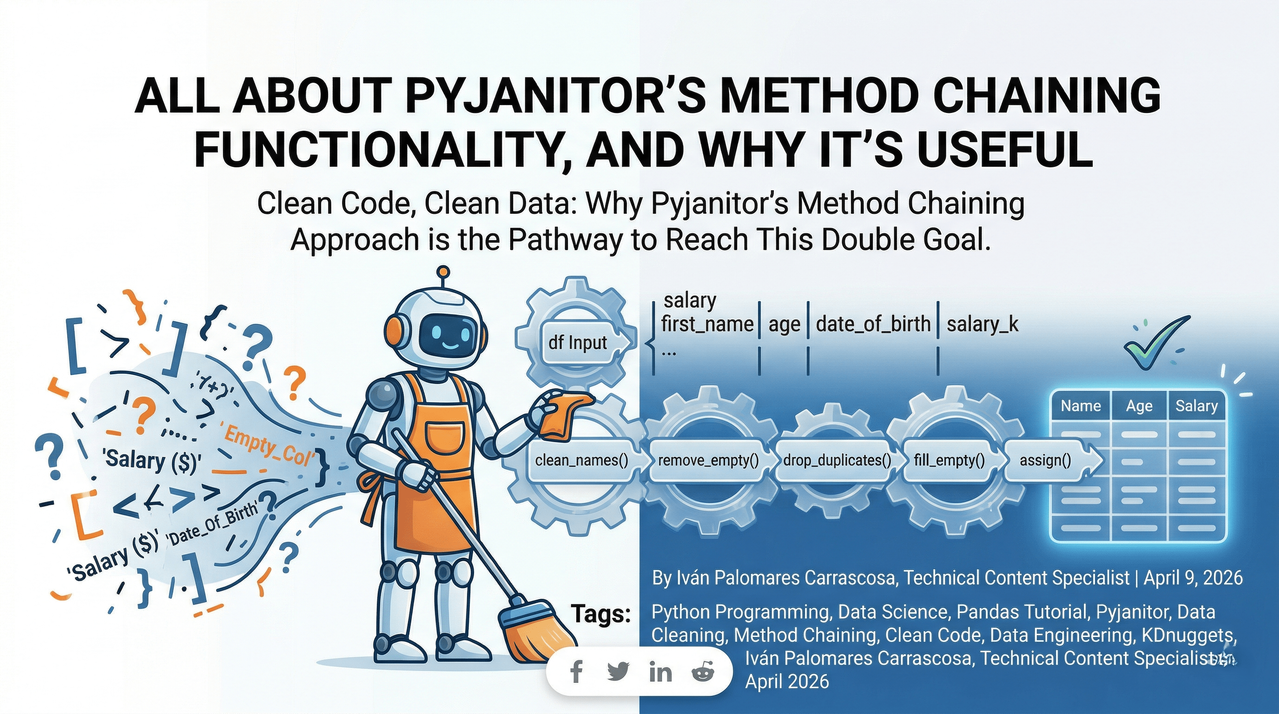

Clean Code, Clean Data: Mastering Pyjanitor’s Method Chaining for Efficient Data Science

In the rapidly evolving landscape of data engineering and data science, there is an unspoken truth that every practitioner eventually faces: the majority of your time isn't spent building sophisticated machine learning models or designing neural networks. Instead, it is spent in the trenches of data preprocessing. We often refer to this as the "80/20 rule" of data science—80% of the work is cleaning data, and only 20% is actual analysis.

Working intensively with data in Python teaches a vital lesson: data cleaning usually doesn't feel like high-level science; it feels like acting as a digital janitor. You load a dataset, discover messy column names, encounter inconsistent missing values, and frequently end up with a "variable soup"—a dozen temporary dataframes like df1, df_filtered, and df_final_v2—only the last of which is actually useful.

This is where Pyjanitor enters the frame. By extending the capabilities of the ubiquitous Pandas library, Pyjanitor introduces a "method chaining" approach that transforms arduous data cleaning into elegant, readable, and highly maintainable pipelines.

The Philosophy of Method Chaining

To understand why Pyjanitor is such a game-changer, we must first understand the design pattern it champions: Method Chaining.

Method chaining (also known as a Fluent Interface) is a programming pattern where multiple methods are called sequentially on the same object in a single statement. In traditional Python scripting, we often perform operations step-by-step, reassigning the variable at every turn. While functional, this approach is prone to "state" bugs and makes the code harder to read as the number of operations grows.

A Simple Conceptual Example

Consider a basic string manipulation task. In standard procedural Python, you might write:

With method chaining, the logic becomes a single, unified flow:

The logic flows from left to right. It mimics human thought: "Take the text, then strip it, then lower it, then replace the word." When we apply this logic to DataFrames, the impact on code quality is exponential.

The Limitations of Native Pandas

Pandas is an incredibly powerful tool, but its syntax for data cleaning can sometimes be fragmented. For instance, if you want to drop a column and then rename another, you might have to alternate between methods that return a new DataFrame and those that operate "in-place."

A standard data cleaning workflow in Pandas often looks like this:

While this works, it is visually cluttered. As projects grow, these individual assignments make it difficult to track the "lineage" of the data. Furthermore, Pandas lacks specific, verb-based methods for common "dirty work" tasks, such as cleaning column names that contain special characters or extra whitespace.

Introducing Pyjanitor: The Data Science Swiss Army Knife

Pyjanitor is an open-source library that provides a suite of data-cleaning methods that "plug into" Pandas. It was inspired by the janitor package in R and was designed specifically to facilitate method chaining.

By using Pyjanitor, you can encapsulate your entire cleaning logic within a single set of parentheses. This creates a clear "pipeline" that is self-documenting.

Key Pyjanitor Advantages:

- Expressive API: Methods like

clean_names(),remove_empty(), andfill_empty()describe exactly what they do. - Immutability-Friendly: Every method returns a new DataFrame, making it easy to chain operations without worrying about side effects on the original object.

- Cloud & Notebook Ready: It works seamlessly in environments like Google Colab, Jupyter, and professional production pipelines.

Practical Application: From Messy to Masterpiece

Let's look at a technical implementation. We will start with a synthetic dataset that incorporates common real-world issues: trailing spaces in column names, missing values, duplicate entries, and unnecessary columns.

Step 1: The Raw Data

Before we clean, we must acknowledge the mess.

| First Name | _Last_Name | Age | Date_Of_Birth | Salary ($) | Empty_Col |

| Alice | Smith | 25.0 | 1998-01-01 | 50000 | NaN |

| Bob | Jones | NaN | 1995-05-05 | 60000 | NaN |

| Charlie | Brown | 30.0 | 1993-08-08 | 70000 | NaN |

| Alice | Smith | 25.0 | 1998-01-01 | 50000 | NaN |

Step 2: The Pyjanitor Pipeline

To clean this data efficiently, we use a single chain of operations. Note how the use of parentheses allows us to put each operation on a new line, drastically improving readability.

Deep Dive into the Cleaning Steps

1. The clean_names() Magic

This is arguably the most used function in the library. It takes messy columns like " First Name " or "Salary ($)" and converts them into a standardized format (usually lowercase with underscores). This prevents the common "KeyError" that occurs when you forget a space at the end of a column name.

2. Handling Missing Data with fill_empty()

Unlike the standard fillna(), fill_empty() in Pyjanitor is designed to be more explicit within a chain. It allows you to target specific columns and provide values (like a median or a constant) without breaking the flow of the code.

3. Pipeline Readability and Debugging

One might wonder: "If everything is in one big chain, how do I debug it?" Because each step is a discrete line, you can simply comment out a line to see how the data looks without that specific transformation. This modularity is a significant advantage over bulk procedural code.

Why This Matters for Modern SEO and Technical Documentation

From a technical communication perspective, using Pyjanitor and method chaining is about more than just code execution—it’s about communication.

When we write reports or articles for other developers, our goal is to reduce the "cognitive load" of the reader. A method chain acts as a narrative for the data’s journey. Anyone—from a junior developer to a senior architect—can look at a Pyjanitor chain and immediately understand the business logic applied to the dataset.

Furthermore, as search engines increasingly prioritize high-quality, structured technical content, documenting your data pipelines using readable patterns like this ensures that your work remains accessible and useful to the wider community.

Conclusion: A Pathway to Cleanliness

The transition from "Digital Janitor" to "Data Scientist" is marked by the tools we choose to manage complexity. Pyjanitor’s method chaining functionality is not just a syntactic convenience; it is a philosophy of Clean Code applied to Clean Data.

By adopting this approach, you:

- Reduce Errors: No more intermediate variables.

- Increase Speed: Rapidly iterate on data cleaning logic.

- Improve Collaboration: Write code that documents itself.

Whether you are working on a small local project or a massive FinTech data pipeline, incorporating Pyjanitor into your Python toolkit will streamline your workflow and allow you to spend less time cleaning and more time uncovering insights.

Comments

No comments yet. Be the first to share your thoughts!

Leave a Comment