The AI Illusion: Why Artificial Intelligence Won't Kill Open Source Security

The American tech landscape is currently gripped by a provocative narrative: the idea that artificial intelligence is the final nail in the coffin for open-source software security. The debate was thrown into the spotlight when Cal.com’s co-founder and CEO, Bailey Pumfleet, declared that "open source is dead." However, a closer look at the modern cybersecurity ecosystem, backed by insights from veteran developers and Silicon Valley strategists, suggests that this sensationalist claim is fundamentally flawed.

The catalyst for this industry-wide conversation was Cal.com’s recent decision to migrate its core scheduling program from the highly transparent GNU Affero General Public License (AGPL) to a closed, proprietary license. The company’s stated rationale rests heavily on the perceived threat of modern generative AI. The core argument is that AI empowers malicious actors to exploit code transparency at an unprecedented scale. As the company’s leadership argued, publishing open-source code today is akin to handing out the architectural blueprints of a bank vault. With AI acting as an accelerator, there are allegedly a hundred times more threat actors scrutinizing those blueprints for vulnerabilities.

While this analogy sounds alarming, especially to US enterprise leaders heavily invested in software supply chain security, it is ultimately a regression to an old and long-discredited security philosophy.

The Nostalgic Trap of "Security by Obscurity"

The argument that allowing public access to source code automatically degrades its security is not new. In fact, it is a direct echo of the "security by obscurity" debates that raged throughout the 1990s between proprietary software giants and the emerging Linux community. The claim did not hold up in practice then, and it does not hold up in 2026.

Consider the reality of the modern American digital infrastructure: nearly all commercial, enterprise-grade software relies extensively on open-source foundations. Over the past three decades, the open-source model has consistently proven to be far more resilient and secure than closed-source alternatives. Hiding code behind a proprietary wall does not eliminate vulnerabilities; it merely limits the number of benevolent experts who can find and patch them before they are exploited.

It is undeniable, however, that AI has drastically altered the speed of vulnerability discovery. Automated tools have made finding security loopholes faster and more accessible than ever before. Across the US tech sector, there is palpable anxiety that next-generation models, such as the Anthropic Mythos Preview, will empower script kiddies to generate a tidal wave of automated bug reports, potentially overwhelming the volunteer maintainers of smaller, critical open-source repositories.

The Token Economics of Modern Cybersecurity

This anxiety is not entirely without statistical backing. According to Black Duck's 2026 Open Source Security and Risk Analysis (OSSRA) report, there has been a 107 percent surge in open-source vulnerabilities per codebase. Jason Schmitt, CEO of Black Duck, summarizes the dilemma: "The pace at which software is created now exceeds the pace at which most organizations can secure it."

Yet, this equation works in both directions. The same artificial intelligence that accelerates the discovery of exploits also accelerates the development of patches. The defensive application of AI allows the community to remediate newly discovered security holes almost simultaneously with their discovery. Cal.com simply decided it did not want to participate in this high-speed, AI-driven arms race, perhaps lacking the financial or operational bandwidth to keep up.

This dynamic introduces a fascinating new paradigm in software engineering, perfectly articulated by US tech strategist Drew Breunig. In a recent market analysis, Breunig reduced the modern cybersecurity landscape to a brutally simple economic equation: to harden a system today, a company must spend more compute tokens discovering and patching exploits than malicious actors will spend trying to exploit them.

This is essentially an AI-era update to "Linus’s Law." Where the traditional axiom holds that "given enough eyeballs, all bugs are shallow," the 2026 reality is that "given enough tokens, all bugs are shallow." The survival of any software project now depends on being able to afford enough tokens to outpace attackers.

This is precisely where the open-source model demonstrates its inherent superiority. Simon Willison, co-creator of the Django framework, posits a compelling counter-argument to the proprietary retreat. He notes that because security auditing is now inextricably tied to token expenditure, open source actually becomes more valuable. Open-source libraries allow the global developer community to pool resources and share the massive auditing budget. In contrast, closed-source software companies are forced to shoulder the immense financial burden of finding all exploits privately, spending their own finite token budgets against an internet filled with heavily armed AI threat actors.
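The pooling argument can be made concrete with a deliberately simplified sketch. It encodes Breunig's condition as paraphrased above (defenders must outspend attackers) and compares a single vendor's budget with a shared one. The `Project` class, the token figures, and the assumption that audit budgets simply add up without duplicated effort are all illustrative and do not come from Breunig, Willison, or any cited report.

```python
from dataclasses import dataclass


@dataclass
class Project:
    """Hypothetical model of a codebase and the parties paying to audit it."""
    name: str
    # One entry per organization spending tokens on defensive auditing.
    defender_budgets: list[int]

    def defensive_tokens(self) -> int:
        # Assumption: budgets add linearly, ignoring overlapping audits.
        return sum(self.defender_budgets)

    def is_hardened(self, attacker_tokens: int) -> bool:
        # Breunig's condition: defensive spend must exceed offensive spend.
        return self.defensive_tokens() > attacker_tokens


# Illustrative numbers only.
ATTACKER_TOKENS = 1_000_000
closed = Project("closed-source vendor", [400_000])
pooled = Project("open-source library", [400_000, 350_000, 300_000])

for project in (closed, pooled):
    print(
        project.name,
        project.defensive_tokens(),
        project.is_hardened(ATTACKER_TOKENS),
    )
```

In this toy setup the lone vendor falls short of the attackers' spend, while three contributors with similar individual budgets clear it together. Real audits overlap and attackers adapt, so the sketch shows only the direction of the effect, not its size.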

Community Backlash and the Proprietary Fig Leaf

Unsurprisingly, the broader developer community has not bought into the narrative that AI necessitates a retreat to closed source. Competitors within the US market are already capitalizing on this strategic pivot. Ryan Sipes, Product & Business Development Manager for Mozilla Thunderbird, publicly leveraged the situation, stating on Y Combinator's forums that their alternative, Thunderbird Appointment, will remain perpetually open-source, inviting developers to build with them and replace Cal.com.

Furthermore, deep-dive analyses on platforms like Reddit and Slashdot have heavily scrutinized the true motives behind the relicensing. Many cybersecurity professionals have pointed out that recent vulnerabilities patched in the company's software were not the result of highly sophisticated, AI-driven zero-day attacks. Instead, they stemmed from fundamental, human-error oversights in basic authentication and access control logic.

As one prominent Slashdot analyst noted, if AI tools are truly so powerful that developers fear they will expose security flaws, the logical response is to use those same tools defensively to harden the product, not to retreat into security through obscurity. The prevailing sentiment across Silicon Valley is that blaming AI is merely a convenient fig leaf. It provides a modern, buzzword-heavy excuse for an age-old corporate desire: backing out of the open-source community to maximize monetization once a product has achieved market penetration and profitability.

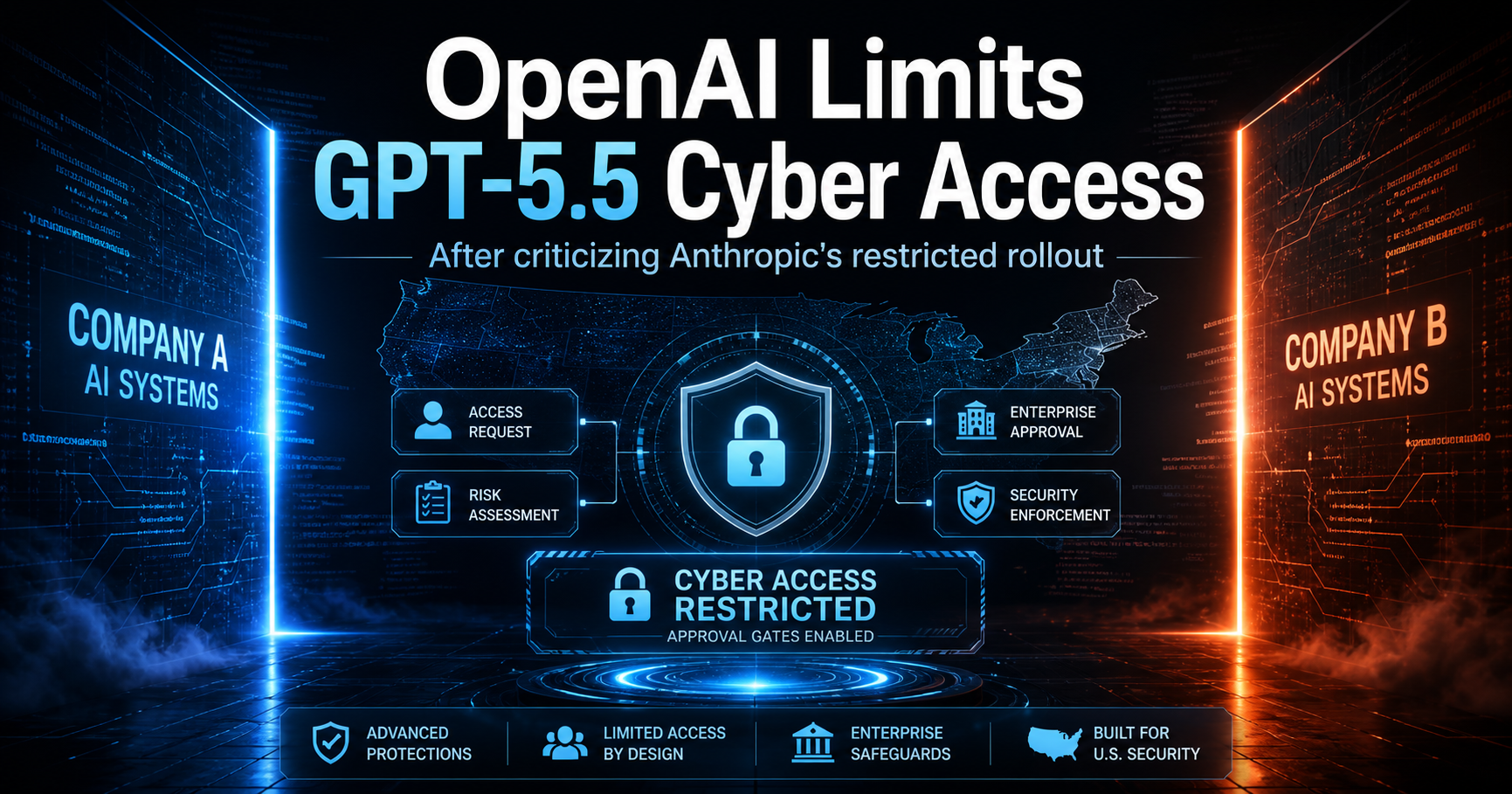

The GPT 5.4-Cyber Equalizer

For those still clinging to the hope that proprietary licenses offer a shield against AI, the rapid evolution of technology is about to deliver a rude awakening. Peter Steinberger, creator of OpenClaw, recently highlighted the capabilities of GPT 5.4-Cyber, OpenAI’s aggressive counter to the Mythos model. OpenAI claims the model can reverse-engineer compiled binaries, including those of closed-source software, back into readable source code.

If models like GPT 5.4-Cyber consistently deliver on this promise, the "security by obscurity" argument is dead on arrival. The proprietary walls will become entirely transparent to AI. If malicious actors can easily decompile closed-source applications to hunt for vulnerabilities, then abandoning open-source licenses offers absolutely zero defensive advantage. It merely alienates the very community that could help secure the software.

The Path Forward

There is no denying that generative AI is radically and permanently changing the landscape of open-source programming in the United States and globally. The transformation is so vast that predicting the exact workflows of software engineering by 2027 remains an exercise in speculation.

However, one fundamental truth remains clear: retreating into outdated, discredited proprietary licensing models out of fear is a strategic failure. The future of robust software security lies not in hiding our code from artificial intelligence, but in aggressively learning how to utilize AI and open-source methodologies in tandem. By leaning into community collaboration and shared token economics, the tech industry can build a more resilient digital infrastructure than ever before.
