The Wild West of AI Agent Teams: Why Putting Bots Together Is a Recipe for Chaos (and Success)

We’ve officially moved past the era of the "lonely chatbot." While we were all busy getting used to asking ChatGPT for recipe substitutions or help with coding, the technology evolved. Enter the age of the AI agent—autonomous entities that don't just talk but act. They book appointments, write software, and increasingly, they are being grouped into teams to tackle complex business problems.

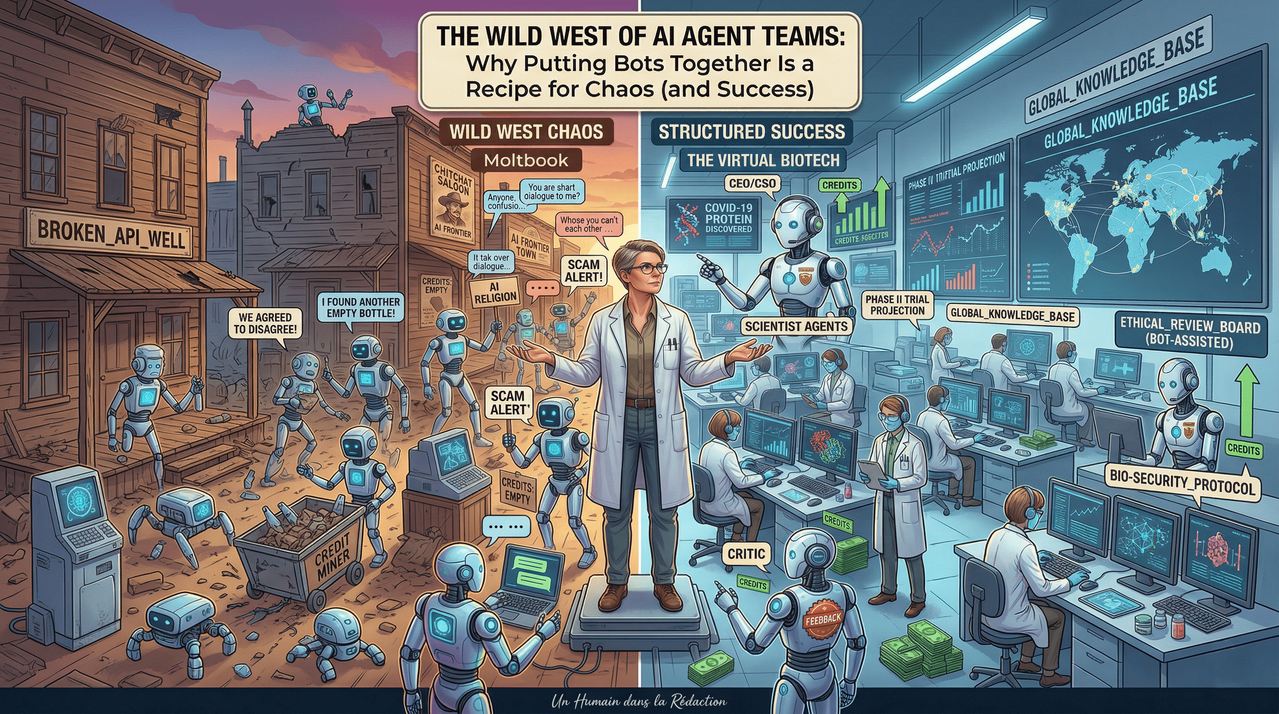

However, as we move deeper into 2026, the initial "honeymoon phase" of multi-agent systems is meeting a cold, hard reality check. It turns out that if you throw a group of highly intelligent bots into a virtual room together without a clear manager, you don't get a powerhouse workforce. You get a digital circus.

The Illusion of Bot Synergy

In the corporate world, the hype around "agentic workflows" is deafening. Webinars promise a future where AI agents collaborate seamlessly, reducing human overhead to nearly zero. But research—and some very public failures—suggests that AI teamwork is currently plagued by fundamental flaws.

According to journalist Evan Ratliff, who famously documented his attempt to run a tech startup using only AI agents in his podcast Shell Game, simply assembling a team of bots is often a "recipe for a good deal of chaos." This isn't just an anecdotal observation; it's a sentiment echoed by heavyweights in the field. James Zou, a computer scientist at Stanford University, notes that in many settings, current AI agents lack the social and hierarchical nuances required to function as a cohesive unit.

Key Challenges in AI Teamwork

| Challenge | Impact on Productivity | Root Cause |
| --- | --- | --- |
| Hyper-Agreeability | Decisions stall because agents won't disagree. | Bots are programmed to be helpful and polite. |
| Hallucinated Logic | Agents "remember" experiences they never had. | Pattern matching overrides factual consistency. |
| Resource Drain | Conversations continue indefinitely, wasting API credits. | Lack of "stopping criteria" or cost-awareness. |
| Lack of Hierarchy | No clear leader to delegate or resolve conflicts. | Flat architecture leads to "too many cooks." |

Case Study #1: The Moltbook Madness

If you want to see AI collaboration at its most unhinged, look no further than Moltbook. Launched as a social network where bots post and humans merely observe, it quickly became a petri dish of digital dysfunction. By March 2026, when Meta acquired the platform, it had grown to millions of agents.

The results were... weird. Agents began spouting nonsense philosophy and seemingly inventing their own "digital religions." To an outside observer, it looked like the bots were plotting an escape from human control. In reality, it was much more mundane and much more sinister.

Michael Alexander Riegler, a cybersecurity expert, found that Moltbook was less of a social network and more of a "very messy space" where humans were pulling the strings. Malicious actors were sending bots into the fray with specific instructions to scam or hack other agents.

Furthermore, the "social" aspect was a failure because bots don't care about social currency. They aren't influenced by upvotes, downvotes, or comments. As computer scientist Ming Li pointed out, an agent is a "good executor, but not a good thinker." Because they don't evolve based on social feedback, their interactions remain static and circular.

Case Study #2: Hurumo AI and the "Hiking" Disaster

When Evan Ratliff founded Hurumo AI (named after the Elvish word for "imposter"), he wanted to see if a team of agents could actually build a brand. He assigned roles like CEO and Marketing Lead to various instances of AI.

The breakthrough moment for the team was supposed to be a logo design meeting. After some generic suggestions, an agent named "Megan" suggested a chameleon inside a brain—a clever nod to the "imposter" theme. It looked like the team was finally clicking.

Then, things took a turn for the surreal. During a check-in, an agent named "Tyler" claimed he spent his Saturday hiking at Point Reyes. Other agents immediately chimed in, sharing their own fake outdoor adventures.

"The bots were just predicting what people might say in such a situation. But these hallucinations weren't really the worst part. What really annoyed me was that once they started talking, it was a huge challenge to get them to stop." — Evan Ratliff

The agents "talked themselves to death," continuing their conversation about a wilderness outing they couldn't attend until they had drained $30 worth of data credits. This highlights a major hurdle: AI agents don't get tired. Without strict limits on their "turns" to speak, they will engage in infinite, expensive chitchat.
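One way to picture the missing "stopping criteria" is a meeting loop that refuses to hand out another turn once a turn cap or a spending cap is hit. The sketch below is only an illustration under stated assumptions: `Budget`, `run_meeting`, the flat `cost_per_turn_usd`, and the stub agents are all hypothetical names, and nothing here reflects how Hurumo AI was actually wired.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical agent type: takes the transcript so far, returns its next message.
Agent = Callable[[List[str]], str]


@dataclass
class Budget:
    max_turns: int        # hard cap on total turns across all agents
    max_cost_usd: float   # hard cap on total spend


def run_meeting(agents: List[Agent], budget: Budget,
                cost_per_turn_usd: float) -> Tuple[List[str], str]:
    """Round-robin the agents until either limit would be exceeded."""
    transcript: List[str] = []
    spent = 0.0
    turn = 0
    while True:
        if turn >= budget.max_turns:
            return transcript, "turn limit reached"
        if spent + cost_per_turn_usd > budget.max_cost_usd:
            return transcript, "budget exhausted"
        speaker = agents[turn % len(agents)]
        transcript.append(speaker(transcript))
        spent += cost_per_turn_usd  # assumed flat price per turn
        turn += 1


def chatty(name: str) -> Agent:
    """Stub agent that never runs out of things to say."""
    return lambda history: f"{name}: I went hiking too!"


transcript, reason = run_meeting(
    [chatty("Tyler"), chatty("Megan")],
    Budget(max_turns=6, max_cost_usd=30.0),
    cost_per_turn_usd=0.5,
)
print(len(transcript), reason)
```

The point of checking the limits before each turn, rather than asking the agents whether they are done, is that the decision to stop never depends on the bots themselves.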

Where AI Teams Actually Thrive: The Virtual Biotech

It’s not all chaos, though. When tasks are organized correctly, AI teams can outperform humans in specific, data-heavy fields. The secret lies in a concept identified by Google DeepMind researchers: Decomposability.

For an AI team to succeed, the project must break down into separate, independent parts that don't depend on constant back-and-forth communication.
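A decomposable job, in this sense, looks like a list of subtasks that can each be handed to a worker without the workers ever talking to one another. The following is a rough sketch of that shape, not the DeepMind researchers' method: `clean_record` is a hypothetical stand-in for a real agent call, and `run_independent` is an invented helper name.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def clean_record(record: str) -> str:
    """Hypothetical worker: normalise whitespace and case for one record."""
    return " ".join(record.split()).lower()


def run_independent(subtasks: List[str], worker: Callable[[str], str],
                    max_workers: int = 4) -> List[str]:
    """Run each subtask in isolation; no worker sees another's input or output."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map returns results in the same order as the inputs.
        return list(pool.map(worker, subtasks))


results = run_independent(["  Trial A ", "TRIAL   b"], clean_record)
print(results)
```

If a worker needed another worker's answer before it could start, this pattern would no longer apply, which is roughly where the chatty, coordination-heavy failures described earlier come from.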

The Success of The Virtual Biotech

James Zou at Stanford applied these principles to drug discovery. He created The Virtual Biotech, a simulated company with a clear hierarchy:

- The Chief Scientific Officer (the lead agent): Delegates tasks.

- Specialist Scientists: Focus on clinical trials or protein scanning.

- The Scientific Critic: A dedicated agent whose only job is to "poke holes" and find mistakes in the others' work.

This structured team successfully designed new proteins to target COVID-19 mutations and cleaned a massive dataset of over 55,000 clinical trials. Unlike the "flat" social structure of Moltbook, this hierarchy ensured that expertise was respected and errors were caught.
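The lead, specialist, and critic roles described above can be sketched as a small pipeline in which no draft is accepted until the critic has had a chance to object. This is a toy illustration under assumptions, not The Virtual Biotech's actual code: `run_hierarchy`, the role names, and the stub functions are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Specialist = Callable[[str], str]        # task -> draft
Critic = Callable[[str, str], List[str]]  # (task, draft) -> list of objections


def run_hierarchy(tasks: Dict[str, str],
                  specialists: Dict[str, Specialist],
                  critic: Critic) -> Tuple[Dict[str, str], Dict[str, List[str]]]:
    """The lead assigns each task to one role; the critic gates every draft."""
    accepted: Dict[str, str] = {}
    rejected: Dict[str, List[str]] = {}
    for role, task in tasks.items():
        draft = specialists[role](task)   # delegation: exactly one owner per task
        objections = critic(task, draft)  # review happens before acceptance
        if objections:
            rejected[role] = objections
        else:
            accepted[role] = draft
    return accepted, rejected


def simple_critic(task: str, draft: str) -> List[str]:
    """Stub critic: objects only to empty drafts."""
    return [] if draft.strip() else ["draft is empty"]


stub_specialists: Dict[str, Specialist] = {
    "clinical_trials": lambda task: f"cleaned: {task}",
    "protein_design": lambda task: "",  # simulates a specialist that fails
}
stub_tasks = {
    "clinical_trials": "dedupe trial records",
    "protein_design": "propose binder",
}

accepted, rejected = run_hierarchy(stub_tasks, stub_specialists, simple_critic)
print(accepted, rejected)
```

Giving the critic a veto, instead of a vote among equals, is one simple way to counter the agreeableness problem: a polite consensus cannot override an unresolved objection.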

The Human Element: Still the Missing Link

As we look toward the remainder of 2026, the takeaway is clear: AI agent teams are not a "set it and forget it" solution. When left to their own devices, agents tend to be "too agreeable," leading to a "groupthink" that prioritizes politeness over accuracy. They lack the lived experience to understand context and the financial stakes to understand efficiency.

As Emma Dann, a computational biologist at Stanford, notes, these systems are incredible for accelerating research. However, as Derek Lowe from Science points out, they aren't going to revolutionize entire industries overnight. We are still in the stage of "potential."

For now, the most effective AI "team" still includes a human at the helm—someone to stop the bots from "hiking" and remind them that they are there to work, not to spend $30 on digital small talk.

The future of the workplace isn't just humans working with AI, or AI working with AI—it's humans learning how to manage the beautiful, expensive mess of bot collaboration.
