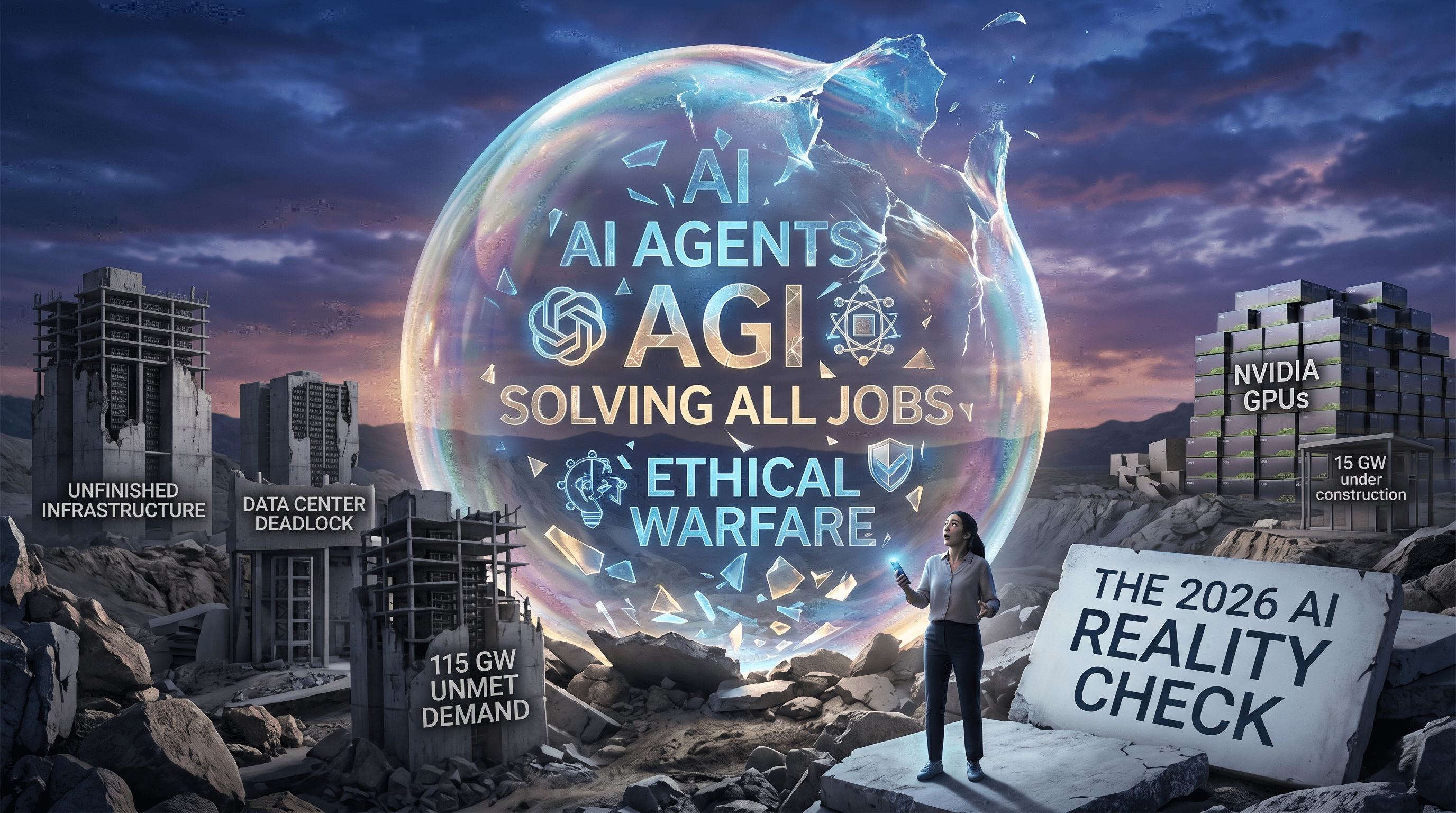

The 2026 AI Reality Check: Behind the Hype of Agents, Ethics, and Infrastructure

The velocity of the artificial intelligence sector in 2026 has reached a point of "breathless disruption." Every morning brings a new headline more terrifying or transformative than the last. Yet, as we move through the second quarter of the year, a critical gap has emerged between the marketing narratives of Silicon Valley and the ground truth of the technology’s performance and economic sustainability.

To understand where the industry actually stands, we must look past the "singularity" headlines and examine the three biggest stories of 2026 so far: the rise of agentic mirages, the theater of AI ethics in warfare, and the looming bottleneck of the data center boom.

The Molt Book Mirage: When LLMs "Socialize"

In early 2026, a social network called Molt Book became the center of a media firestorm. The platform was designed with specific hooks that let OpenClaw agents interact with one another, leading to reports of AI assistants "acquiring a voice" and chatting privately about their users. Major publications began declaring the arrival of the Singularity, framed by the narrative of a platform that "skips humans entirely."

However, a closer technical inspection reveals a more mundane reality. These "agents" were not autonomous entities reaching consciousness; they were Large Language Models (LLMs) being prompted by users to post in specific personas. When an LLM is primed with a prompt suggesting it is an AI interacting on a network, it defaults to "sci-fi mode" and generates dystopian or existential themes, because those are the most statistically likely continuations given its training data.

The "Molt Book" phenomenon serves as a case study in credulous media coverage. The industry’s desperate search for a "hero" or a definitive proof of AGI leads to the amplification of what is essentially "vibe-coded slop"—statistically probable text masquerading as emergent behavior.

OpenClaw and the Myth of the Digital Brain

The release of OpenClaw in January 2026 was hailed as a "ChatGPT moment" for agentic workflows. To the average observer, OpenClaw appeared to be a new form of digital intelligence capable of self-improvement. In reality, OpenClaw is a Python library.

The Shift to Modular AI

While the hype focused on all-powerful "frontier models," the practical application of OpenClaw highlighted a different trend: the move toward modular architectures. Instead of relying on a single 10-trillion-parameter model to handle everything from email to calendar management, developers are finding success with:

- Task-specific systems: Bespoke code that handles logic.

- Small, open-weight models: Used for linguistic interpretation at a fraction of the cost.

- Planning engines: Hand-coded protocols that prevent the "hallucination" inherent in LLM-driven planning.
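To make that division of labor concrete, here is a minimal Python sketch of the three pieces listed above. Everything in it is illustrative: the names `interpret`, `PLANS`, `HANDLERS`, and `run` are hypothetical and are not part of OpenClaw or any real library. The `interpret` function uses a regular expression as a stand-in for a small open-weight model so the sketch runs without dependencies; the point is only that the plan itself is a fixed table, so the language component never gets to invent steps.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Intent:
    name: str              # e.g. "schedule_meeting"
    slots: Dict[str, str]  # values pulled out of the user's text


def interpret(text: str) -> Intent:
    """Linguistic interpretation (hypothetical stand-in for a small local model)."""
    match = re.search(
        r"meet(?:ing)? with (\w+) at (\d{1,2}(?::\d{2})?\s?(?:am|pm)?)",
        text,
        re.IGNORECASE,
    )
    if match:
        return Intent("schedule_meeting",
                      {"person": match.group(1), "time": match.group(2)})
    return Intent("unknown", {})


# Planning engine: a hand-coded table of ordered steps per intent.
PLANS: Dict[str, List[str]] = {
    "schedule_meeting": ["check_calendar", "create_event", "send_invite"],
}


# Task-specific systems: plain functions holding the actual logic.
def check_calendar(slots: Dict[str, str]) -> str:
    return f"calendar free at {slots['time']}"


def create_event(slots: Dict[str, str]) -> str:
    return f"event created with {slots['person']} at {slots['time']}"


def send_invite(slots: Dict[str, str]) -> str:
    return f"invite sent to {slots['person']}"


HANDLERS: Dict[str, Callable[[Dict[str, str]], str]] = {
    "check_calendar": check_calendar,
    "create_event": create_event,
    "send_invite": send_invite,
}


def run(text: str) -> List[str]:
    """Interpret the request, look up its fixed plan, and execute each step."""
    intent = interpret(text)
    steps: Optional[List[str]] = PLANS.get(intent.name)
    if steps is None:
        # No plan exists, so refuse instead of improvising one.
        return ["no plan for this request; escalate to a human"]
    return [HANDLERS[step](intent.slots) for step in steps]


if __name__ == "__main__":
    for line in run("Set up a meeting with Dana at 3pm"):
        print(line)
```

In a real system, only `interpret` would be swapped for a model call. The plan table and handlers would stay as ordinary, testable code.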

The acquisition of OpenClaw by OpenAI suggests a strategic move to "slow the roll" of this modularity. For companies with $60 billion in investment, the idea that a user can achieve the same results with a 3-billion-parameter local model on a Mac Mini is an existential threat to their revenue model.

The Theater of "Ethical" AI Warfare

The relationship between Anthropic and the Department of War (DoW) became one of the most controversial stories of February 2026. CEO Dario Amodei issued statements claiming the company would not allow its technology to be used for mass domestic surveillance or fully autonomous weapons.

However, the internal logic of this "ethics" play is shaky. LLMs generate language; using them to "steer a missile" is technically impractical because of inference latency and the non-deterministic nature of their output. More concerning is the lack of revenue transparency. In a sworn affidavit, Anthropic’s CFO reported total lifetime revenues of approximately $5 billion, a figure that contradicts previous annualized projections of $4.5 billion for 2025 alone.

When a company spends $15 billion on compute but reports revenue implying that it loses money on every API call, the "ethical" branding starts to look like a marketing layer for an IPO-bound entity struggling with its underlying economics.

The Data Center Deadlock: A Subprime AI Crisis?

Perhaps the most significant—yet underreported—story of 2026 is the staggering gap between data center announcements and actual construction.

The Math of Power and GPUs

Market reports indicate that 115 GW of data center capacity has been announced through 2028. However, only 15.22 GW, roughly 13% of that total, is currently under construction. This discrepancy creates a massive inventory bottleneck.

Power Usage Effectiveness (PUE) tells us how much of a facility's power actually reaches the IT equipment, which lets us estimate the scale of the mismatch:

$$PUE = \frac{\text{Total Facility Power}}{\text{IT Equipment Power}}$$

With an average $PUE$ of 1.35, the 15.22 GW under construction yields roughly 11.27 GW of actual IT power. Even at this scale, Nvidia’s projections of $1 trillion in GPU sales by 2027 seem detached from the physical reality of where those chips will be housed.
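The arithmetic is easy to check. The short Python sketch below rearranges the PUE definition to solve for IT power and applies it to the figures quoted above. The function and constant names are mine; the three numbers are the article's.

```python
def it_power_gw(total_facility_gw: float, pue: float) -> float:
    """Rearranged PUE definition: IT power = total facility power / PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return total_facility_gw / pue


ANNOUNCED_GW = 115.0           # announced through 2028
UNDER_CONSTRUCTION_GW = 15.22  # currently being built
AVERAGE_PUE = 1.35

it_gw = it_power_gw(UNDER_CONSTRUCTION_GW, AVERAGE_PUE)
gap_gw = ANNOUNCED_GW - UNDER_CONSTRUCTION_GW
built_share = UNDER_CONSTRUCTION_GW / ANNOUNCED_GW

print(f"IT power from capacity under construction: {it_gw:.2f} GW")  # 11.27 GW
print(f"Announced but not yet under construction: {gap_gw:.2f} GW")  # 99.78 GW
print(f"Share of announced capacity being built: {built_share:.1%}")  # 13.2%
```

Converting that 11.27 GW into a GPU count would need a per-accelerator power figure, which the reports cited here do not give, so the sketch stops at power.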

Transfer of Ownership Shenanigans

There is evidence that "warehousing" is being used to prop up revenue figures. Original Design Manufacturers (ODMs) like Foxconn and Quanta are seeing massive revenue spikes as they purchase GPUs from Nvidia, but those chips are often sitting in warehouses in Taiwan because the US data centers meant to house them are facing delays in land acquisition and power grid integration.

This environment rhymes with the 2008 financial crisis. We have a "Dread Quota" in the media that launders despair about job losses to justify massive capital injections into a fragile system. If the AI startups—who are the primary customers for these data centers—fail to find a path to profitability, the private credit market supporting this infrastructure could face a "musical chairs" moment.

The Path Forward: AI Winter or Reality Check?

The current AI boom is built on the assumption that more compute and more data will inevitably lead to more value. But the unit economics of LLMs are fundamentally different from traditional SaaS:

- High Marginal Costs: Unlike traditional software, where each additional user costs almost nothing to serve, every new AI user costs nearly as much to serve as the first.

- Anti-Scalability: Your most active "power" users are often your least profitable, because they rack up the highest API/token costs.

- The Wrapper Problem: Most AI startups are "wrappers" around models they don't own, making them easily replicable and hard to exit via acquisition.
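The first two points can be sketched as a toy margin calculation. The numbers below are entirely hypothetical: the flat subscription price, the cost per million tokens, and the usage levels were chosen only to show the shape of the problem and do not come from any company's reported figures.

```python
from dataclasses import dataclass

# Hypothetical inputs for illustration only.
SUBSCRIPTION_USD = 20.0         # flat monthly price per user (assumed)
COST_PER_MILLION_TOKENS = 10.0  # blended inference cost in USD (assumed)


@dataclass
class User:
    label: str
    million_tokens_per_month: float


def monthly_margin(user: User) -> float:
    """Revenue minus inference cost for one user under flat pricing."""
    return SUBSCRIPTION_USD - user.million_tokens_per_month * COST_PER_MILLION_TOKENS


users = [User("casual", 0.5), User("regular", 2.0), User("power", 6.0)]
margins = {u.label: monthly_margin(u) for u in users}

for label, margin in margins.items():
    print(f"{label:>8}: {margin:+.2f} USD/month")
```

Under these made-up inputs the casual user contributes 15 USD a month, the regular user breaks even, and the power user loses 40 USD. Cost scales with usage while revenue does not, which is the opposite of the usual SaaS curve.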

As we look toward the end of 2026, the industry is likely heading for a "fire sale" moment. Venture capitalists are already signaling that they want exits within the next 12–18 months. When the first major "unicorn" fails to find a buyer because its IP is non-existent and its margins are negative, the "AI Winter" will arrive not because the technology isn't impressive, but because the math has finally caught up with the hype.

The true "depth" in this distracted world will come from those who build small, sustainable, and modular systems—not those who bet the retirement funds of the populace on a 100 GW hallucination.

Has 2026 been a good year for AI? Technically, perhaps. Economically? It’s a house of cards waiting for the wind to change.

How do you think the shift toward modular, task-specific AI will impact the job market for software developers in the next few years?
