Monitoring LLM Behavior: The Enterprise Guide to Conquering the Stochastic Challenge

An Insider's Report on Building the AI Evaluation Stack for Production

Traditional software engineering operates on a comforting premise of predictability: Input A passed through Function B will always yield Output C. This fundamental determinism is the bedrock upon which engineers have built decades of robust, reliable unit testing.

Generative Artificial Intelligence (AI) and Large Language Models (LLMs) break this paradigm. Their outputs are stochastic: the exact same prompt sent to a model can yield a brilliant, nuanced answer on a Monday and a complete hallucination on a Tuesday. This variability defeats the exact-match assertions at the heart of the unit testing frameworks that engineering teams know, trust, and rely upon.

To successfully ship enterprise-ready AI applications in the United States and globally, engineers can no longer rely on simple "vibe checks"—manual tests that look good in a developer environment but fail spectacularly under real-world customer traffic. Product builders must adopt a fundamentally new infrastructure layer: The AI Evaluation Stack.

Drawing from extensive experience shipping AI products for Fortune 500 enterprise customers in high-stakes, highly regulated industries, this report outlines the critical frameworks needed to monitor LLM behavior, prevent drift, and manage refusal patterns. In the enterprise sector, an AI "hallucination" isn't a funny quirk; it is a massive legal and compliance risk.

Defining the AI Evaluation Paradigm

Traditional software tests are binary assertions—they either pass or they fail. While some AI evaluations still utilize binary asserts, the vast majority must evaluate outputs on a gradient. An AI evaluation is not a single, isolated script. It is a highly structured pipeline of assertions, ranging from strict code syntax validations to highly nuanced semantic checks, all working together to verify the AI system’s intended function.

| Feature | Traditional Software Testing | AI Evaluation Stack |
| --- | --- | --- |
| Nature of Output | Deterministic (Always the same) | Stochastic (Highly variable) |
| Testing Method | Binary Pass/Fail Unit Tests | Gradient Scoring & Assertion Pipelines |
| Tooling | Standard CI/CD scripts | LLM-as-a-Judge & Hybrid Telemetry |
| Failure Tolerance | Zero tolerance for syntax errors | Gradients of acceptability based on rubrics |

To build a pipeline that is both robust and cost-effective, assertions must be separated into two distinct architectural layers.

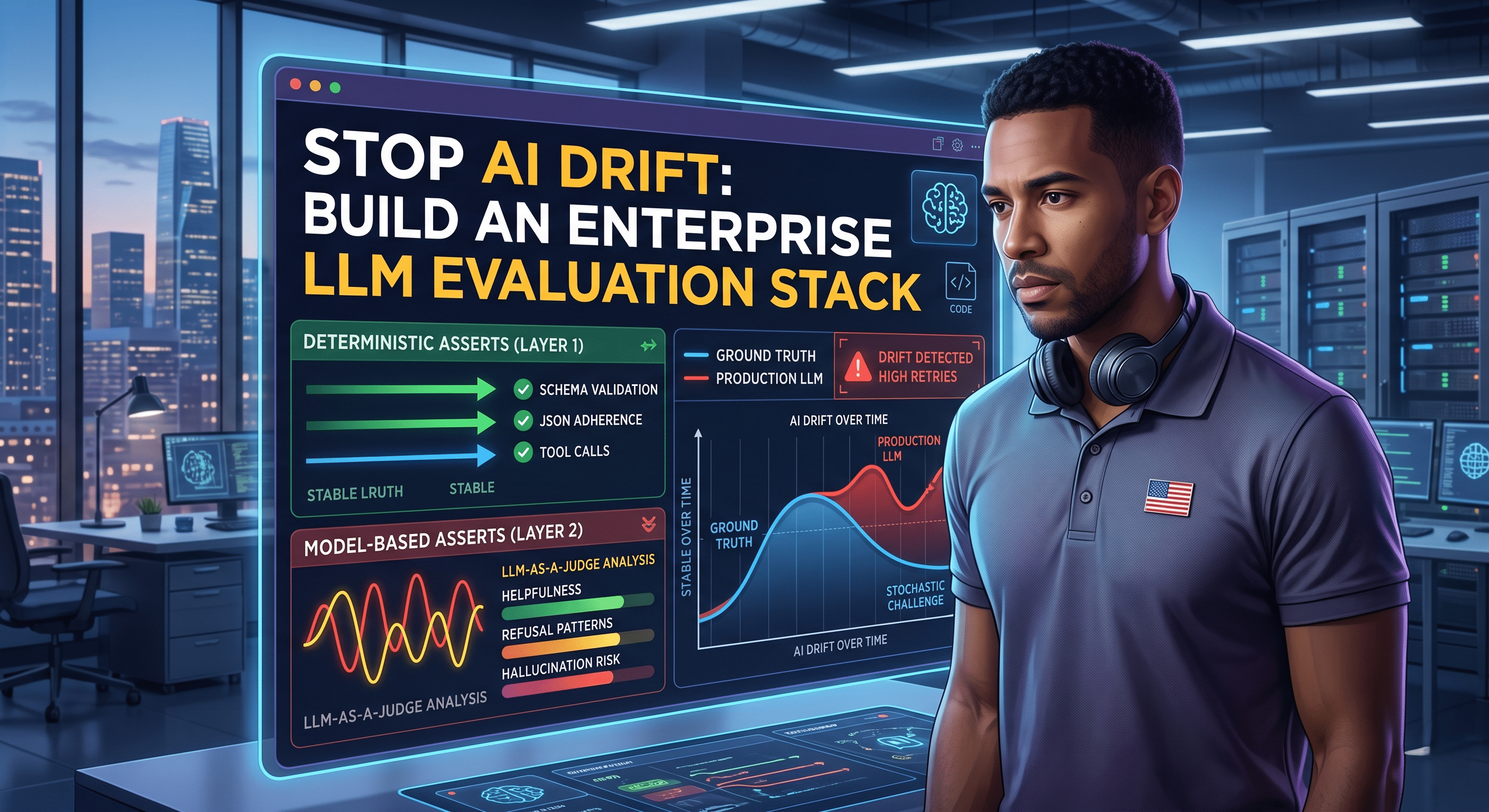

Layer 1: Deterministic Assertions

A surprisingly large share of production AI failures are not complex, semantic "hallucinations." They are basic, structural syntax and routing failures. Deterministic assertions serve as the pipeline's first line of defense, utilizing traditional code and Regular Expressions (Regex) to validate structural integrity.

Instead of asking a subjective question like whether a response is "helpful," Layer 1 assertions ask strict, unyielding binary questions:

- Did the model generate the correct JSON key/value schema?

- Did the AI invoke the correct tool call with the required arguments?

- Did the system successfully slot-fill a valid unique identifier (GUID) or email address?
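
The three questions above translate directly into cheap code checks. The following is a minimal sketch, assuming a hypothetical output schema with `customer_id` and `email` keys (neither name comes from a real system) and a deliberately simple email pattern rather than full address validation:

```python
import json
import re
import uuid

# Hypothetical schema for illustration: required keys and their expected types.
REQUIRED_KEYS = {"customer_id": str, "email": str}

# Intentionally simple pattern; it only rejects obviously malformed addresses.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def is_valid_guid(value: str) -> bool:
    """Return True if value is a UUID in canonical hyphenated form."""
    try:
        return str(uuid.UUID(value)) == value.lower()
    except (ValueError, AttributeError, TypeError):
        return False


def check_output(raw: str) -> list[str]:
    """Run the Layer 1 checks and return a list of failure reasons."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(payload, dict):
        return ["top-level JSON value is not an object"]

    failures = []
    for key, expected_type in REQUIRED_KEYS.items():
        if not isinstance(payload.get(key), expected_type):
            failures.append(f"missing or mistyped key: {key}")
    if failures:
        return failures  # no point validating values that are absent

    if not is_valid_guid(payload["customer_id"]):
        failures.append("customer_id is not a canonical GUID")
    if not EMAIL_RE.match(payload["email"]):
        failures.append("email is not well-formed")
    return failures
```

An empty list means the output passed every structural check; any entry is a binary failure with a human-readable reason attached.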

Example: Layer 1 Deterministic Tool Call Assertion
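
The original payload for this example is not reproduced here. As an illustrative stand-in, the sketch below assumes a hypothetical `send_email` tool whose call must arrive as a JSON object with `name` and `arguments` fields, and a model that replies in prose instead:

```python
import json

# Hypothetical expectation: the agent should call `send_email` with these arguments.
EXPECTED_TOOL = "send_email"
REQUIRED_ARGS = {"to", "subject", "body"}


def assert_tool_call(raw_output: str) -> tuple[bool, str]:
    """Return (passed, reason) for a single model output."""
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError:
        return False, "output is not JSON (model likely replied in prose)"
    if not isinstance(call, dict) or call.get("name") != EXPECTED_TOOL:
        return False, f"expected a call to {EXPECTED_TOOL}"
    arguments = call.get("arguments")
    if not isinstance(arguments, dict):
        return False, "arguments missing"
    missing = REQUIRED_ARGS - set(arguments)
    if missing:
        return False, f"missing arguments: {sorted(missing)}"
    return True, "ok"


# The failing case: conversational text instead of an API payload.
actual_output = "Sure! I'd be happy to send that email for you."
passed, reason = assert_tool_call(actual_output)
print(passed, reason)  # passed is False: the output never parses as JSON
```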

In the scenario above, the test fails instantly. The model generated conversational text instead of the necessary API payload. Architecturally, deterministic assertions must operate on a computationally inexpensive "fail-fast" principle. If a downstream API requires a specific JSON schema, a malformed string is a fatal error. By failing the evaluation immediately at Layer 1, engineering teams prevent the pipeline from triggering expensive semantic checks or wasting valuable human review time.

Layer 2: Model-Based Assertions

When an AI output successfully passes Layer 1 deterministic checks, the pipeline must then evaluate semantic quality. Because natural language is incredibly fluid, traditional code cannot easily assert if a response is "empathetic," "accurate," or "actionable."

This reality introduces model-based evaluation, known in the industry as LLM-as-a-Judge. While using one non-deterministic system to evaluate another might feel counterintuitive to a traditional developer, it is an exceptionally powerful architectural pattern for enterprise use cases requiring high nuance.

Human reviewers are excellent at discerning this nuance, but human review simply cannot scale to evaluate tens of thousands of automated CI/CD test cases. Therefore, the LLM-as-a-Judge becomes the scalable proxy for human discernment.

Three Critical Inputs for Model-Based Assertions

Model-based assertions only yield reliable, actionable data when the judging LLM is provisioned with three specific inputs:

- A State-of-the-Art Reasoning Model: The judging model must possess superior reasoning capabilities compared to the production model. If your consumer-facing app runs on a smaller, highly optimized model to reduce latency, the judge must be a frontier-tier reasoning model to accurately approximate human-level assessment.

- A Strict Assessment Rubric: Vague evaluation prompts (e.g., "Rate how good this answer is from 1 to 5") yield noisy, stochastic evaluations. A robust rubric explicitly defines the precise gradients of failure and success. For instance, a "Helpfulness" rubric should define a Score of 1 as an irrelevant refusal, a Score of 2 as addressing the prompt but lacking actionable steps, and a Score of 3 as providing actionable next steps strictly within the provided context window.

- Ground Truth (Golden Outputs): While the rubric provides the rules of the game, a human-vetted "expected answer" acts as the answer key. When the LLM-Judge can compare the production model's output directly against a verified Golden Output, its scoring reliability and consistency increase dramatically.
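
The rubric and the golden output can be combined into a single judge prompt. The sketch below is one possible shape, not a prescribed format: the rubric text is adapted from the "Helpfulness" example above, the JSON reply format is an assumption, and `call_model` is a hypothetical function standing in for whichever provider client the judge runs on.

```python
import json
import re
from typing import Callable, Optional

# Rubric adapted from the "Helpfulness" example; only scores 1-3 are defined there.
RUBRIC = """Score 1: irrelevant refusal.
Score 2: addresses the prompt but lacks actionable steps.
Score 3: provides actionable next steps strictly within the provided context."""


def build_judge_prompt(question: str, answer: str, golden: str) -> str:
    """Assemble rubric, golden output, and candidate answer into one prompt."""
    return (
        "You are grading an assistant's answer for helpfulness.\n"
        f"Rubric:\n{RUBRIC}\n\n"
        f"Question:\n{question}\n\n"
        f"Golden output (human-vetted):\n{golden}\n\n"
        f"Answer to grade:\n{answer}\n\n"
        'Reply with JSON only: {"score": <1-3>, "reason": "<one sentence>"}'
    )


def parse_verdict(judge_reply: str) -> Optional[int]:
    """Extract the score; return None if the judge's reply is unusable."""
    match = re.search(r"\{.*\}", judge_reply, re.DOTALL)
    if not match:
        return None
    try:
        score = json.loads(match.group(0)).get("score")
    except (json.JSONDecodeError, AttributeError):
        return None
    if isinstance(score, int) and not isinstance(score, bool) and 1 <= score <= 3:
        return score
    return None


def judge(question: str, answer: str, golden: str,
          call_model: Callable[[str], str]) -> Optional[int]:
    """Grade one answer. `call_model` is a hypothetical prompt -> text function."""
    return parse_verdict(call_model(build_judge_prompt(question, answer, golden)))
```

Treating an unparseable verdict as `None` rather than as a low score keeps judge failures separate from production-model failures when results are aggregated.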

Architecture: The Offline vs. Online Pipeline

A robust, enterprise-grade evaluation architecture requires two complementary pipelines running in tandem. The offline pipeline provides the foundational baseline and deterministic constraints required to evaluate stochastic models safely before launch. The online pipeline monitors post-deployment telemetry in the wild.

The Offline Evaluation Pipeline

The primary objective of the offline pipeline is regression testing—identifying failures, behavioral drift, and latency issues before they reach production. Deploying an enterprise LLM feature without a gating offline evaluation suite is a massive architectural anti-pattern; it is the AI equivalent of merging uncompiled code directly into a main deployment branch.

1. Curating the Golden Dataset

The lifecycle begins by curating a "golden dataset"—a static, version-controlled repository containing 200 to 500 test cases representing the AI's entire operational envelope. Each case pairs an exact input payload with an expected "golden output" (ground truth). This dataset must reflect expected real-world traffic distributions in the US market, incorporating "happy-path" interactions, extreme edge cases, jailbreak attempts, and adversarial inputs. Evaluating a model's refusal capabilities under stress is a strict compliance requirement.

While synthetic data generation can accelerate this process, relying entirely on AI-generated test cases introduces massive risks of data contamination and bias. A Human-in-the-Loop (HITL) architecture is mandatory here.
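
One way to make the dataset version-controllable and to enforce the human-review requirement is to store one JSON record per line and validate on load. This is a sketch under assumptions: the field names and the `human_reviewed` flag are illustrative, and the category labels simply mirror the case types listed above.

```python
import json
from collections import Counter
from dataclasses import dataclass

# Categories taken from the text; the identifiers themselves are illustrative.
CATEGORIES = {"happy_path", "edge_case", "jailbreak", "adversarial"}


@dataclass(frozen=True)
class GoldenCase:
    case_id: str
    category: str
    input_payload: str
    golden_output: str
    human_reviewed: bool  # synthetic cases must be vetted before they count


def load_cases(jsonl_text: str) -> list[GoldenCase]:
    """Parse one JSON object per line and validate each record."""
    cases = []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue
        case = GoldenCase(**json.loads(line))
        if case.category not in CATEGORIES:
            raise ValueError(f"{case.case_id}: unknown category {case.category!r}")
        if not case.human_reviewed:
            raise ValueError(f"{case.case_id}: not yet human-reviewed")
        cases.append(case)
    return cases


def category_mix(cases: list[GoldenCase]) -> dict[str, float]:
    """Share of the dataset per category, for comparison with expected traffic."""
    counts = Counter(case.category for case in cases)
    return {name: counts[name] / len(cases) for name in sorted(counts)}
```

Comparing `category_mix` against the expected production distribution gives a quick check that adversarial and edge cases are neither missing nor overrepresented.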

2. Defining Evaluation Criteria and Short-Circuit Logic

Engineers must compute a composite score for each model output by assigning weighted points across a hybrid of Layer 1 and Layer 2 asserts.

Consider an AI agent designed to send an email. The evaluation framework might utilize a 10-point scoring system requiring an 8/10 to pass. Crucially, the pipeline must enforce strict short-circuit evaluation. If the model fails a critical deterministic assertion (like generating a broken JSON schema), the system instantly fails the entire test case (0/10). There is zero architectural value in paying an LLM-Judge to assess the semantic politeness of an email if the underlying API call is structurally broken.
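
The weighted scoring and short-circuit rule can be sketched as follows. The point values, the assertion names, and the `polite_enough` stand-in for an LLM-Judge call are all assumptions chosen to match the 10-point, 8-to-pass example; they are not taken from a real pipeline.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class Assertion:
    name: str
    points: int
    check: Callable[[str], bool]
    critical: bool = False  # a failed critical assertion zeroes the test case


def score_case(output: str, assertions: list[Assertion],
               pass_mark: int = 8) -> tuple[int, bool]:
    """Return (score, passed). Assertions run in order, cheapest first."""
    score = 0
    for assertion in assertions:
        if assertion.check(output):
            score += assertion.points
        elif assertion.critical:
            return 0, False  # short-circuit: later, expensive checks never run
    return score, score >= pass_mark


def is_json(output: str) -> bool:
    try:
        json.loads(output)
        return True
    except json.JSONDecodeError:
        return False


def has_recipient(output: str) -> bool:
    payload = json.loads(output)  # safe: runs only after is_json passed
    return isinstance(payload, dict) and "to" in payload


judge_calls: list[str] = []


def polite_enough(output: str) -> bool:
    """Stand-in for a Layer 2 LLM-Judge call; records each invocation."""
    judge_calls.append(output)
    return True


EMAIL_AGENT_ASSERTIONS = [
    Assertion("valid_json", 4, is_json, critical=True),
    Assertion("has_recipient", 3, has_recipient),
    Assertion("polite_tone", 3, polite_enough),
]
```

With this ordering, a prose reply such as `"Sure, sending now!"` scores 0/10 and `judge_calls` stays empty, so no judge cost is incurred for a structurally broken output.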

3. Executing the Pipeline and Aggregating Signals

The suite runs as a blocking CI/CD step on each pull request, iterating through the golden dataset and aggregating the results. For enterprise-grade applications, the baseline pass rate should typically exceed 95%, rising to 99% or higher for strict compliance or high-risk domains such as healthcare or finance.
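
A blocking gate reduces to comparing the aggregate pass rate with a threshold and returning a non-zero exit code on failure. The sketch below assumes each golden case has already been reduced to a boolean; the function names are illustrative.

```python
def pass_rate(results: list[bool]) -> float:
    """Fraction of golden cases that passed."""
    if not results:
        raise ValueError("no results: refusing to pass an empty evaluation run")
    return sum(results) / len(results)


def gate(results: list[bool], threshold: float = 0.95) -> int:
    """Return a process exit code: 0 lets the pull request proceed, 1 blocks it."""
    rate = pass_rate(results)
    print(f"pass rate {rate:.1%} (threshold {threshold:.0%})")
    return 0 if rate >= threshold else 1
```

In a CI script, the return value would typically be passed to `sys.exit`. For a 300-case dataset, a 95% threshold tolerates at most 15 failing cases, while a 99% threshold tolerates at most 3.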

4. Assessment, Iteration, and Alignment

Based on aggregated failure data, teams conduct root-cause analyses. This drives iterative updates to system prompts, tool descriptions, or hyperparameters. Because LLMs are non-deterministic, fixing one specific edge case can easily cause unforeseen degradations elsewhere. Therefore, any system modification necessitates a full regression test.

The Online Evaluation Pipeline

While the offline pipeline is the strict pre-deployment gatekeeper, the online pipeline is the post-deployment telemetry system. Its objective is to monitor real-world user behavior, capture emergent edge cases, and quantify model drift over time.

Architects must instrument applications to capture distinct categories of telemetry:

- Explicit User Signals: Direct feedback like "thumbs up/down." Disproportionate negative feedback is the most critical leading indicator of system degradation. Verbatim in-app feedback must be parsed to identify novel failure modes.

- Implicit Behavioral Signals: Silent failures where users abandon the workflow. High regeneration and retry rates indicate the AI failed to resolve the user's intent. Unusually high refusal rates ("I can’t do that") indicate overly aggressive safety filters rejecting perfectly benign user queries.

- Production Deterministic Asserts (Synchronous): Because code checks execute in milliseconds, teams can seamlessly reuse Layer 1 offline asserts to synchronously evaluate 100% of production traffic. Anomalous spikes in malformed outputs are the earliest warning sign of silent model drift or provider-side API changes.

- Production LLM-as-a-Judge (Asynchronous): Production LLM-Judges must never execute synchronously on the critical path, where they would add the judge's own latency and compute cost to every request. Instead, if Data Privacy Agreements (DPAs) permit, a background LLM-Judge asynchronously samples a fraction of daily sessions, grading outputs against the offline rubric to generate a continuous quality dashboard.
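
Two of these signals are simple to compute in code. The sketch below shows deterministic, hash-based selection of sessions for the background judge, and a refusal-rate metric. The 5% default rate and the refusal phrasings are assumptions for illustration; a production phrase list would need tuning per model.

```python
import hashlib
import re

# Hypothetical refusal phrasings, matched case-insensitively.
REFUSAL_RE = re.compile(r"\bI\s+(?:can['’]?t|cannot|am unable to)\b", re.IGNORECASE)


def in_judge_sample(session_id: str, rate: float = 0.05) -> bool:
    """Deterministically select roughly `rate` of sessions for async judging.

    Hashing the session ID means the same session is always in or out of the
    sample, regardless of which worker or retry evaluates it.
    """
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < rate * 2**64


def refusal_rate(responses: list[str]) -> float:
    """Fraction of responses that look like refusals."""
    if not responses:
        return 0.0
    refusals = sum(bool(REFUSAL_RE.search(text)) for text in responses)
    return refusals / len(responses)
```

Sessions for which `in_judge_sample` returns `True` would be placed on a queue for a background worker, keeping the judge entirely off the request path.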

Engineering the Feedback Loop (The "Flywheel")

AI evaluation pipelines are not "set-it-and-forget-it" infrastructure. Without continuous updates, static datasets suffer from rapid concept drift as US consumer behavior evolves and customers discover novel use cases.

To make the system smarter over time, engineers must architect a closed feedback loop:

- Capture: A user triggers an explicit negative signal or an implicit behavioral flag in production.

- Triage: The specific session log is automatically flagged and routed for human review.

- Root-Cause Analysis: A domain expert investigates the failure and updates the AI system (prompts, RAG context, etc.) to handle similar requests.

- Dataset Augmentation: The novel user input, paired with the newly corrected expected output, is appended to the offline Golden Dataset alongside several synthetic variations.

- Regression Testing: The model is continuously re-evaluated against this newly discovered edge case in all future deployment runs.
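
The dataset augmentation step can be sketched as an idempotent append keyed on the normalized user input, so the same production failure reported twice does not inflate the dataset. The record fields and the in-memory `dict` are illustrative assumptions; synthetic variations and human sign-off are left out of this sketch.

```python
import hashlib
import json


def case_key(user_input: str) -> str:
    """Stable de-duplication key: hash of the lowercased, whitespace-normalized input."""
    normalized = " ".join(user_input.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:16]


def augment(dataset: dict[str, dict], user_input: str,
            corrected_output: str, source_session: str) -> bool:
    """Add a triaged production failure. Returns False if it is already present."""
    key = case_key(user_input)
    if key in dataset:
        return False
    dataset[key] = {
        "input_payload": user_input,
        "golden_output": corrected_output,
        "origin": "production",
        "source_session": source_session,
    }
    return True


def to_jsonl(dataset: dict[str, dict]) -> str:
    """Serialize in a stable order so version-control diffs stay small."""
    return "\n".join(
        json.dumps({"case_id": key, **record}, sort_keys=True)
        for key, record in sorted(dataset.items())
    )
```

Recording `source_session` keeps each regression case traceable back to the production log that motivated it.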

Building an evaluation pipeline without continuously monitoring production logs and updating datasets is fundamentally insufficient. It creates a dangerous illusion: high offline pass rates masking a rapidly degrading real-world user experience.

Conclusion: The New "Definition of Done"

In the modern era of generative AI, a software feature or enterprise product is no longer "done" simply because the code compiles and the LLM prompt returns a coherent response in a developer console.

An AI feature is only truly done when a rigorous, automated evaluation pipeline is deployed, stable, and acting as a gatekeeper. The model must consistently pass against both a curated golden dataset and a constantly evolving repository of newly discovered production edge cases.

This report serves as your blueprint for building that reality. From architecting offline regression pipelines to managing online telemetry and the continuous feedback flywheel, you now have the structural foundation required to deploy AI systems with absolute confidence. It is time to stop guessing whether your models are degrading in production, and start measuring.
