The AI Agent Shift: OpenClaw Adopts DeepSeek V4 as the Tech World Assesses Huawei’s Bold Hardware Move

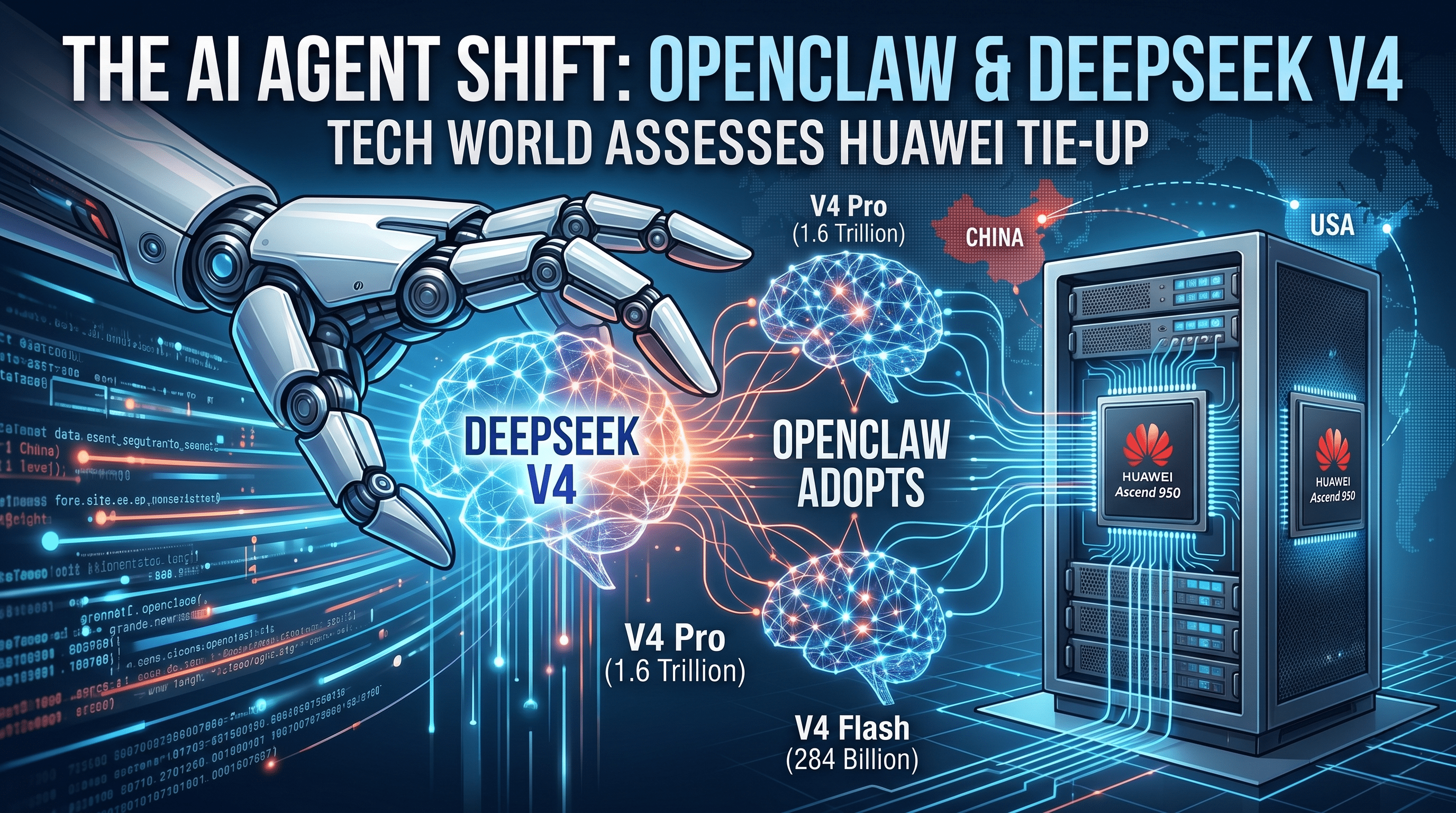

The artificial intelligence landscape shifted again this week. In a move that highlights the rapidly improving capabilities of open-source AI, the popular AI agent OpenClaw has adopted DeepSeek’s newly released V4 models. While software developers and tech enthusiasts in the United States are already putting the new tools to use, the broader tech community, from Silicon Valley boardrooms to Washington think tanks, is scrutinizing the geopolitical implications.

At the center of this conversation is a deepening, highly optimized tie-up between DeepSeek and Chinese telecommunications and hardware giant Huawei Technologies. As the US tech ecosystem watches closely, the integration of these models into everyday agentic workflows presents a fascinating case study in both software innovation and hardware resilience.

OpenClaw’s Strategic Pivot: Enhancing the Autonomous Agent

In a major update rolled out this past Sunday, OpenClaw announced that it has fundamentally expanded its model catalog. The platform has officially adopted DeepSeek’s V4 Flash as its default operational model, while also offering access to the massive, flagship V4 Pro model for users requiring heavy-duty computational reasoning.
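The default-plus-flagship arrangement described above can be pictured with a minimal sketch. Everything in it is an assumption made for illustration: the model identifier strings, the `Task` type, and the `needs_deep_reasoning` flag are hypothetical, and the article does not describe how OpenClaw actually selects a model.

```python
from dataclasses import dataclass

# Hypothetical identifiers: the article does not give OpenClaw's real model IDs
# or its configuration format.
DEFAULT_MODEL = "deepseek-v4-flash"
HEAVY_MODEL = "deepseek-v4-pro"


@dataclass(frozen=True)
class Task:
    description: str
    needs_deep_reasoning: bool = False  # assumed flag, set by the user or caller


def pick_model(task: Task) -> str:
    """Return the fast default unless the task explicitly asks for the flagship."""
    return HEAVY_MODEL if task.needs_deep_reasoning else DEFAULT_MODEL


if __name__ == "__main__":
    print(pick_model(Task("rename files in a folder")))
    print(pick_model(Task("refactor a large codebase", needs_deep_reasoning=True)))
```

The point of the design is that the cheaper, lower-latency model handles routine steps, and the larger model is reserved for requests that opt in to it.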

For the uninitiated, OpenClaw has become a staple for developers and businesses looking to automate complex, multi-step digital tasks. The integration of the V4 models brings several critical upgrades to the platform's ecosystem:

- Multi-Step Task Consistency: One of the most significant hurdles for autonomous AI agents is losing context or drifting off-task during prolonged, multi-stage workflows. OpenClaw said it has optimized how the DeepSeek V4 models maintain consistency from the first step of a task to the last.

- Workflow Integrations: Beyond just upgrading the underlying brain of the agent, OpenClaw has integrated new functional capabilities, including seamless operation within Google Meet. This pushes the agent further into enterprise utility, allowing it to interact directly with standard US corporate tech stacks.

This move underscores a growing trend among AI agent platforms: shifting away from exclusive reliance on proprietary Western models and embracing highly capable, cost-effective open-source alternatives.

Decoding DeepSeek V4: Massive Parameters and Ecosystem Synergy

OpenClaw’s software update came just two days after Hangzhou-based DeepSeek officially open-sourced its V4 models, sending ripples through the global developer community. The sheer scale of these models is a testament to the rapid pace of algorithmic development.

- DeepSeek V4 Pro: The flagship model boasts an astonishing 1.6 trillion parameters, making it the company’s largest and most complex release by that metric to date. Models of this size are designed to handle immense contextual reasoning, advanced coding tasks, and deep data analysis.

- DeepSeek V4 Flash: The leaner V4 Flash model has 284 billion parameters. It is designed for speed and low-latency responses, making it a natural default engine for an agent like OpenClaw that needs to execute commands quickly.
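To put those parameter counts in perspective, the short calculation below estimates how much storage the weights alone would need at a few common numeric precisions. The parameter totals come from the figures above. The bytes-per-parameter values are generic assumptions, not details from DeepSeek, and the estimate ignores runtime memory such as caches and activations.

```python
GB = 10**9  # decimal gigabytes

# Total parameter counts as reported in the article.
PARAMS = {"V4 Pro": 1.6e12, "V4 Flash": 284e9}

# Bytes per parameter for common storage precisions (assumed, not from the article).
BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}


def weight_size_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / GB


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for precision, b in BYTES_PER_PARAM.items():
            print(f"{model} @ {precision}: ~{weight_size_gb(n, b):,.0f} GB")
```

At 16-bit precision this works out to roughly 3,200 GB for V4 Pro and 568 GB for V4 Flash, which is why models of this size are typically served across many accelerators rather than a single card.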

Furthermore, DeepSeek designed the V4 architecture with immediate ecosystem integration in mind. On Friday, the company confirmed that the V4 models were explicitly optimized for mainstream agent tools at launch. This includes not just OpenClaw but also Tencent Holdings’ CodeBuddy and, notably, Anthropic’s Claude Code, a sign that Chinese developers are building models meant to work with leading American AI tooling.

The Huawei Connection: Testing China's Home-Grown Hardware

While the software metrics are impressive, the hardware story is what has captured the attention of industry analysts and policymakers in the US. The DeepSeek V4 release is not just a software milestone; it is serving as a high-stakes stress test for China’s domestic AI hardware, specifically through a major collaboration with Huawei.

Huawei announced "full support" for the V4 models via its proprietary Ascend chips and supernode systems. Specifically, Huawei confirmed that its newest Ascend 950PR and 950DT chips achieved critical “day zero” adaptation. This means Huawei's hardware was ready to serve the DeepSeek V4 model for inference (the process of a trained model generating responses) the moment the software went live.

In the context of strict US export controls that restrict the flow of top-tier Nvidia GPUs to China, this level of domestic hardware-software synergy is being viewed as a major breakthrough by Chinese state media. Yuyuan Tantian, an influential social media account operated by state broadcaster CCTV, heralded the Ascend-compatible models as a definitive “signal of advancement in software-hardware collaboration.”

The state-backed commentary also offered a surprisingly candid assessment of the current hardware reality, acknowledging the gap with Western technology while highlighting a strategic pivot: "Although China is currently trailing in process nodes and single-card performance, we will explore new solutions under existing constraints by leveraging system design, cluster architecture, hardware-software synergy, and power efficiency."

The Inference vs. Training Debate: What Lies Ahead?

For tech analysts in the US, the critical question remains: were Huawei’s chips used only for inference, or can they now handle the far more demanding process of training a 1.6-trillion-parameter model?

DeepSeek has not disclosed the exact hardware stack used to train V4. However, its technical report noted that the “kernels” (the low-level routines that run computations on accelerators such as graphics processing units, or GPUs) were adapted for both Nvidia and Huawei chips.

According to a Friday report by Reuters, there are industry claims that Huawei chips were indeed used for at least a portion of the V4 Flash’s training process, a task that fundamentally requires more advanced computational power than everyday inference. When pressed on these claims over the weekend by the South China Morning Post, Huawei declined to comment.

Hing Shing Leung, a prominent equity analyst based in Hong Kong, weighed in on the uncertainty. While stating he specifically “couldn’t find any proof that Ascend was used to train V4 Flash,” Leung emphasized that DeepSeek’s technical documentation reveals a rapidly deepening integration between the software developer and the hardware giant.

This growing partnership, Leung noted, serves as a strong “possible indication that the Ascend 950 will be used to train its model in the future.”

Implications for the US Tech Landscape

For the American technology sector, the OpenClaw and DeepSeek V4 developments offer a dual narrative. On the software side, US developers benefit from the release of incredibly powerful, open-source AI models that can be freely integrated into applications, driving down costs and spurring innovation in the autonomous agent space.

On the hardware and geopolitical side, the integration of these models with Huawei’s Ascend architecture suggests that US export restrictions are accelerating the maturation of China's domestic semiconductor ecosystem. As AI systems come to depend less on raw single-card computing power and more on cluster architecture and optimized algorithms, the global AI race is shifting from a pure hardware sprint to a marathon of efficiency.

As OpenClaw users begin to automate their workflows and bring the agent into Google Meet with DeepSeek V4 this week, they are, perhaps unknowingly, participating in the next chapter of global technological competition.
