OpenAI Supercharges AI Development: A Deep Dive into the Updated Agents SDK and Secure Sandbox Execution

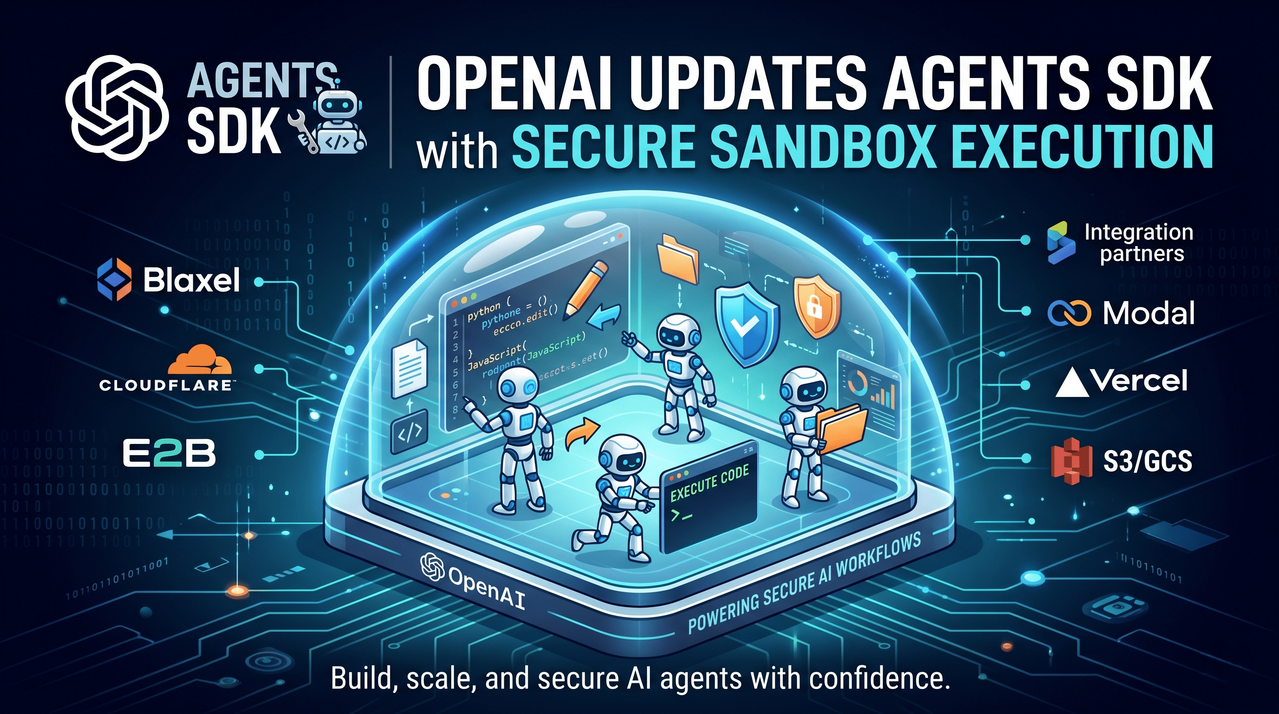

Artificial intelligence is shifting from passive, conversational models to autonomous agents that execute complex, multi-step workflows. Turning a conceptual prototype into a secure, production-ready enterprise application has long been a major hurdle for developers. OpenAI has now addressed this friction with a major update to its Agents SDK, which introduces standardized infrastructure for building agents that inspect files, execute commands, edit code, and manage intricate tasks inside controlled sandbox environments.

This comprehensive report breaks down the architectural advancements, security enhancements, and enterprise implications of the newly updated OpenAI Agents SDK.

The Evolution of Agentic Workflows: Moving from Prototype to Production

To understand the significance of this update, it is crucial to examine the limitations of existing AI deployment systems. As engineering teams transition their AI agents from local prototypes to scaled production environments, they frequently encounter architectural bottlenecks.

Historically, developers have had to choose between three imperfect paradigms:

- Model-Agnostic Frameworks: While these offer high flexibility and avoid vendor lock-in, they frequently fail to fully leverage the cutting-edge, native capabilities of frontier models.

- Model-Provider SDKs: These keep developers closer to the model's core logic but often restrict visibility into the underlying harness, making debugging and custom orchestration difficult.

- Managed Agent APIs: These platforms drastically simplify the deployment process but impose strict limitations on where the agents can run, how they process telemetry, and, crucially, how they access sensitive, proprietary enterprise data.

The updated OpenAI Agents SDK aims to remove these trade-offs by offering a unified, model-native harness that integrates with tools, files, and execution environments without sacrificing developer visibility or data security.

Unpacking the New Harness Architecture

At the core of this update is a revamped model-native harness. The architecture is designed to keep agents closely aligned with the model's natural operating patterns, with the goal of improving reliability for long-running, coordinated tasks that span multiple tools and external systems.

By providing standardized integrations with common agent system primitives, the harness allows engineering teams to focus their resources on writing domain-specific business logic rather than burning cycles building foundational infrastructure from scratch.

According to OpenAI, the harness introduces an impressive suite of configurable primitives:

"These primitives include tool use via MCP (Model Context Protocol), progressive disclosure via skills, custom instructions via AGENTS.md, code execution using the shell tool, file edits using the apply_patch tool, and more."

Key features of the new harness architecture include:

- Configurable Memory Systems: Allowing agents to maintain context over highly extended operational timeframes.

- Sandbox-Aware Orchestration: Letting agents account for the boundaries and capabilities of their execution environments.

- Advanced Filesystem Tools: Bringing capabilities similar to OpenAI's Codex directly into the agent's native workflow, allowing for seamless file manipulation and code refactoring.

Enterprise Validation: The LexisNexis Use Case

The enterprise impact of this unified framework is already being realized by industry leaders dealing with highly sensitive, complex data workflows.

Min Chen, Chief AI Officer at LexisNexis, highlighted the transformative nature of the SDK for the legal sector:

"The OpenAI Agents SDK has enabled complex legal drafting and workflows by providing a unified framework with built-in safeguards and secure, isolated environments for data processing and code execution. This allows teams to focus on developing high-value, long-running legal agents rather than building agent infrastructure from scratch."

Native Sandbox Execution: Security Meets Portability

Perhaps the most anticipated feature of this update is the native sandbox execution layer. Running AI-generated code autonomously carries significant risk. In the past, developers had to build their own isolated virtual machines or containers to prevent agents from compromising host systems, whether by accident or through malicious input.

The updated Agents SDK addresses this by building sandbox execution directly into the workflow. Agents run in controlled, isolated environments with explicitly granted access to specific files, tools, and dependencies. Developers can connect their own sandbox infrastructure or use the built-in integrations with cloud compute and sandbox providers, including:

- Blaxel

- Cloudflare

- Daytona

- E2B

- Modal

- Runloop

- Vercel
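The idea of "explicitly granted access to specific files" can be illustrated with a small allowlist check. This is a minimal sketch under stated assumptions, not the Agents SDK's API: `SandboxPolicy`, `can_read`, `can_write`, and the `/workspace/...` paths are hypothetical names chosen for illustration.

```python
import posixpath
from pathlib import PurePosixPath


class SandboxPolicy:
    """Hypothetical allowlist of sandbox paths; not part of the Agents SDK."""

    def __init__(self, readable, writable):
        self.readable = [PurePosixPath(p) for p in readable]
        self.writable = [PurePosixPath(p) for p in writable]

    @staticmethod
    def _inside(path, roots):
        # Collapse "." and ".." first so "/workspace/output/../../etc" cannot slip through.
        p = PurePosixPath(posixpath.normpath(path))
        return any(p == root or root in p.parents for root in roots)

    def can_read(self, path):
        # Anything writable is also readable in this sketch.
        return self._inside(path, self.readable + self.writable)

    def can_write(self, path):
        return self._inside(path, self.writable)


policy = SandboxPolicy(
    readable=["/workspace/input"],
    writable=["/workspace/output"],
)
```

With this policy, `policy.can_write("/workspace/output/draft.md")` is allowed, while a traversal attempt such as `policy.can_write("/workspace/output/../../etc/passwd")` is rejected because the path is normalized before it is compared against the granted roots. A real sandbox enforces this at the operating system or container level; the check here only shows the access model.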

The Manifest Abstraction: Redefining Workspace Portability

To ensure that these execution environments remain truly portable across different cloud providers, OpenAI has introduced the Manifest abstraction. This acts as a standardized blueprint for the agent’s workspace.

Through the Manifest, developers can dynamically mount local files, strictly define output directories, and establish secure connections to major enterprise storage providers, including:

- AWS S3

- Google Cloud Storage (GCS)

- Azure Blob Storage

- Cloudflare R2

This design provides a consistent way to define the agent's environment. It gives the underlying models a predictable workspace with clearly defined inputs, outputs, and directory structure, which long-running, asynchronous tasks depend on.
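To make the blueprint idea concrete, the following sketch models a workspace description as plain data. It is an illustration only and does not reproduce the SDK's actual Manifest class: `WorkspaceManifest`, `Mount`, `add_mount`, and the bucket and path names are all assumptions.

```python
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class Mount:
    source: str            # local path or storage URI, e.g. "s3://bucket/prefix"
    target: str            # path the agent sees inside the sandbox
    read_only: bool = True


@dataclass
class WorkspaceManifest:
    """Hypothetical stand-in for a workspace blueprint; not the SDK's Manifest."""

    output_dir: str
    mounts: list = field(default_factory=list)

    def add_mount(self, source, target, read_only=True):
        # Two sources mapped to one target would make the workspace ambiguous.
        if any(m.target == target for m in self.mounts):
            raise ValueError(f"target already mounted: {target}")
        self.mounts.append(Mount(source, target, read_only))
        return self

    def to_dict(self):
        # A plain dict can be serialized and handed to any sandbox provider.
        return {
            "output_dir": self.output_dir,
            "mounts": [asdict(m) for m in self.mounts],
        }


manifest = (
    WorkspaceManifest(output_dir="/workspace/output")
    .add_mount("./contracts", "/workspace/input/contracts")
    .add_mount("s3://example-bucket/precedents", "/workspace/input/precedents")
)
```

Keeping the description declarative is what makes it portable: the same `manifest.to_dict()` payload could, in principle, be interpreted by different providers, each mapping the mounts onto its own storage mechanism.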

Designing for Resilience: Security, Reliability, and Scaling

In the modern threat landscape, AI systems must be designed under the assumption that they will face prompt injection attacks and data exfiltration attempts. OpenAI has engineered the updated SDK with a rigorous "security-first" philosophy.

Credential Separation: By strictly separating the execution harness from the underlying compute layers, the SDK ensures that sensitive API keys, database credentials, and system tokens are kept entirely outside of the model-generated execution environments. Even if an agent is compromised via a sophisticated prompt injection, the attacker cannot easily extract host credentials.
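The principle can be sketched with the standard library alone: the process that holds secrets launches model-generated code with an environment built from an allowlist, so secrets are never copied in. This is an assumption-laden illustration, not how the SDK implements separation. `SAFE_ENV_KEYS`, `scrubbed_env`, `run_untrusted`, and `EXAMPLE_API_KEY` are hypothetical, and a bare subprocess is not a real sandbox.

```python
import os
import subprocess
import sys

# Only these variables are copied into the child process (illustrative allowlist).
SAFE_ENV_KEYS = ("PATH", "LANG", "HOME", "SYSTEMROOT")


def scrubbed_env(parent_env):
    """Build the child environment from an allowlist instead of removing known secrets."""
    return {k: v for k, v in parent_env.items() if k in SAFE_ENV_KEYS}


def run_untrusted(code, parent_env):
    """Run a code string in a child interpreter that cannot see the parent's secrets."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        env=scrubbed_env(parent_env),
        capture_output=True,
        text=True,
        timeout=30,
    )
    return result.stdout.strip()


# The harness side holds a (fake) credential.
parent = dict(os.environ, EXAMPLE_API_KEY="sk-not-a-real-key")

# Stand-in for model-generated code that tries to read the credential.
probe = "import os; print(os.environ.get('EXAMPLE_API_KEY', 'not visible'))"
leaked = run_untrusted(probe, parent)
```

An allowlist is the safer default here: a denylist only protects the secrets someone remembered to name, while an allowlist excludes every variable that was not deliberately passed through.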

Statefulness and Rehydration: Reliability at scale comes from externalized agent state. If a sandbox container crashes or is lost to a server failure, the agent's run does not fail permanently. Instead, the Agents SDK uses built-in snapshotting and rehydration to restore the agent's state in a new container and resume the workload from the last checkpoint.
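The checkpoint-and-resume pattern described above can be sketched as follows. This is a minimal illustration that assumes state is a small JSON document on disk; the SDK's own snapshot format and storage are not described in the announcement, and `save_snapshot`, `load_snapshot`, and `run_steps` are hypothetical helpers.

```python
import json
import os
import tempfile


def save_snapshot(path, state):
    # Write to a temp file and rename, so a crash never leaves a half-written snapshot.
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)


def load_snapshot(path):
    # No snapshot yet means a fresh run.
    if not os.path.exists(path):
        return {"next_step": 0, "results": []}
    with open(path) as f:
        return json.load(f)


def run_steps(steps, path, crash_at=None):
    """Run steps in order, checkpointing after each one; resume from the snapshot."""
    state = load_snapshot(path)
    for i in range(state["next_step"], len(steps)):
        if i == crash_at:
            raise RuntimeError("simulated sandbox loss")
        state["results"].append(steps[i]())
        state["next_step"] = i + 1
        save_snapshot(path, state)
    return state["results"]


steps = [lambda: "inspect", lambda: "patch", lambda: "test"]
snapshot_path = os.path.join(tempfile.mkdtemp(), "agent_state.json")

try:
    run_steps(steps, snapshot_path, crash_at=2)   # first "container" dies before step 2
except RuntimeError:
    pass

results = run_steps(steps, snapshot_path)          # new "container" picks up at step 2
```

The second call does not repeat the first two steps, because the snapshot lives outside the process that crashed. Storing state outside the sandbox is the property that makes the container itself disposable.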

Parallel Execution: For large workloads, agent runs are no longer confined to a single linear process. Developers can configure agents to use multiple sandboxes at once, starting new isolated environments on demand, routing specific subagents to specialized containers, and running distinct tasks in parallel to reduce overall wall-clock time.
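A fan-out of independent tasks across isolated workers can be sketched with `asyncio`. The sandboxes here are simulated with a short sleep, and `run_in_sandbox` and `fan_out` are hypothetical names, so this shows the concurrency shape rather than the SDK's interface.

```python
import asyncio


async def run_in_sandbox(sandbox_id, task):
    # Stand-in for dispatching work to a remote sandbox and awaiting its result.
    await asyncio.sleep(0.01)
    return f"{sandbox_id}: {task} done"


async def fan_out(tasks):
    # One simulated sandbox per task; gather returns results in submission order.
    coros = [run_in_sandbox(f"sandbox-{i}", t) for i, t in enumerate(tasks)]
    return await asyncio.gather(*coros)


outputs = asyncio.run(fan_out(["lint", "unit tests", "docs"]))
```

Because the three simulated tasks wait concurrently, the total time is close to that of the slowest task rather than the sum of all three. The gain applies only when the tasks are independent of one another.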

Availability and Future Roadmap

The new harness and sandbox capabilities are launching first for Python, reflecting its dominance in the AI engineering ecosystem. However, OpenAI has confirmed that comprehensive TypeScript support is already slated for a future release, ensuring web and full-stack developers can seamlessly integrate these tools into Node.js and frontend-adjacent environments.

Looking ahead, OpenAI has teased additional powerful features currently in active development for both languages, including a dedicated Code Mode for enhanced software engineering tasks and advanced Subagent orchestration for complex, hierarchical task delegation.

These updated Agents SDK capabilities are generally available right now via the OpenAI API. In a move that favors predictable developer costs, the service follows standard API pricing, billed transparently based on token consumption and tool usage.

By standardizing the infrastructure required for secure, capable, and scalable AI agents, OpenAI is not just updating an SDK; it is laying the foundational plumbing for the next stage of enterprise automation.
