Google Gemma 4: The Massive April 2nd Drop That Redefines Open-Source AI

The landscape of open-source artificial intelligence just experienced a seismic shift. On April 2, 2026, Google officially released Gemma 4, a family of four open models built on the revolutionary Gemini 3 architecture.

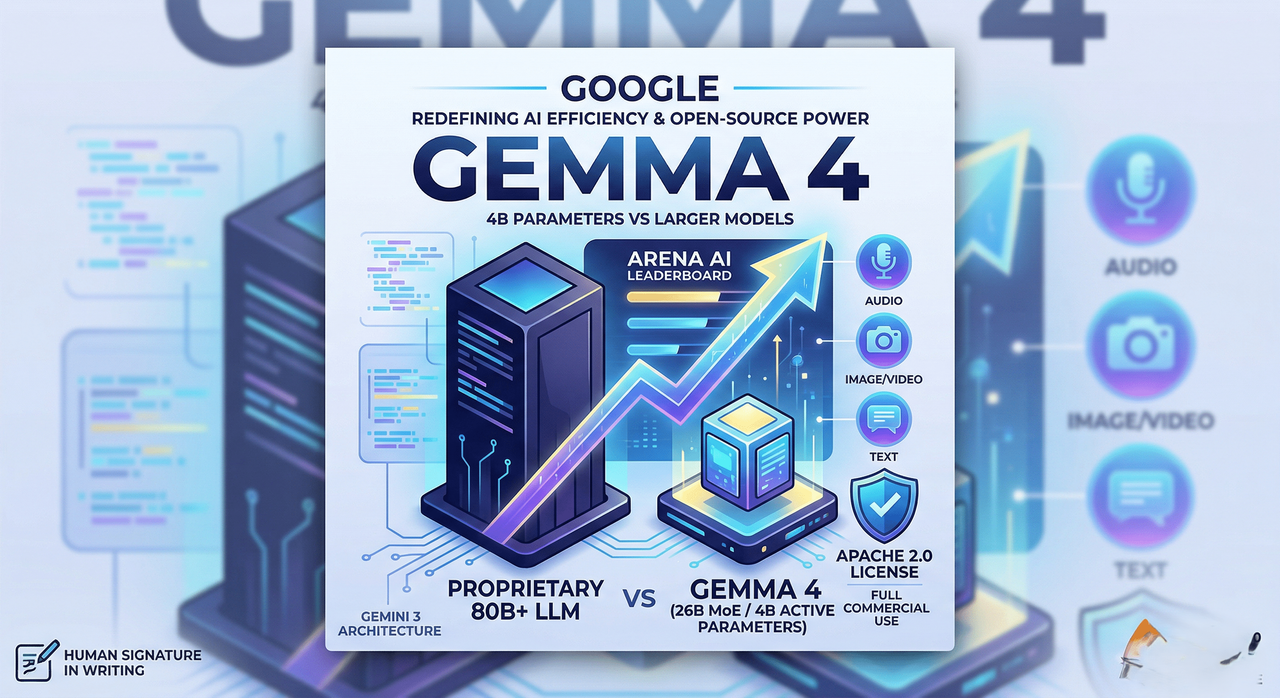

For those of us following the industry, this isn't just another incremental update. This is Google planting a flag in the ground for the "open" movement. By shipping these models under a genuine Apache 2.0 license, Google has removed the fine-print shackles that have plagued other "open" releases, offering full commercial freedom to developers and enterprises alike.

What makes Gemma 4 truly startling isn't just the license; it’s the efficiency. One of the models, the 26B MoE, activates only 4 billion parameters per token, yet it currently outperforms models 20 times its size on the Arena AI leaderboard. Here is a deep dive into the architecture, performance, and implications of the Gemma 4 release.

The Gemma 4 Lineup: Power for Every Tier

Google has optimized this release for four distinct compute tiers, ensuring that whether you are running a massive server farm or a Raspberry Pi, there is a Gemma 4 variant for you.

1. The 31B Dense Model: The Heavyweight

At the top of the stack is the 31B Dense model. Boasting 31 billion parameters and a massive 256K context window, this model currently sits at number three among all open models on the Arena AI text leaderboard with a score of approximately 1452. It is designed for the most demanding reasoning tasks where depth of knowledge is paramount.

2. The 26B MoE: The Headline Act

Perhaps the most impressive feat of engineering in this release is the 26B Mixture of Experts (MoE) model. It has 26 billion total parameters but activates only 4 billion of them for each token during inference. That lets it reach a leaderboard score of 1441 while using only a fraction of the compute its peers require. Google claims this model matches the performance of a flagship model like GLM-5 with one-thirtieth as many active parameters.
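The article does not describe how Gemma 4 routes tokens to experts. The sketch below assumes the common top-k softmax router used in most MoE transformers, with a made-up expert count and toy experts, purely to show why per-token compute tracks active rather than total parameters.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the selected experts' outputs.

    Experts that are not selected are never called, which is why per-token
    compute depends on the active, not the total, parameter count.
    """
    out = [0.0] * len(x)
    for idx, weight in top_k_route(router_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Hypothetical toy experts: expert s simply scales its input by s.
experts = [lambda x, s=s: [s * v for v in x] for s in range(8)]
print(moe_forward([1.0, 2.0], experts, [0, 0, 5, 5, 0, 0, 0, 0], k=2))  # prints [2.5, 5.0]

# Active-parameter share quoted in the article: 4B of 26B.
print(f"active share: {4 / 26:.1%}")  # prints active share: 15.4%
```

Only two of the eight toy experts run for this token; the other six contribute memory but no compute, which is the trade-off the 26B MoE exploits.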

3. The Edge Champions: E4B and E2B

For local and mobile deployment, Google introduced the E4B (8B total, 4.5B effective) and the E2B (5.1B total, 2.3B effective). Both support 128K context windows and are designed to run on devices like the Jetson Nano or high-end smartphones with near-zero latency.
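As a rough sanity check on running these models on a phone, the weight footprint is the parameter count times bits per parameter, divided by 8. This sketch covers weights only (no KV cache, activations, or per-block quantization scales), and because the article does not say which of the two parameter counts must sit in device memory, it prints both.

```python
def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate storage for model weights alone, in GB (10**9 bytes)."""
    return params_billion * 1e9 * bits_per_param / 8 / 1e9

# Parameter counts (in billions) as quoted above.
EDGE_MODELS = {
    "E2B": {"total": 5.1, "effective": 2.3},
    "E4B": {"total": 8.0, "effective": 4.5},
}

for name, counts in EDGE_MODELS.items():
    for kind, params in counts.items():
        sizes = ", ".join(
            f"{bits}-bit {weight_memory_gb(params, bits):.2f} GB" for bits in (16, 8, 4)
        )
        print(f"{name} ({kind}, {params}B): {sizes}")
```

At 4 bits, for example, the full 5.1B parameters of the E2B come to about 2.55 GB of weights.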

Architectural Innovations: Under the Hood

The reason Gemma 4 "punches above its weight" comes down to three specific architectural breakthroughs that deviate from standard transformer designs.

Per-Layer Embeddings (PLE)

In a traditional decoder, the token embedding enters once at the bottom of the stack, and later layers see the token's identity only through whatever survives in the residual stream. Gemma 4 adds a second embedding table that feeds a residual signal directly into every decoder layer. This lower-dimensional conditioning pathway gives each layer direct access to the original token identity. It adds almost no compute cost but results in a measurable jump in output quality.
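A minimal sketch of the idea, under the simplest reading of the description above: each layer looks up a small per-token vector from its own slice of a second embedding table, projects it up to the residual width, and adds it to the residual stream. All sizes, the random weights, and the exact point where the signal is added are hypothetical, not Gemma 4's actual design.

```python
import random

# Toy sizes; all hypothetical.
D_MODEL = 8      # residual stream width
D_PLE = 2        # width of the low-dimensional per-layer signal
VOCAB = 16
NUM_LAYERS = 4

rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def add(a, b):
    return [x + y for x, y in zip(a, b)]

token_embedding = rand_matrix(VOCAB, D_MODEL)
# Second embedding table: one small vector per (layer, token) pair.
per_layer_embedding = [rand_matrix(VOCAB, D_PLE) for _ in range(NUM_LAYERS)]
# Per-layer projection from D_PLE up to D_MODEL.
up_proj = [rand_matrix(D_MODEL, D_PLE) for _ in range(NUM_LAYERS)]
# Stand-in for each layer's attention + MLP block.
block = [rand_matrix(D_MODEL, D_MODEL) for _ in range(NUM_LAYERS)]

def forward(token_id, use_ple=True):
    h = token_embedding[token_id]
    for layer in range(NUM_LAYERS):
        h = add(h, matvec(block[layer], h))  # ordinary residual update
        if use_ple:
            # Re-inject the token's identity directly into this layer's residual.
            signal = per_layer_embedding[layer][token_id]
            h = add(h, matvec(up_proj[layer], signal))
    return h

# Extra multiply-adds per layer: D_MODEL * D_PLE vs D_MODEL * D_MODEL for the block.
print(D_MODEL * D_PLE, "vs", D_MODEL * D_MODEL)
```

The extra work per layer is a table lookup plus a narrow projection, which is why the pathway is cheap relative to attention and the MLP.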

Shared KV Cache

To manage the massive 256K context window on consumer hardware, Google implemented a Shared KV Cache. The final "n" layers of the model reuse key-value states from earlier, non-shared layers instead of computing and storing their own. Because cache memory scales with the number of layers that keep their own keys and values, this sharply cuts memory use during inference. It’s the "secret sauce" that allows a 256K context to fit on hardware that previously would have buckled under such a large memory footprint.
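The memory effect is easy to estimate. This sketch assumes the final `num_shared` layers all read the cache of the last non-shared layer (the real mapping may differ) and uses a made-up layer count, head count, and head size rather than Gemma 4's published configuration.

```python
def kv_source_layers(num_layers: int, num_shared: int) -> list[int]:
    """Map each layer index to the layer whose K/V cache it reads.

    Assumption: the last `num_shared` layers all reuse the cache of the
    last non-shared layer.
    """
    first_shared = num_layers - num_shared
    if first_shared < 1:
        raise ValueError("need at least one layer that computes its own K/V")
    return [i if i < first_shared else first_shared - 1 for i in range(num_layers)]

def kv_cache_bytes(layers_with_cache: int, seq_len: int, num_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Cache size: keys + values (factor 2) for every cached layer and token."""
    return 2 * layers_with_cache * seq_len * num_kv_heads * head_dim * bytes_per_value

# Hypothetical configuration, not Gemma 4's actual numbers.
NUM_LAYERS, NUM_SHARED = 48, 16
SEQ_LEN, KV_HEADS, HEAD_DIM = 256 * 1024, 8, 128

sources = kv_source_layers(NUM_LAYERS, NUM_SHARED)
cached_layers = len(set(sources))  # only layers that own a cache cost memory
full = kv_cache_bytes(NUM_LAYERS, SEQ_LEN, KV_HEADS, HEAD_DIM)
shared = kv_cache_bytes(cached_layers, SEQ_LEN, KV_HEADS, HEAD_DIM)
print(f"no sharing: {full / 2**30:.0f} GiB, shared: {shared / 2**30:.0f} GiB")
```

With these illustrative numbers, sharing the last 16 of 48 layers shrinks a 256K-token bf16 cache from 48 GiB to 32 GiB.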

Variable Aspect Ratio Vision Encoder

Unlike previous models that forced images into a square crop, the Gemma 4 vision encoder supports variable aspect ratios. Developers can now configure image token budgets—anywhere from 70 to 1,120 tokens. This allows a user to trade processing speed for visual detail on the fly, depending on the complexity of the image being analyzed.
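One way to honor both an aspect ratio and a token budget is to pick a patch grid whose shape follows the image and whose cell count stays under the budget. The sketch assumes one token per patch and a simple floor-based tiling; it illustrates the trade-off, not how Gemma 4's encoder actually tiles images.

```python
import math

def patch_grid(width: int, height: int, token_budget: int) -> tuple[int, int]:
    """Choose a (rows, cols) patch grid that roughly keeps the image's aspect
    ratio while using at most `token_budget` tokens (one token per patch,
    an assumption)."""
    if width <= 0 or height <= 0 or token_budget < 1:
        raise ValueError("width, height and token_budget must be positive")
    aspect = width / height
    # Real-valued ideal: rows * cols == budget and cols / rows == aspect.
    rows = min(token_budget, max(1, math.floor(math.sqrt(token_budget / aspect))))
    # Clamp cols so rows * cols never exceeds the budget.
    cols = max(1, min(math.floor(math.sqrt(token_budget * aspect)), token_budget // rows))
    return rows, cols

for budget in (70, 1120):
    rows, cols = patch_grid(1600, 900, budget)
    print(f"budget {budget:4d}: {rows} x {cols} = {rows * cols} tokens")
```

For a 1600×900 image this yields a 6×11 grid at the 70-token end and a 25×44 grid at the 1,120-token end: same shape, roughly 17 times more visual detail.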

The Multimodal Edge: Audio is Now Local

While the larger 31B and 26B models focus on text, image, and video, the edge models (E2B and E4B) have received a unique superpower: Native Audio Support.

By utilizing a USM-style conformer encoder, these tiny models can handle real-time speech understanding, transcription, and audio-based question answering locally on a mobile device. We are talking about a model with only 2.3 billion effective parameters doing live speech-to-text without an internet connection. This is a massive win for privacy-centric applications and field-work AI.

For video, all models process frames directly. They require no explicit video training to understand temporal actions or extract information from clips. Because they were trained on over 140 languages from the start (rather than being fine-tuned for multilingualism later), their global utility is unparalleled in the open-source space.

Built for Agents: Native Function Calling

Gemma 4 isn't just designed to chat; it’s designed to act. Google has built Native Function Calling into the core of these models.

Forget about "prompt engineering hacks" or forcing models to stick to a grammar; Gemma 4 outputs structured JSON tool calls natively. Developers can define tools as JSON schemas and pass them through the chat template. The model then decides when and how to call those tools. It even supports multimodal tool responses, meaning a model can receive an image back from a tool call and continue its reasoning process based on that visual data.
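On the application side, the loop is: declare tools as JSON schemas, parse the JSON call the model emits, run the tool, and return the result as a tool message. The tool below is hypothetical, and the `{"name": ..., "arguments": {...}}` call shape is an assumption; the exact format Gemma 4 emits is defined by its chat template.

```python
import json

# Hypothetical tool, described with a JSON schema in the common
# {"type": "function", "function": {...}} layout.
GET_WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string", "description": "City name"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    },
}

def get_weather(city: str, unit: str = "celsius") -> dict:
    # Stub: a real tool would query a weather service.
    return {"city": city, "temperature": 21, "unit": unit}

SCHEMAS = {GET_WEATHER_TOOL["function"]["name"]: GET_WEATHER_TOOL}
IMPLEMENTATIONS = {"get_weather": get_weather}

def dispatch_tool_call(raw_call: str) -> dict:
    """Run one JSON tool call and wrap the result as a tool message."""
    call = json.loads(raw_call)
    name, args = call["name"], call.get("arguments", {})
    if name not in SCHEMAS:
        raise KeyError(f"unknown tool: {name}")
    required = SCHEMAS[name]["function"]["parameters"].get("required", [])
    missing = [param for param in required if param not in args]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    result = IMPLEMENTATIONS[name](**args)
    # The tool message goes back into the conversation for the next model turn.
    return {"role": "tool", "name": name, "content": json.dumps(result)}

# Example: a tool call the model might emit.
print(dispatch_tool_call('{"name": "get_weather", "arguments": {"city": "Paris"}}'))
```

With Hugging Face `transformers`, a tool list like this can be passed to `tokenizer.apply_chat_template(messages, tools=[...])`; whether Gemma 4's template renders it exactly this way is an assumption worth checking against the model card.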

Deployment: Getting Gemma 4 Running

Google and the community have ensured that Gemma 4 is accessible from day one.

- llama.cpp: The fastest path for local inference. On Mac, a simple `brew install` lets you run the quantized 26B model as an OpenAI-compatible API on localhost.

- Hugging Face: All four variants are live. For chat and agent capabilities, look for the `-it` (instruction-tuned) versions.

- Apple Silicon (MLX): For Mac users, MLX provides native Metal acceleration, letting the E2B model run in under 6GB of memory.

- Google AI Studio: If you don't want to deal with local setup, the 31B and 26B models are available for browser-based testing immediately.
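Because the llama.cpp route exposes an OpenAI-compatible HTTP endpoint, a standard-library client is enough to talk to it. This sketch assumes `llama-server` is listening on its default port 8080 and uses a placeholder model name; check both against your own setup.

```python
import json
import urllib.request

# Assumptions: llama-server on its default port and a placeholder model name.
BASE_URL = "http://localhost:8080/v1"
MODEL = "gemma-4-26b"  # hypothetical; use the name your server reports

def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.7,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def chat(prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Once the server is running, `chat("Summarize the Apache 2.0 license in one sentence.")` returns the model's reply. Any OpenAI-compatible client library pointed at the same base URL should work too.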

The Bottom Line: Who Is This For?

Gemma 4 represents a "no-compromise" approach to open-source AI.

- For Enterprises: The Apache 2.0 license ensures digital sovereignty. You can keep your data and inference on-premises without licensing headaches.

- For Developers: Native agentic workflows and function calling make it a drop-in local model for agent frameworks like Hermes or Open Code.

- For Researchers: The new Per-Layer Embedding (PLE) architecture and open weights provide a playground for pushing the boundaries of what small models can achieve.

Google Gemma 4 proves that "open" and "capable" are no longer mutually exclusive. By shrinking the gap between massive proprietary models and efficient open-weight models, Google has effectively changed the math on AI development for 2026 and beyond. If you’re looking for a model that is open, multimodal, and shockingly efficient, the wait is over.
