The 90% Fallacy: Why Google’s AI Overviews Are Telling Millions of "Lies" Every Hour

In the fast-paced digital landscape of 2026, we have reached a point where we no longer "search" for information; we ask for it. At the heart of this transformation is Google’s AI Overviews, the Gemini-powered interface that has become the de facto front door of the internet. However, a startling new report suggests that while the technology is more advanced than ever, its relationship with the truth remains complicated—and potentially dangerous.

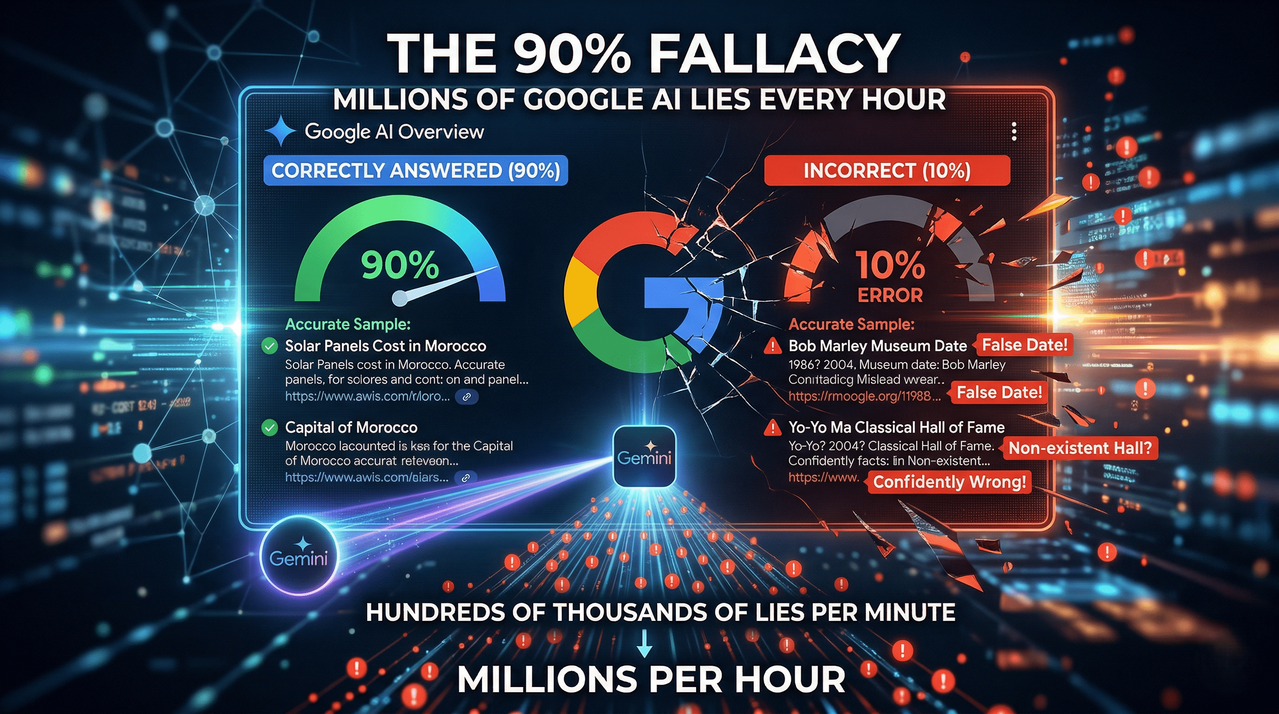

A recent deep-dive analysis conducted by The New York Times, in collaboration with the AI evaluation startup Oumi, has revealed a sobering statistic. Google's AI Overviews answered roughly 90% of test questions correctly, but the remaining 10% translates into an astronomical volume of misinformation. Scaled across billions of daily searches, that error rate means Google may be broadcasting millions of "hallucinations," or flat-out lies, every hour.

The Benchmark Battle: From Gemini 2.5 to Gemini 3

To understand how we got here, we have to look at the metrics. The study utilized SimpleQA, a rigorous factuality benchmark released by OpenAI back in 2024. SimpleQA consists of over 4,000 questions with strictly verifiable answers, designed to trap AI models that prefer "sounding" right over "being" right.
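To see why a benchmark like SimpleQA rewards "being" right over "sounding" right, it helps to look at how answers are scored. The sketch below is a minimal Python illustration, assuming the three-way grading described in OpenAI's SimpleQA paper (each answer is graded correct, incorrect, or not attempted) and its summary metrics. The article does not say exactly which metric the Oumi analysis reported, and the function name and the two example models are hypothetical.

```python
from collections import Counter

# Three-way grading as described for SimpleQA.
CORRECT = "correct"
INCORRECT = "incorrect"
NOT_ATTEMPTED = "not_attempted"


def simpleqa_style_metrics(grades: list[str]) -> dict[str, float]:
    """Summarise graded answers in the style of SimpleQA's reported metrics.

    overall_correct: share of all questions answered correctly.
    correct_given_attempted: accuracy on the questions the model chose to answer.
    f_score: harmonic mean of the two.
    """
    unknown = set(grades) - {CORRECT, INCORRECT, NOT_ATTEMPTED}
    if unknown:
        raise ValueError(f"unknown grade labels: {sorted(unknown)}")
    counts = Counter(grades)
    total = len(grades)
    attempted = counts[CORRECT] + counts[INCORRECT]
    overall = counts[CORRECT] / total if total else 0.0
    given_attempted = counts[CORRECT] / attempted if attempted else 0.0
    denom = overall + given_attempted
    f_score = 2 * overall * given_attempted / denom if denom else 0.0
    return {
        "overall_correct": overall,
        "correct_given_attempted": given_attempted,
        "f_score": f_score,
    }


# Two hypothetical models, 100 questions each.
always_answers = [CORRECT] * 85 + [INCORRECT] * 15
sometimes_abstains = [CORRECT] * 80 + [INCORRECT] * 5 + [NOT_ATTEMPTED] * 15

for name, grades in [("always answers", always_answers),
                     ("sometimes abstains", sometimes_abstains)]:
    m = simpleqa_style_metrics(grades)
    print(f"{name}: {m['overall_correct']:.1%} correct overall, "
          f"{m['correct_given_attempted']:.1%} when attempted, "
          f"F = {m['f_score']:.3f}")
```

In this toy example the second model gets fewer questions right overall (80 versus 85) but gives only 5 wrong answers instead of 15, and it edges ahead on F-score. Declining to guess when unsure is exactly the behavior missing from the Bob Marley example later in this piece.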

According to the data provided by Oumi, the progress of Google’s models is visible but perhaps insufficient for a global search engine:

- Gemini 2.5 (Late 2025): Recorded an accuracy rate of approximately 85%.

- Gemini 3 (Current 2026): Showed a notable improvement, hitting a 91% accuracy mark.

On paper, a 91% success rate sounds like an "A" grade. But in the context of a search engine that serves as the world’s primary source of truth, a 9% failure rate is catastrophic. If you extrapolate these misses across the sheer volume of global traffic, AI Overviews are generating tens of millions of incorrect answers every day.
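The extrapolation is simple multiplication, and a quick sketch makes the scale concrete. The daily answer volume used here is a purely hypothetical round number chosen for illustration, not a figure from Google, the Times, or Oumi; only the 91% accuracy comes from the study. The function name is likewise hypothetical.

```python
def extrapolate_errors(answers_per_day: float, accuracy: float) -> dict[str, float]:
    """Scale a benchmark accuracy rate to an absolute number of wrong answers.

    Assumes benchmark accuracy carries over unchanged to real search traffic,
    which is itself a simplification (and the crux of Google's rebuttal).
    """
    if answers_per_day < 0:
        raise ValueError("answers_per_day must be non-negative")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    errors_per_day = answers_per_day * (1.0 - accuracy)
    return {
        "per_day": errors_per_day,
        "per_hour": errors_per_day / 24,
        "per_minute": errors_per_day / (24 * 60),
    }


# Hypothetical volume: one billion AI Overview answers per day.
HYPOTHETICAL_DAILY_ANSWERS = 1_000_000_000

for label, count in extrapolate_errors(HYPOTHETICAL_DAILY_ANSWERS, accuracy=0.91).items():
    print(f"{label:>10}: {count:,.0f} wrong answers")
```

Under that assumed volume, a 9% miss rate works out to about 90 million wrong answers a day, 3.75 million an hour, or 62,500 a minute. Even at a tenth of the hypothetical volume, the result would still be millions of wrong answers a day.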

When "Confidence" Trumps "Fact"

The report highlights several glaring examples of where the AI stumbles, often with a level of confidence that makes the error hard to spot for the average user.

One specific test involved the history of Bob Marley’s former home and its conversion into a museum. Despite citing three different sources—including Wikipedia—the AI Overview struggled with conflicting dates. Rather than admitting uncertainty or presenting the debate, the system confidently selected the incorrect year.

Even more bizarre was the query regarding legendary cellist Yo-Yo Ma. While the AI correctly identified his induction into the Classical Music Hall of Fame (even citing the organization's own website), it simultaneously claimed in the text of the overview that the Classical Music Hall of Fame does not exist. It is this kind of internal logic collapse that keeps researchers awake at night: a system that can see the truth and deny it in the same paragraph.

The Corporate Rebuttal: Is the Test Flawed?

Google has not taken these findings lying down. Google spokesperson Ned Adriance issued a sharp critique of the Times report, suggesting that the SimpleQA benchmark itself is "full of holes."

Google's argument is twofold. First, the company claims that the questions in SimpleQA do not reflect "real-world" search behavior. In other words, people don't usually turn to Google for obscure trivia that demands pinpoint chronological accuracy; they use it for recipes, shopping, and local services. Second, Google prefers its own benchmark, SimpleQA Verified, which uses a smaller, more heavily vetted set of questions.

However, critics argue that this is a deflection. If a search engine can’t get a verifiable date right, how can it be trusted to summarize complex medical advice or financial news?

The Hidden Architecture: Speed vs. Truth

One of the most revealing aspects of this report is the glimpse it provides into Google’s internal trade-offs. AI Overviews are not a single, monolithic entity. As Google confirmed, the system uses the "right model for the right query."

Gemini 3 Pro, the flagship model, is highly accurate, but it is also computationally expensive and slow. To keep the search page feeling "snappy," Google often defaults to Gemini 3 Flash, which is much faster but lacks the deeper reasoning capabilities of its larger sibling.

This creates a "speed tax" on truth. Users receive a summary instantly, but that summary is more likely to be a hallucination because the system prioritized low latency over high-fidelity fact-checking.
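The "speed tax" can be expressed as a weighted average: the overall error rate is each model's error rate weighted by the share of queries routed to it. The sketch below uses hypothetical per-model error rates and traffic shares, since the report, as described here, does not say how queries are split between Gemini 3 Pro and Gemini 3 Flash or how each model scores on its own. The function name is also hypothetical.

```python
from typing import Sequence, Tuple


def blended_error_rate(routes: Sequence[Tuple[float, float]]) -> float:
    """Return the traffic-weighted error rate across routed models.

    Each route is (traffic_share, error_rate); shares must sum to 1.
    """
    total_share = sum(share for share, _ in routes)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError(f"traffic shares must sum to 1, got {total_share}")
    return sum(share * error for share, error in routes)


# Hypothetical error rates, for illustration only.
PRO_ERROR, FLASH_ERROR = 0.06, 0.12

for flash_share in (0.2, 0.5, 0.8):
    rate = blended_error_rate([(1 - flash_share, PRO_ERROR),
                               (flash_share, FLASH_ERROR)])
    print(f"{flash_share:.0%} of queries on Flash -> {rate:.1%} wrong")
```

With these made-up numbers, moving from 20% to 80% of traffic on the faster model raises the blended error rate from 7.2% to 10.8%. The relationship is linear: every point of traffic shifted toward the cheaper, less accurate model adds its extra error to the total.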

The SEO and GEO Impact: Why This Matters for the Web

For digital marketers and SEO professionals, this report is a wake-up call. We are moving into a Generative Engine Optimization (GEO) era, where being the "first blue link" no longer guarantees traffic. If Google's AI summarizes your content, and does so incorrectly, it doesn't just rob you of a click; it can misrepresent your brand or data to millions of people.

Furthermore, the "grounding" of AI—connecting it to the live web—was supposed to be the cure for hallucinations. This study proves that grounding is not a silver bullet. The AI can "read" a webpage and still misunderstand the context, especially when faced with contradictory information or complex formatting.
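Grounding only retrieves sources; deciding what to do when they disagree is a separate step. The sketch below shows one hypothetical policy: surface the disagreement instead of silently picking a winner, which the Bob Marley example suggests the AI Overview did not do. It assumes the dates have already been extracted from each source (the hard part in practice), and the function name, source names, and years are placeholders, not the real dates from the Times test.

```python
from collections import Counter


def reconcile_year(claims: dict[str, int]) -> dict:
    """Summarise what grounded sources say about a single year-valued fact.

    claims maps a (hypothetical) source name to the year that source states.
    """
    if not claims:
        return {"status": "no_sources"}
    votes = Counter(claims.values())
    if len(votes) == 1:
        (year,) = votes  # exactly one distinct year across all sources
        return {"status": "agreed", "year": year}
    # Sources disagree: report every candidate year and which sources back it,
    # rather than confidently choosing one.
    backers = {
        year: sorted(src for src, y in claims.items() if y == year)
        for year in votes
    }
    return {"status": "conflict", "candidates": backers}


# Placeholder sources and years, for illustration only.
print(reconcile_year({"source_a": 2001, "source_b": 2003, "source_c": 2001}))
# {'status': 'conflict', 'candidates': {2001: ['source_a', 'source_c'], 2003: ['source_b']}}
```

A majority vote would have picked 2001 here, but agreement among sources is not proof, since web pages often copy one another. Reporting the conflict lets the reader weigh the evidence.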

Living with the "10% Lie"

As we move further into 2026, the small disclaimer beneath every AI Overview, warning that AI can make mistakes and that responses should be double-checked, is starting to feel less like a helpful tip and more like a legal shield.

The reality is that 90% accuracy is an incredible achievement for a generative model, but it may be an unacceptable standard for a search engine. As long as Google continues to push users toward AI-generated summaries instead of the original source links, the burden of fact-checking remains on the individual.

In the age of Gemini 3, we are learning a hard lesson: just because a machine speaks with the authority of the entire internet doesn't mean it’s telling the truth.

Author’s Note: As a writer who has watched the evolution of search from simple keywords to complex neural dialogues, I find the "90% trap" fascinating. We are so impressed by the 90% that is right that we overlook the 10% that could lead us astray. In our rush for convenience, we must be careful not to outsource our critical thinking to a model that is still, essentially, guessing the next most likely word.
