Claude Code Exposed: Anthropic’s "Human Error" and the Critical Security Lessons for Modern DevOps

In the fast-paced arms race of artificial intelligence, companies like Anthropic are often viewed as the gold standard of safety and meticulous engineering. However, a recent incident involving their flagship AI coding tool, Claude Code, has reminded the industry that even the giants are susceptible to the most fundamental of developer oversights.

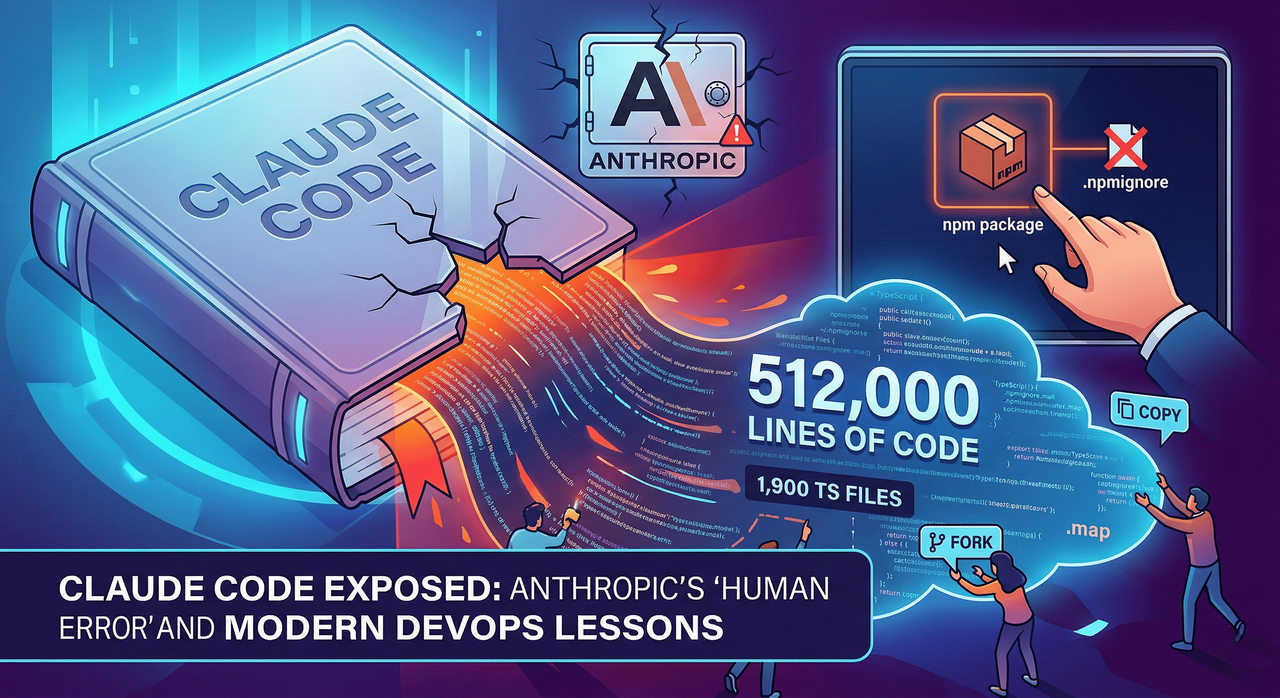

A simple misconfiguration in an npm package deployment led to the exposure of what appears to be the tool’s entire source code. This wasn't a sophisticated state-sponsored cyberattack or a complex breach of internal servers; it was a packaging error—a "human error"—that has left the AI and cybersecurity communities buzzing.

The Discovery: A Map to the Kingdom

The leak came to light on a Tuesday morning when security researcher Chaofan Shou identified a glaring anomaly in the official npm package for Claude Code. The package included a source map (.map) file, an artifact that debugging tools use to map minified or bundled code back to its original source.

While map files are invaluable during development, they are widely considered a "no-go" for production packages because they essentially act as a blueprint for the original logic. In this case, the map file didn't just hint at the structure; it contained a direct reference to an archive of unobfuscated TypeScript source hosted in Anthropic’s Cloudflare R2 storage bucket.
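To make the risk concrete, here is a minimal TypeScript sketch of how little effort it takes to read a source map. It relies only on the standard Source Map v3 fields (`sources`, `sourcesContent`, `sourceRoot`). The function name `inspectSourceMap`, the example paths, and the `storage.example.com` URL are hypothetical; the exact layout of the map file in the Claude Code package is not described here, so treat this as an illustration rather than a reconstruction.

```typescript
// Minimal shape of a Source Map v3 file; only the fields used below.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  sourceRoot?: string;
}

interface MapExposure {
  embeddedSources: string[]; // paths whose full original text is inlined in the map
  remoteReferences: string[]; // sourceRoot or sources values that are URLs
}

// Reports what a published .map file would reveal to anyone who opens it.
function inspectSourceMap(rawJson: string): MapExposure {
  const map = JSON.parse(rawJson) as SourceMapV3;

  // sourcesContent[i], when present, is the original text of sources[i].
  const embeddedSources = map.sources.filter(
    (_, i) => typeof map.sourcesContent?.[i] === "string",
  );

  // Anything that looks like an http(s) URL points at source hosted elsewhere.
  const candidates = [map.sourceRoot ?? "", ...map.sources];
  const remoteReferences = candidates.filter((s) => /^https?:\/\//.test(s));

  return { embeddedSources, remoteReferences };
}

// Hypothetical map file, not taken from any real package.
const exampleMap = JSON.stringify({
  version: 3,
  sources: ["src/cli.ts", "https://storage.example.com/src/tools.ts"],
  sourcesContent: ["export const main = () => {};", null],
  mappings: "",
});

const exposure = inspectSourceMap(exampleMap);
console.log(exposure);
```

Either kind of finding is a problem in a public package: inlined `sourcesContent` ships the original code directly, while a remote reference tells a reader exactly where to fetch it.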

Once the link was discovered, it was only a matter of time before the community descended. The resulting zip archive was a treasure trove of intellectual property:

- Approximately 1,900 TypeScript files.

- Over 512,000 lines of code.

- Comprehensive libraries of slash commands and internal built-in tools.

The Viral Spread and the "CCLeaks" Factor

Within hours of the discovery, the source code was mirrored across the web. A GitHub repository containing snapshots of the code was forked more than 41,500 times, ensuring that even if Anthropic managed to scrub the original source, the "genie" was officially out of the bottle.

Interestingly, this wasn't the first time the community had tried to peek under the hood of Claude Code. A dedicated project known as CCLeaks had already been working on reverse-engineering the tool to uncover hidden features or unreleased functions. However, this leak provided an "official" comparison point. For the operators of CCLeaks and other curious developers, this wasn't just a leak; it was a massive update to their existing research, providing a direct look at the latest iterations of the tool without the guesswork of reverse engineering.

Technical Deep Dive: How Build Pipelines Fail

The technical community has spent the days following the leak analyzing exactly how a company of Anthropic's stature could make such a "rookie" mistake. Software engineer Gabriel Anhaia provided a deep dive into the exposed code, highlighting that the root cause likely resides in the build pipeline configuration.

In modern JavaScript and TypeScript development, the files field in package.json and the .npmignore file act as the gatekeepers of what gets published to the public registry. The former is an allowlist of what to include; the latter is a denylist of what to leave out. If neither is strictly audited, internal files, test suites, or (as seen here) sensitive map files can slip through.

Common Pitfalls in Package Publishing

| Feature | Risk Factor | Proper Practice |
| --- | --- | --- |
| Map Files (.map) | Exposes original source logic and comments. | Exclude from production builds; keep for internal logs only. |
| .npmignore | Failure to list internal docs or config files. | Use a "whitelist" approach via the files field in package.json. |
| Hardcoded Paths | Reveals internal server structures or bucket URLs. | Use environment variables and abstract storage paths. |
| Dev Dependencies | Bloats the package and adds unnecessary attack surfaces. | Ensure npm publish only includes production-ready assets. |

As Anhaia pointed out, "A single misconfigured .npmignore or files field in package.json can expose everything." It serves as a sobering reminder that as tools become more automated, the human oversight at the end of the pipeline remains the most critical link in the chain.
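As a rough illustration of what auditing the files field could look like, the sketch below checks a parsed package.json for the most obvious mistakes. The `auditManifest` function, the `TOO_BROAD` list, and the example manifests are all hypothetical, and nothing here reflects Anthropic's actual configuration.

```typescript
// Only the package.json fields this sketch looks at.
interface PackageManifest {
  name: string;
  files?: string[];
}

// Entries broad enough to defeat the purpose of an allowlist (illustrative list).
const TOO_BROAD = new Set(["*", "**", "**/*", ".", "./"]);

// Returns a list of human-readable problems; an empty list means none were found.
function auditManifest(manifest: PackageManifest): string[] {
  const problems: string[] = [];

  // Without `files`, npm decides what to publish from .npmignore
  // (or .gitignore when no .npmignore exists), which is a denylist.
  if (manifest.files === undefined) {
    problems.push("no `files` allowlist; publishing relies on ignore rules");
    return problems;
  }

  for (const entry of manifest.files) {
    if (TOO_BROAD.has(entry)) {
      problems.push(`entry "${entry}" matches the whole project`);
    }
    if (entry.endsWith(".map")) {
      problems.push(`entry "${entry}" explicitly ships source maps`);
    }
  }
  return problems;
}

// Hypothetical manifests for illustration.
const risky: PackageManifest = { name: "example-cli", files: ["*", "dist/cli.js.map"] };
const tight: PackageManifest = { name: "example-cli", files: ["dist/cli.js", "README.md"] };

console.log(auditManifest(risky));
console.log(auditManifest(tight));
```

A manifest check like this has a clear limit: a directory entry such as `dist` still includes any map files the build wrote into that directory, so it is worth pairing with an inspection of the file list that actually ends up in the tarball.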

Anthropic’s Response: Accountability and Damage Control

To their credit, Anthropic did not hide behind corporate jargon. In a statement provided to The Register, a spokesperson confirmed that the incident was the result of human error during the release packaging process.

"Earlier today, a Claude Code release included some internal source code. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again."

The company was quick to emphasize that no customer data or credentials were involved in the exposure. While this mitigates the immediate privacy risk to users, the loss of intellectual property remains a significant blow. In an era where AI companies are fiercely protective of their "secret sauce," having more than 512,000 lines of code available for public scrutiny is a major setback.

The Legal and Ethical Grey Area

The aftermath of the leak has created a complex situation for developers who downloaded or forked the code. The original uploader on GitHub eventually repurposed the repository to host a Python-based feature port of Claude Code rather than the raw source files, citing concerns over legal liability and intellectual property theft.

However, the nature of the internet means that once data is public, it stays public. Hundreds of mirrors and forks remain active. For many in the infosec community, the ethical dilemma is clear: is it "fair game" to study leaked code from a multi-billion dollar corporation, or does it constitute a violation of trade secrets? While the legal answer is often "it's a violation," the practical reality is that thousands of developers are now studying Anthropic’s coding patterns, tool integrations, and safety protocols.

The Broader Context: A Tough Year for Anthropic

This leak comes at a sensitive time for Anthropic. The company has recently faced a series of challenges, including:

- Usage Limit Frustrations: Users have reported hitting usage caps "way faster than expected," leading to friction within the power-user community.

- Regulatory Pressure: Anthropic has been entangled in legal battles with the US government following unprecedented national security designations.

- Global Competition: The rise of high-performance AI models from Chinese competitors has put pressure on Anthropic to innovate faster, potentially leading to the "speed over safety" environment that allows packaging errors to occur.

Final Thoughts: A Wake-Up Call for the Industry

The Claude Code leak is a quintessential "teachable moment." It proves that no matter how advanced your AI is, your security is only as strong as your last npm publish.

For developers and DevOps engineers, the takeaway is clear: automate your audits. Relying on a human to remember to check a .npmignore file is a strategy destined for failure. Incorporating automated "leak detectors" and package size monitors into the CI/CD pipeline is no longer optional—it is a necessity.
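One possible shape for such a check is sketched below: a pure function that takes the list of files about to be published and fails on forbidden file types or an unexpected jump in size. The input shape is loosely modeled on the per-file entries reported by `npm pack --dry-run --json`; treat the field names as an assumption and verify them against your npm version. The `checkPackage` function, the `FORBIDDEN` rules, and the 5 MiB budget are hypothetical choices for illustration.

```typescript
// Assumed shape of one published file (path relative to the package root).
interface PackedFile {
  path: string;
  size: number; // bytes
}

interface GateResult {
  ok: boolean;
  reasons: string[];
}

// Illustrative rules; a real pipeline would tune these to its own build output.
const FORBIDDEN: { label: string; matches: (path: string) => boolean }[] = [
  { label: "source map", matches: (p) => p.endsWith(".map") },
  { label: "env file", matches: (p) => /(^|\/)\.env(\.|$)/.test(p) },
  {
    label: "raw TypeScript source",
    // Declaration files (.d.ts) are normally meant to be published.
    matches: (p) => p.endsWith(".ts") && !p.endsWith(".d.ts"),
  },
];

// Fails the gate if any forbidden file is present or the total size exceeds the budget.
function checkPackage(files: PackedFile[], maxTotalBytes: number): GateResult {
  const reasons: string[] = [];

  for (const file of files) {
    for (const rule of FORBIDDEN) {
      if (rule.matches(file.path)) {
        reasons.push(`${rule.label}: ${file.path}`);
      }
    }
  }

  const totalBytes = files.reduce((sum, f) => sum + f.size, 0);
  if (totalBytes > maxTotalBytes) {
    reasons.push(`size ${totalBytes} bytes exceeds budget of ${maxTotalBytes}`);
  }

  return { ok: reasons.length === 0, reasons };
}

const budget = 5 * 1024 * 1024; // hypothetical 5 MiB budget

// Hypothetical file lists for illustration.
const leaky: PackedFile[] = [
  { path: "package.json", size: 500 },
  { path: "dist/cli.js", size: 1000000 },
  { path: "dist/cli.js.map", size: 60000000 },
];
const clean: PackedFile[] = [
  { path: "package.json", size: 500 },
  { path: "dist/cli.js", size: 1000000 },
  { path: "dist/cli.d.ts", size: 2000 },
];

console.log(checkPackage(leaky, budget));
console.log(checkPackage(clean, budget));
```

In a pipeline, a step like this would run before publishing and stop the release when `ok` is false. The size budget acts as a second line of defense, since a large unexpected file tends to blow past it even when no pattern rule matches.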

Anthropic will likely recover from this, and their "safety-first" reputation may even be bolstered if they are transparent about the "measures" they are rolling out to prevent a recurrence. But for now, the source code of one of the world's most popular AI coding assistants remains a public case study in the importance of the fundamentals.

As we move deeper into the age of AI, let this be the reminder: the most sophisticated code in the world can still be undone by a single, misplaced file.
