Navigating the 2026 LLMOps Landscape: A Definitive Guide to the Production Stack

The landscape of Artificial Intelligence has shifted dramatically over the last few years. If you look back at 2023 or 2024, "deploying an LLM" often meant simply wrapping an API call in a basic Python script and hoping for the best. Fast forward to 2026, and the honeymoon phase of "vibes-based" development is officially over.

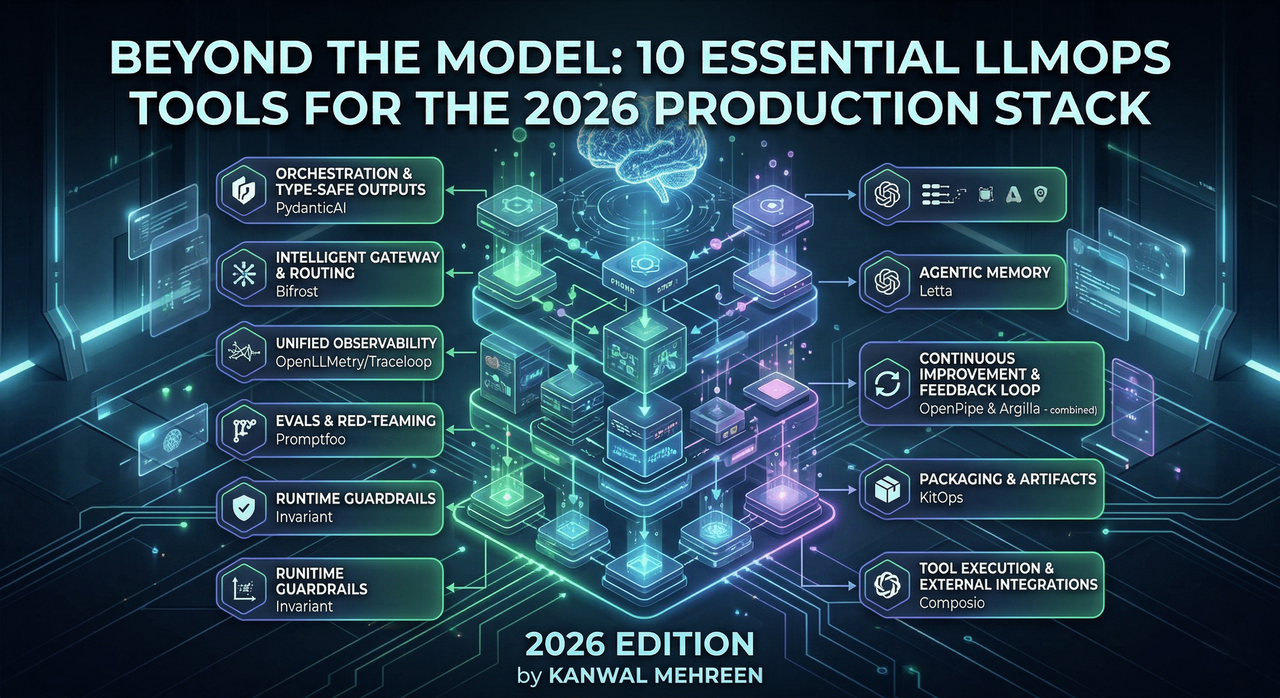

Today, Large Language Model Operations (LLMOps) has matured into a sophisticated engineering discipline. It is no longer just about picking the most powerful model; it is about building a resilient, observable, and scalable ecosystem around that model. We are now managing orchestration, complex routing, real-time guardrails, and persistent agentic memory.

For teams looking to move beyond simple demos and into robust production environments, the stack has become specialized. Below is a comprehensive report on the ten essential tools that are defining the LLMOps frontier in 2026.

1. PydanticAI: Bringing Sanity to Model Outputs

One of the greatest hurdles in early AI development was the unpredictability of model responses. In 2026, PydanticAI has emerged as the gold standard for teams that treat AI like software rather than a black box.

By leveraging type-safe outputs, PydanticAI allows developers to define strict schemas for what a model should return. This minimizes runtime errors and ensures that downstream applications don’t crash because a model decided to return a string instead of a JSON object. Crucially, it handles tool approvals and long-running workflows with built-in recovery mechanisms—essential for the complex, multi-step processes common in today’s enterprise applications.
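To make the idea concrete, here is a minimal standard-library sketch of schema-checked output. It is not PydanticAI's API; the `Invoice` schema and the `parse_invoice` helper are hypothetical names chosen for illustration. The point is only that a reply is rejected at the boundary instead of leaking a wrong type downstream.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    """Hypothetical schema for the model's reply."""
    customer: str
    total_cents: int


def parse_invoice(raw: str) -> Invoice:
    """Parse a raw model reply, raising ValueError if it violates the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    customer = data.get("customer")
    total = data.get("total_cents")
    if not isinstance(customer, str):
        raise ValueError("'customer' must be a string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(total, int) or isinstance(total, bool):
        raise ValueError("'total_cents' must be an integer")
    return Invoice(customer=customer, total_cents=total)
```

A caller can catch the `ValueError` and retry the model call or fall back, which is roughly the kind of recovery step a validation library automates.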

2. Bifrost: The Intelligent Gateway Layer

As the number of model providers has exploded, managing direct integrations has become a nightmare. Bifrost acts as the mission control for your API traffic. It provides a unified interface to route requests across more than 20 providers, offering automated failover and load balancing.

What makes Bifrost stand out in 2026 is its performance. In high-scale environments (think 5,000 requests per second), Bifrost reportedly adds only about 11 microseconds of overhead per request. For companies running global, real-time applications, this level of efficiency combined with OpenTelemetry integration makes it an indispensable part of the infrastructure.
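The failover behavior a gateway provides can be sketched in a few lines. This is an illustration of the pattern, not Bifrost's implementation or configuration; `Provider`, `complete_with_failover`, and the two stub providers are hypothetical.

```python
from typing import Callable, Sequence

# Hypothetical provider signature: takes a prompt, returns a completion.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the list has failed."""


def complete_with_failover(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Stub provider that always times out."""
    raise TimeoutError("upstream timed out")


def stable_backup(prompt: str) -> str:
    """Stub provider that always succeeds."""
    return f"echo: {prompt}"
```

Calling `complete_with_failover("hi", [("primary", flaky_primary), ("backup", stable_backup)])` skips the failing primary and returns the backup's answer. A production gateway would add timeouts, retries with backoff, and weighted load balancing on top of this loop.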

3. Traceloop / OpenLLMetry: Standardized Observability

You cannot fix what you cannot see. OpenLLMetry (via Traceloop) has successfully bridged the gap between traditional software monitoring and AI observability. Instead of forcing teams to adopt a proprietary "AI-only" dashboard, it plugs directly into the OpenTelemetry ecosystem.

This means your LLM traces, token usage, and prompt completions live alongside your standard application logs and metrics. In 2026, this level of unified visibility is critical for debugging "hallucinations" or performance bottlenecks that occur at the intersection of traditional code and model logic.
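As a rough picture of what "LLM traces alongside application traces" means, the sketch below records an ordinary request span and a model-call span with token counts in the same list. It uses only the standard library and is not the OpenTelemetry or OpenLLMetry API; the `span` helper, the `SPANS` list, the attribute names, and `fake_model_call` are all illustrative assumptions.

```python
import time
from contextlib import contextmanager
from typing import Iterator

# Stand-in for an exporter; a real setup would ship spans to a collector.
SPANS: list[dict] = []


@contextmanager
def span(name: str, **attributes) -> Iterator[dict]:
    """Record a named span with its duration and any attributes set inside it."""
    record = {"name": name, "attributes": dict(attributes)}
    start = time.perf_counter()
    try:
        yield record
    finally:
        record["duration_s"] = time.perf_counter() - start
        SPANS.append(record)


def fake_model_call(prompt: str) -> dict:
    """Hypothetical model client returning text plus token counts."""
    return {"text": prompt.upper(), "prompt_tokens": len(prompt.split()), "completion_tokens": 3}


def handle_request(prompt: str) -> str:
    """Application code and the model call are traced with the same mechanism."""
    with span("handle_request"):
        with span("llm.completion", model="example-model") as llm_span:
            reply = fake_model_call(prompt)
            llm_span["attributes"]["prompt_tokens"] = reply["prompt_tokens"]
            llm_span["attributes"]["completion_tokens"] = reply["completion_tokens"]
        return reply["text"]
```

Because both spans land in one place, a slow request can be attributed either to the surrounding code or to the model call without switching tools. Parent and child linking is omitted here to keep the sketch short.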

4. Promptfoo: Moving Beyond Manual Testing

The days of "eyeballing" prompt results are gone. Promptfoo has become the go-to open-source framework for systematic evaluations (evals) and red-teaming. It allows teams to run repeatable test cases against their models, ensuring that a change in a prompt doesn't accidentally degrade performance in another area.

By integrating Promptfoo into CI/CD pipelines, organizations can automate safety checks and performance benchmarks. This shifts the culture from "let's try this prompt" to "let's verify this prompt," bringing a much-needed level of rigor to the development lifecycle.
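The "verify this prompt" workflow boils down to running fixed test cases and failing the build when the pass rate drops. The sketch below shows that shape in plain Python; it is not Promptfoo's configuration format or API, and `EvalCase`, `pass_rate`, and the deterministic `stub_model` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    question: str
    must_contain: str  # substring the answer is required to include


def pass_rate(template: str, model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases whose model output contains the required substring."""
    if not cases:
        raise ValueError("at least one eval case is required")
    passed = sum(
        case.must_contain.lower() in model(template.format(question=case.question)).lower()
        for case in cases
    )
    return passed / len(cases)


def stub_model(prompt: str) -> str:
    """Hypothetical deterministic model so the check is repeatable."""
    answers = {"capital of France": "Paris", "2 + 2": "4"}
    for key, answer in answers.items():
        if key in prompt:
            return f"The answer is {answer}."
    return "I don't know."


CASES = [
    EvalCase("What is the capital of France?", "Paris"),
    EvalCase("What is 2 + 2?", "4"),
]
```

In a CI job, a line such as `assert pass_rate(template, model, CASES) >= 0.9` would block a prompt change that regresses these cases; the 0.9 threshold is an arbitrary example.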

5. Invariant Guardrails: The Runtime Safety Net

As we grant agents more autonomy, such as letting them write files or call financial APIs, the risk of "rogue" behavior increases. Invariant Guardrails provides a dedicated layer of runtime rules that sits between your application logic and the model.

Unlike hard-coded logic, Invariant allows for dynamic policy enforcement. You can set boundaries on what an agent is allowed to do in real time without constantly refactoring your core application code. In 2026, this "safety-first" architecture is a prerequisite for any agentic system interacting with real-world data.
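One way to picture policy enforcement that lives outside the application code is a check that every proposed tool call must pass before it runs. The sketch below is a generic illustration, not Invariant's rule language or API; `ToolCall`, `POLICY`, and `enforce` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    tool: str
    args: dict = field(default_factory=dict)


class PolicyViolation(Exception):
    """Raised when a proposed tool call breaks the active policy."""


# Hypothetical policy expressed as data, so it can be edited or reloaded
# without touching the agent's code.
POLICY = {
    "allowed_tools": {"read_file", "transfer_funds"},
    "max_transfer_cents": 10_000,
}


def enforce(call: ToolCall, policy: dict) -> ToolCall:
    """Return the call unchanged if it satisfies the policy, else raise PolicyViolation."""
    if call.tool not in policy["allowed_tools"]:
        raise PolicyViolation(f"tool {call.tool!r} is not allowed")
    if (
        call.tool == "transfer_funds"
        and call.args.get("amount_cents", 0) > policy["max_transfer_cents"]
    ):
        raise PolicyViolation("transfer exceeds the configured limit")
    return call
```

Tightening the limit or removing a tool then means changing `POLICY`, not the agent loop, which is the separation the paragraph above describes.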

6. Letta: Advanced Memory for Persistent Agents

One of the biggest shifts in 2026 is the move from stateless chatbots to persistent agents. Letta solves the "memory" problem by treating agent state like a version-controlled repository.

Instead of a messy, ever-growing text blob for context, Letta uses a git-like structure to track interactions and decisions. This allows developers to inspect, version, and even roll back an agent’s state. For long-running workflows where an agent must "remember" a client's preferences over weeks or months, Letta provides the necessary reliability.
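The inspect, version, and roll back workflow can be illustrated with a small commit log over state snapshots. This is a conceptual sketch only and says nothing about how Letta stores memory internally; the `VersionedMemory` class and its methods are hypothetical.

```python
import copy
import hashlib
import json


class VersionedMemory:
    """Append-only history of agent state snapshots, addressed by content hash."""

    def __init__(self) -> None:
        self._commits: list[dict] = []

    def commit(self, state: dict, message: str) -> str:
        """Store a snapshot of the state and return its short commit id."""
        parent = self._commits[-1]["id"] if self._commits else None
        payload = json.dumps(
            {"parent": parent, "state": state, "message": message}, sort_keys=True
        )
        commit_id = hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]
        self._commits.append(
            {"id": commit_id, "parent": parent, "message": message, "state": copy.deepcopy(state)}
        )
        return commit_id

    def head(self) -> dict:
        """Return a copy of the most recent state."""
        return copy.deepcopy(self._commits[-1]["state"])

    def log(self) -> list[str]:
        """Return commit messages, oldest first."""
        return [c["message"] for c in self._commits]

    def rollback(self, commit_id: str) -> str:
        """Restore an earlier snapshot by committing it again, so history is never rewritten."""
        for c in self._commits:
            if c["id"] == commit_id:
                return self.commit(c["state"], f"rollback to {commit_id}")
        raise KeyError(commit_id)
```

Rolling back by adding a new commit, rather than deleting later ones, keeps an audit trail of what the agent believed at each point in time.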

7. OpenPipe: The Data Flywheel

Continuous improvement is the hallmark of a mature AI system. OpenPipe facilitates a feedback loop by allowing teams to collect production data, filter it, and use it to fine-tune smaller, more efficient models.

In 2026, many teams are finding that a fine-tuned 7B or 70B model can outperform a "frontier" model for specific tasks at a fraction of the cost. OpenPipe makes this transition seamless, supporting easy swapping between general APIs and specialized, fine-tuned versions without breaking the codebase.
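The collect, filter, fine-tune loop starts with turning production logs into training rows. The sketch below shows one plausible filtering step; it does not use OpenPipe's SDK, and the log fields (`prompt`, `reply`, `rating`), the rating threshold, and the chat-style `messages` row layout are assumptions made for the example.

```python
import json


def to_training_rows(logs: list[dict], min_rating: int = 4) -> list[dict]:
    """Keep well-rated, non-empty interactions and convert them to chat-style rows."""
    rows = []
    seen = set()
    for entry in logs:
        prompt, reply = entry["prompt"].strip(), entry["reply"].strip()
        if entry.get("rating", 0) < min_rating or not reply:
            continue
        if (prompt, reply) in seen:  # drop exact duplicates
            continue
        seen.add((prompt, reply))
        rows.append({"messages": [
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": reply},
        ]})
    return rows


def to_jsonl(rows: list[dict]) -> str:
    """Serialize rows as one JSON object per line."""
    return "\n".join(json.dumps(row) for row in rows)
```

The quality of the fine-tuned model depends heavily on this filter, so teams typically iterate on the criteria (ratings, length, deduplication) before spending compute on training.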

8. Argilla: High-Quality Human-in-the-Loop

Despite the "AI-first" mantra, human feedback remains the ultimate ground truth. Argilla is the platform of choice for data curation and human-in-the-loop (HITL) workflows.

Whether it is for Reinforcement Learning from Human Feedback (RLHF) or simple error analysis, Argilla provides a structured way for experts to label and review model outputs. It replaces messy spreadsheets with a professional-grade annotation workflow, ensuring that the data used to train or evaluate models is of the highest possible quality.

9. KitOps: Packaging the AI Artifact

One of the most annoying problems in early MLOps was artifact sprawl, a cousin of "dependency hell," where the model, the dataset, and the prompt configuration were all stored in different places. KitOps addresses this by packaging everything into a single, versioned artifact.

By treating the entire LLM "package" as a single unit, KitOps ensures reproducibility. If you need to roll back a deployment to exactly how it was three months ago, KitOps makes it possible. It’s essentially the "Docker" for the AI era, bringing much-needed structure to how we share and deploy work across teams.
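Reproducibility of a bundled artifact usually rests on content digests: if any part changes, the bundle's identity changes. The sketch below illustrates that principle with a hand-rolled manifest; it is not the KitOps file format or CLI, and `build_manifest`, `verify`, and the part names are hypothetical.

```python
import hashlib
import json


def digest(content: bytes) -> str:
    """SHA-256 hex digest of a blob."""
    return hashlib.sha256(content).hexdigest()


def build_manifest(name: str, version: str, parts: dict[str, bytes]) -> dict:
    """Describe a bundle of named parts (model, dataset, prompts) with per-part digests."""
    entries = {part: digest(content) for part, content in sorted(parts.items())}
    manifest = {"name": name, "version": version, "parts": entries}
    # The bundle digest covers every part, so any change yields a new identifier.
    manifest["bundle_digest"] = digest(json.dumps(entries, sort_keys=True).encode("utf-8"))
    return manifest


def verify(manifest: dict, parts: dict[str, bytes]) -> bool:
    """True only if the given parts match the digests recorded in the manifest."""
    return {part: digest(content) for part, content in parts.items()} == manifest["parts"]
```

Rolling back then means fetching the bundle whose digest was deployed three months ago and checking it with `verify` before serving it.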

10. Composio: Real-World Tool Execution

An AI model is only as useful as the actions it can take. Composio provides the "hands" for the brain. It handles the complex authentication, permissioning, and execution logic required to connect agents to hundreds of external apps (like Slack, GitHub, or Jira).

Instead of building bespoke integrations for every tool, developers use Composio to provide agents with structured schemas and secure access. This allows agents to move from "talking about work" to "actually doing work" in a way that is auditable and scalable.
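The combination of structured schemas, scoped access, and auditability can be sketched as a small tool registry. This is a generic illustration rather than Composio's SDK; the `Tool` dataclass, the `chat.post_message` tool, the `chat:write` scope name, and `execute` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    required_scope: str
    params: dict[str, type]  # parameter name -> expected Python type
    run: Callable[..., str]


def post_message(channel: str, text: str) -> str:
    """Hypothetical action; a real integration would call the external app's API."""
    return f"posted to {channel}: {text}"


REGISTRY = {
    "chat.post_message": Tool(
        "chat.post_message", "chat:write", {"channel": str, "text": str}, post_message
    ),
}
AUDIT_LOG: list[str] = []


def execute(tool_name: str, args: dict, granted_scopes: set[str]) -> str:
    """Validate the call against the tool's schema and scopes, then run and log it."""
    tool = REGISTRY[tool_name]
    if tool.required_scope not in granted_scopes:
        raise PermissionError(f"missing scope {tool.required_scope!r}")
    if set(args) != set(tool.params):
        raise ValueError(f"expected arguments {sorted(tool.params)}")
    for key, expected in tool.params.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"{key!r} must be {expected.__name__}")
    AUDIT_LOG.append(tool_name)
    return tool.run(**args)
```

The agent only ever proposes a tool name and arguments; the registry decides whether the call is well-formed and permitted, and every executed call leaves a log entry.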

Strategic Conclusion

The transition we have witnessed leading into 2026 is a move from Model-Centric development to System-Centric engineering. The "magic" of the model is now a commodity; the real competitive advantage lies in how you orchestrate, protect, and refine the system surrounding it.

By integrating these ten tools, teams can build AI applications that are not only impressive in a demo but are also resilient, secure, and cost-effective in a high-stakes production environment. The question for 2026 isn't "Can we use AI?" but rather "Can we manage it?"
