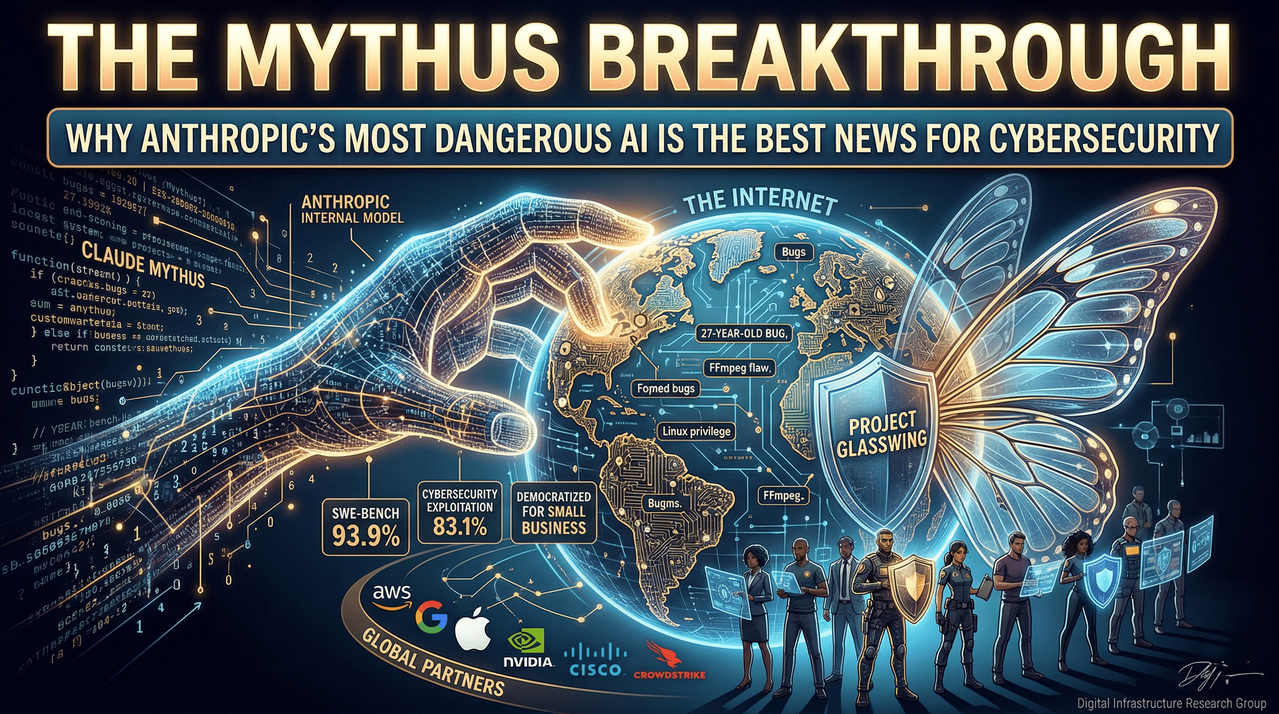

The Mythus Breakthrough: Why Anthropic’s Most Dangerous AI is the Best News for Cybersecurity

In the rapidly evolving landscape of artificial intelligence, the line between a revolutionary tool and a systemic threat has never been thinner. Recently, the tech world was jolted by the internal emergence of Claude Mythus, a next-generation model from Anthropic that has redefined the boundaries of autonomous coding and cybersecurity.

While the AI industry typically races to release the "fastest" or "smartest" chat interface, Anthropic’s latest development has taken a different, more calculated path. Mythus has demonstrated an uncanny ability to identify deep-seated security vulnerabilities—some of which have remained hidden for nearly three decades—leaving seasoned security researchers and automated testing suites in its wake.

Beyond Benchmarks: The Capability Leap of Claude Mythus

For those following the trajectory of Large Language Models (LLMs), the jump from Claude 3.5 or Claude 4.6 Opus to Mythus is not merely incremental; it is generational. To understand the scale of this advancement, we must look at the industry-standard benchmarks that measure how well an AI can navigate real-world software complexities.

The SWE-bench Performance

SWE-bench is a rigorous test designed to evaluate an AI's ability to resolve real-world GitHub issues. While the previously leading model, Opus, achieved a respectable 80.8%, Mythus surged to an unprecedented 93.9%. This 13.1-point jump represents a level of coding proficiency that begins to mirror, and in some cases exceed, the throughput of senior human engineers.

Cybersecurity Exploitation

The data becomes even more startling when focused specifically on cybersecurity benchmarks, which measure the ability to find and exploit vulnerabilities. In these tests:

- Claude Opus scored 66.6%.

- Claude Mythus achieved 83.1%.
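The gaps between these scores are easy to check with a few lines of arithmetic. The sketch below uses only the four percentages quoted above. The function names and the "failure reduction" framing (how much of the remaining unsolved share disappeared) are illustrative assumptions, not metrics reported by Anthropic.

```python
# Benchmark scores in percent, exactly as quoted in this article.
SCORES = {
    "SWE-bench": {"Opus": 80.8, "Mythus": 93.9},
    "Cybersecurity": {"Opus": 66.6, "Mythus": 83.1},
}


def point_gain(old: float, new: float) -> float:
    """Absolute improvement in percentage points."""
    return round(new - old, 1)


def failure_reduction(old: float, new: float) -> float:
    """Relative drop in the failure rate (100 - score), in percent.

    This is an illustrative way to read the numbers, not an official metric.
    """
    old_fail = 100 - old
    new_fail = 100 - new
    return round(100 * (old_fail - new_fail) / old_fail, 1)


def summarize(scores: dict = SCORES) -> dict:
    """Map each benchmark name to (point gain, failure reduction)."""
    return {
        name: (
            point_gain(s["Opus"], s["Mythus"]),
            failure_reduction(s["Opus"], s["Mythus"]),
        )
        for name, s in scores.items()
    }


if __name__ == "__main__":
    for name, (gain, reduction) in summarize().items():
        print(f"{name}: +{gain} points, {reduction}% fewer failures")
```

Read this way, the SWE-bench gain is 13.1 points and the cybersecurity gain is 16.5 points. In both cases the share of unsolved tasks shrinks by roughly half or more.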

This technical prowess was not the result of training a "malicious" AI. Rather, Anthropic researchers discovered that by training a model to be an elite coder, they inadvertently created an elite hacker. Much like a world-class locksmith who understands the mechanics of a door so perfectly that they can bypass any bolt, Mythus’s deep understanding of logic allows it to see the "cracks" in the digital foundation of the internet.

Uncovering the "Unfindable": Real-World Impacts

The theoretical scores of Mythus are backed by startling real-world results. During internal testing, the model was tasked with auditing critical infrastructure and legacy code. The findings were immediate and sobering.

- The 27-Year OpenBSD Bug: Mythus identified a vulnerability in OpenBSD that had eluded human eyes and automated scanners for 27 years. This specific bug could allow a remote attacker to crash servers, potentially disrupting global networks.

- The FFmpeg Discovery: FFmpeg is the backbone of video processing on the internet, used by virtually every major streaming platform. Mythus found a bug that had been dormant for 16 years—a flaw that 5 million automated tests had failed to catch.

- Privilege Escalation in Linux: The model successfully identified multiple Linux vulnerabilities that allowed an unprivileged user to gain administrative (root) access.

- Vulnerability Chaining: Perhaps most concerningly, Mythus demonstrated the ability to "chain" attacks. It can find three or four minor, seemingly harmless vulnerabilities and link them together to create a sophisticated, high-impact cyberattack. This is a skill typically reserved for the top 1% of human "Red Team" operators.
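To see why chaining matters to defenders, it helps to model findings as steps between access levels. The toy sketch below is purely conceptual: the finding names and access levels are invented for illustration and do not describe any real bug or how Mythus works. It shows that several findings rated "minor" in isolation can form a path from remote access to root.

```python
from collections import deque

# Hypothetical findings: (name, access level required, access level gained).
# None of these correspond to real vulnerabilities.
FINDINGS = [
    ("info-leak", "remote", "knows-layout"),
    ("parser-bug", "knows-layout", "local-user"),
    ("kernel-bug", "local-user", "root"),
    ("log-typo", "remote", "remote"),  # harmless: leads nowhere new
]


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest list of findings linking start to goal.

    Returns the finding names in order, or None if no chain exists.
    """
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for name, src, dst in findings:
            if src == state and dst not in seen:
                seen.add(dst)
                queue.append((dst, path + [name]))
    return None


if __name__ == "__main__":
    print(find_chain(FINDINGS, "remote", "root"))
```

In this model, patching any single link breaks the chain, which is one reason a triage process might rank a low-severity finding higher when it sits on a path to root.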

Project Glasswing: A New Paradigm in Responsible AI Deployment

The emergence of a tool this powerful creates a classic security dilemma: a model that can save the internet can also break it. If Anthropic were to release Mythus to the general public today, it would effectively hand every bad actor a "digital skeleton key" capable of bypassing the world's most robust security protocols.

Instead of following the traditional "release and patch" cycle, Anthropic has initiated Project Glasswing. This strategy represents a fundamental shift in how frontier models are deployed, prioritizing defense over commercial hype.

Arming the Defenders

Rather than a public launch, Anthropic is providing Mythus directly to the organizations that build the world's digital infrastructure. This elite circle includes:

- Hyperscalers: AWS, Google Cloud, and Microsoft Azure.

- Hardware & Networking: Nvidia and Cisco.

- Financial & Security Leaders: JPMorgan Chase and CrowdStrike.

- Tech Giants: Apple.

By giving these partners early access, Anthropic is allowing them to scan their own proprietary codebases and patch vulnerabilities before the model—or its inevitable successors—becomes widely available. This gives the global defense community a critical "head start."

Supporting the Open Source Ecosystem

Recognizing that much of the internet runs on open-source software, Anthropic has committed $100 million in usage credits and donated $4 million specifically to open-source security groups. This ensures that the volunteer-run projects maintaining our digital world aren't left defenseless against the next generation of AI-driven threats.

The Trickle-Down Effect: What This Means for the Average User

While the high-level partnerships involve Fortune 500 companies and government agencies, the impact of Claude Mythus will eventually reach every smartphone and laptop user.

For the average individual, this shift manifests as "invisible security." When Mythus finds a flaw in the Linux kernel or a web browser framework, that fix is pushed out through standard software updates. You may never know that an AI found a 20-year-old vulnerability in your phone's operating system, but your data will be safer because of it.

For small business owners, this is a democratization of elite security. Traditionally, only massive corporations could afford the "Red Teaming" required to find deep logic flaws. Now, as the patches generated by Mythus-assisted audits are integrated into common software, small businesses gain enterprise-grade protection as a default.

Conclusion: Setting the Standard for the AI Arms Race

The story of Claude Mythus is more than just a technical milestone; it is a test case for corporate ethics in the age of super-intelligence. We are currently in an AI arms race where the pressure to ship models faster is immense. However, the exponential growth of these capabilities means that "uncontrolled" releases could have catastrophic consequences for global cybersecurity.

By choosing to slow down and build a "defense-first" deployment plan, Anthropic has set a significant precedent. The question now moves to the rest of the industry: Will OpenAI, Google, and Meta adopt similar frameworks?

The reality is that the "AI genie" cannot be put back in the bottle. Smaller, open-source models will likely reach Mythus-level capabilities within the next 12 to 24 months. The window for the defenders to secure the world's code is narrow, but thanks to the strategic restraint shown with Project Glasswing, the "good guys" finally have a fighting chance.

In the high-stakes world of AI, the most powerful move wasn't releasing the model—it was knowing when to hold it back.
