Anthropic Reopens Claude Agents, But Limits Usage

Anthropic has reversed one of its most controversial Claude subscription restrictions, allowing paid users to connect third-party agent tools such as OpenClaw through the Claude Agent SDK again. For developers, builders, and AI power users, the decision looks at first like a restoration of access. But the details show a much more disciplined pricing strategy: Claude subscriptions are no longer an open-ended gateway for programmatic automation.

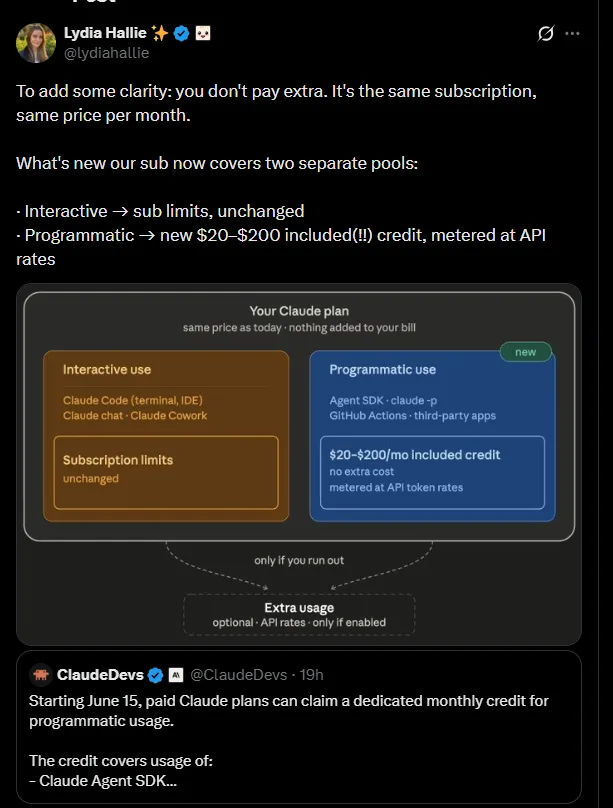

Starting June 15, 2026, eligible Claude paid plans will include a separate monthly Agent SDK credit. This credit applies to programmatic usage, including Claude Agent SDK projects, the claude -p command for non-interactive tasks, Claude Code GitHub Actions, and third-party applications built on the Agent SDK. Anthropic’s own help documentation states that interactive usage in Claude, Claude Code, and Claude Cowork remains separate, while programmatic usage draws from the new credit pool.

The change matters because it redraws the boundary between human-driven AI assistance and autonomous agent workflows. A developer chatting with Claude in the browser or using Claude Code interactively can still rely on the standard subscription experience. But the moment that same user runs a non-interactive command, connects an external agent, or automates tasks through third-party software, the system moves into the new Agent SDK credit model.

According to the reported details, the dedicated monthly credits are tied to subscription tier: $20 for Pro plans, $100 for Max 5x, $200 for Max 20x, and higher seat-based allocations for certain Team and Enterprise plans. Once those credits are exhausted, programmatic usage stops unless the user enables extra usage billing at standard API rates. Credits also do not roll over, creating a monthly “use it or lose it” structure for developers who depend on agentic workflows.
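The rules in this paragraph can be modeled in a few lines of Python. This is a minimal sketch, not Anthropic's billing logic: the tier amounts are the figures reported above, while the `CreditMeter` class, its field names, and the choice to block a request that only partly fits in the remaining credit are assumptions made for illustration.

```python
from dataclasses import dataclass

# Monthly Agent SDK credit per tier in USD, as reported in this article.
# The dictionary keys are hypothetical labels, not official plan identifiers.
TIER_CREDIT_USD = {"pro": 20.0, "max_5x": 100.0, "max_20x": 200.0}


@dataclass
class CreditMeter:
    tier: str
    extra_usage_enabled: bool = False
    used_usd: float = 0.0      # drawn from the monthly credit
    overage_usd: float = 0.0   # billed separately once the credit is gone

    @property
    def remaining_usd(self) -> float:
        return max(TIER_CREDIT_USD[self.tier] - self.used_usd, 0.0)

    def charge(self, cost_usd: float) -> bool:
        """Record one programmatic request; return False if it is blocked."""
        from_credit = min(cost_usd, self.remaining_usd)
        overflow = cost_usd - from_credit
        # Assumption: a request that exceeds the credit is blocked outright
        # unless extra usage billing has been enabled.
        if overflow > 0 and not self.extra_usage_enabled:
            return False
        self.used_usd += from_credit
        self.overage_usd += overflow
        return True

    def start_new_cycle(self) -> None:
        """Credits do not roll over: any unused balance is discarded."""
        self.used_usd = 0.0
        self.overage_usd = 0.0
```

On a Pro plan in this model, a $15 charge leaves $5 of credit, a further $10 charge is blocked, and the same charge succeeds with $5 of overage once `extra_usage_enabled` is set.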

This is not simply a product update. It is a major signal about the economics of AI agents.

Why Anthropic Changed Course

The conflict around OpenClaw and similar third-party tools began because Claude subscriptions were being used in ways that resembled API-scale automation rather than normal consumer or developer assistance. Earlier in 2026, some users could pay a flat monthly fee for Claude Pro or Max and then route heavy autonomous agent workloads through tools that consumed far more compute than the subscription price could reasonably cover.

That created what many developers called a “compute arbitrage” opportunity. A $20 monthly Claude subscription could, in some workflows, power agent tasks that would have cost far more under normal token-based API billing. From the user’s perspective, this made Claude subscriptions extremely valuable. From Anthropic’s perspective, it introduced a margin and infrastructure problem.

In April 2026, Anthropic moved to block or restrict these workflows, directing users toward API billing or extra usage credits. Reporting at the time described the policy as a major limitation for OpenClaw and similar agent platforms, with Anthropic citing outsized strain from third-party tools and usage patterns that did not match the assumptions behind flat-rate subscriptions.

The new Agent SDK credit system appears to be Anthropic’s compromise. The company is no longer treating third-party agents as a violation by default. Instead, it is allowing them under a clearly measured budget. That gives developers a sanctioned path to use external tools, while also preventing unlimited consumption under a fixed subscription price.

What Changes for Claude Subscribers

For ordinary Claude users, the change may not feel dramatic. If a subscriber mostly uses Claude through the web app, desktop app, mobile app, or interactive Claude Code sessions, the core subscription experience remains largely intact. The new credit system is aimed at programmatic usage, not normal chat.

For builders, however, the change is significant. Workflows using the Claude Agent SDK, automated scripts, GitHub Actions, or third-party agent tools now have a hard monthly meter. Once the dedicated credit is used, the user must either wait until the next billing cycle or enable additional paid usage.

This distinction is important for anyone building around Claude. Interactive assistance is still treated as subscription usage. Automation is treated more like API usage. That means developers must now think about cost, token efficiency, caching, and workflow design in a much more deliberate way.

For small experiments, the monthly credit may be enough. A solo developer testing lightweight scripts or simple agent prototypes could treat the credit as a useful sandbox. But for frequent automation, production workflows, or long-running autonomous agents, the credit may be consumed quickly.

That is why many developers see the policy not as a pure restoration, but as a reclassification. Anthropic is giving access back, but it is also ending the most generous interpretation of what a Claude subscription can do.

Strategic Impact on the Agent Ecosystem

The broader implication is that Anthropic is legitimizing third-party agent platforms while making sure they are economically sustainable. This is a meaningful shift for the agentic AI ecosystem.

OpenClaw, Conductor, and other third-party tools rely on the idea that AI models can act through external interfaces, workflows, command-line systems, and app integrations. These tools are part of a larger movement toward autonomous AI agents that can perform tasks across software environments with minimal human supervision.

By allowing third-party apps to authenticate through the Agent SDK, Anthropic is acknowledging that this workflow is real and important. It is no longer treating external agents as an edge case. At the same time, the company is making clear that autonomous usage cannot be subsidized indefinitely by consumer-style subscription pricing.

This could push the market toward more professionalized agent development. Builders will need to design agents that are efficient, cache-aware, and cost-conscious. Wasteful loops, poorly structured prompts, and unnecessary context expansion will no longer be hidden behind a flat subscription. They will directly burn through monthly credits.
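One simple way to avoid paying twice for identical work is client-side memoization, sketched below. Note that this is a different technique from server-side prompt caching: it only skips calls whose prompt text is exactly the same. The `CachedCaller` class is hypothetical, and `call_model` stands in for whatever function an agent uses to send a prompt and get a reply.

```python
import hashlib
from typing import Callable, Dict


class CachedCaller:
    """Wrap a model-calling function so repeated prompts are served locally."""

    def __init__(self, call_model: Callable[[str], str]) -> None:
        self._call_model = call_model      # hypothetical: prompt in, reply out
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def __call__(self, prompt: str) -> str:
        # Hash the prompt so long contexts make compact dictionary keys.
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        reply = self._call_model(prompt)
        self._cache[key] = reply
        return reply
```

The `hits` and `misses` counters give a rough view of how much of an agent's traffic is repeated work. Memoization is only appropriate for steps where a reused answer is acceptable, such as deterministic classification or summarizing an unchanged file.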

In that sense, Anthropic’s policy may accelerate a more mature phase of AI agent infrastructure. The first phase rewarded experimentation and clever routing. The next phase will reward efficiency, observability, and predictable cost control.

Developer Backlash and Market Risk

The developer reaction has been sharply negative in some circles. Many power users had built their workflows around the assumption that Claude subscriptions could support heavy third-party agent usage. For them, the new policy feels like a downgrade, even if Anthropic presents it as a cleaner and more sustainable structure.

The criticism is especially strong because the new credits are framed as an added benefit, while many users see them as a replacement for broader access they previously enjoyed. That gap between company messaging and user perception is where much of the frustration comes from.

Some developers argue that the change reduces the value of Claude Pro and Max plans for serious builders. Others see it as a sign that Anthropic is under pressure from compute costs and GPU constraints. The core complaint is not that API billing exists; most developers understand that heavy usage costs money. The complaint is that a workflow once treated as part of the subscription is now being separated into a smaller, metered allowance.

This creates a competitive opening for rivals such as OpenAI, Google, and smaller model providers. If developers feel that Claude’s agent economics are becoming less attractive, they may test alternatives. In AI coding and agent workflows, developer loyalty can shift quickly when pricing, reliability, or model performance changes.

Still, Anthropic’s position is understandable from a business standpoint. High-quality AI inference is expensive. Autonomous agents can generate enormous token volumes. If a small number of power users consume far more compute than their plans cover, flat-rate pricing becomes difficult to sustain for everyone else.

What AI Builders Should Do Next

For developers using Claude with OpenClaw or similar tools, the new rule is simple: treat programmatic Claude usage as metered infrastructure. The monthly Agent SDK credit should be seen as a starting allowance, not an unlimited automation budget.

Builders should begin tracking how many tasks their agents run, how much context they send, and how often they repeat expensive operations. They should also compare the cost of using Claude subscriptions plus extra usage against direct API billing. In some cases, the subscription credit may be convenient. In other cases, a dedicated API setup may be more transparent for production use.
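The tracking described here can start as a small usage log, sketched below. The per-token rates are placeholder values chosen for the example, not figures from any price list, and `UsageLog` with its methods is a hypothetical helper rather than part of any SDK. Real token counts would come from whatever usage data the agent tooling reports.

```python
from collections import defaultdict

# Placeholder rates in USD per million tokens (assumption); replace them
# with the current published pricing for the model in use.
INPUT_USD_PER_MTOK = 3.0
OUTPUT_USD_PER_MTOK = 15.0


def run_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one agent run from its token counts."""
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


class UsageLog:
    """Accumulate estimated spend and run counts per named task."""

    def __init__(self) -> None:
        self.cost_by_task = defaultdict(float)
        self.runs_by_task = defaultdict(int)

    def record(self, task: str, input_tokens: int, output_tokens: int) -> None:
        self.cost_by_task[task] += run_cost_usd(input_tokens, output_tokens)
        self.runs_by_task[task] += 1

    def total_usd(self) -> float:
        return sum(self.cost_by_task.values())

    def projected_month_usd(self, days_elapsed: int, days_in_month: int = 30) -> float:
        """Linear projection of spend so far to a full billing cycle."""
        if days_elapsed <= 0:
            raise ValueError("days_elapsed must be positive")
        return self.total_usd() * days_in_month / days_elapsed
```

Comparing `projected_month_usd` with the plan's monthly credit shows early in the cycle whether a workflow fits inside the allowance or is heading for extra usage billing. The per-task breakdown shows which automation is responsible.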

Teams should also separate experimentation from production. A Claude subscription may be useful for prototyping agent behavior, testing prompts, and running small automations. But high-volume agent systems should be designed with billing controls, monitoring, and fallback options from the beginning.

The Bottom Line

Anthropic’s restoration of OpenClaw and third-party agent usage is both a concession and a correction. It gives developers back an official path to use Claude subscriptions with external agent tools, but it also closes the door on unlimited programmatic usage under flat-rate pricing.

For casual users, this may be a minor billing detail. For AI builders, it is a major economic shift. The agentic era for Claude subscribers is not ending, but it is becoming metered, budgeted, and more closely aligned with API economics.

Anthropic has protected its margins and reduced the risk of runaway compute usage. But it has also challenged the goodwill of some of its most enthusiastic developer users. Whether that trade-off proves wise will depend on how valuable Claude remains for coding, automation, and agent workflows compared with competing AI platforms.

For now, the message is clear: Claude agents are back, but the free ride is over.
