Anthropic’s Claude Opus 4.7: A Deep Dive into the New Era of Agentic AI and Cyber Safeguards

Frontier AI models are shifting from simple chatbots to autonomous "agents": systems capable of executing complex, multi-step tasks with minimal human intervention. Anthropic, a prominent lab in both AI safety and capability, has made its next major move in this direction with the general availability of Claude Opus 4.7.

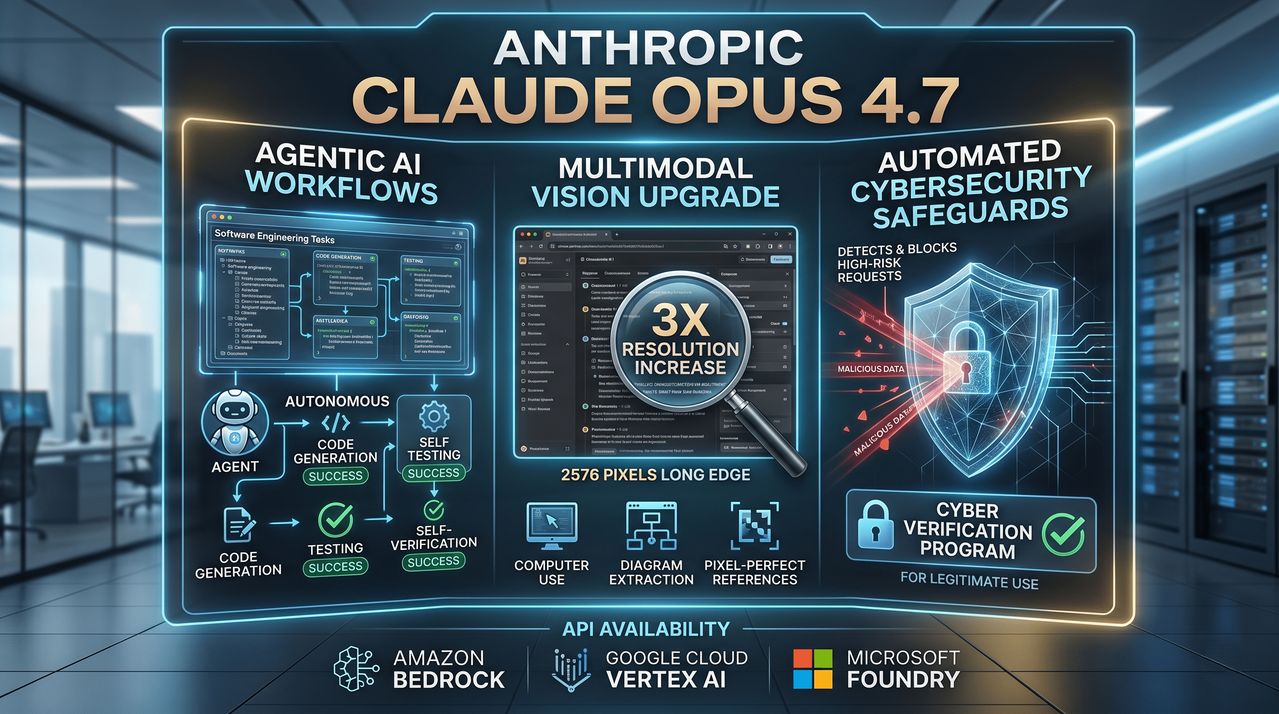

This release is more than an incremental update; it is aimed squarely at software engineering teams and enterprise developers who are pushing the boundaries of unsupervised AI workflows. With upgrades to vision and instruction fidelity, plus a new approach to cybersecurity safeguards, Opus 4.7 sets a new reference point for what we can expect from high-reasoning models in 2026.

The Shift Toward Agentic Autonomy

For months, the AI industry has been buzzing about "agentic workflows." These are setups where an AI doesn't just answer a prompt but actively works through a file system, writes code, tests it, and iterates until a goal is achieved. Anthropic’s Opus 4.7 is designed specifically for this high-stakes environment.
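The write, test, iterate cycle described above can be sketched as a small control loop. This is a minimal illustration rather than Anthropic's implementation: `run_until_passing`, `toy_propose`, and `toy_check` are hypothetical names, and the toy functions stand in for a real model call and a real test runner.

```python
from typing import Callable, Optional, Tuple


def run_until_passing(
    propose: Callable[[Optional[str]], str],
    check: Callable[[str], Optional[str]],
    max_attempts: int = 5,
) -> Tuple[Optional[str], int]:
    """Ask for a candidate, test it, and retry with the failure as feedback.

    Returns (accepted_candidate_or_None, attempts_used).
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = propose(feedback)  # in a real harness: a model call
        feedback = check(candidate)    # in a real harness: run the test suite
        if feedback is None:           # None means every check passed
            return candidate, attempt
    return None, max_attempts


# Toy stand-ins so the sketch runs without a model or a test runner.
def toy_propose(feedback: Optional[str]) -> str:
    # The first draft has a bug; the fix appears once feedback arrives.
    return "a - b" if feedback is None else "a + b"


def toy_check(expression: str) -> Optional[str]:
    result = eval(expression, {"a": 2, "b": 3})
    return None if result == 5 else f"expected 5, got {result}"


solution, attempts = run_until_passing(toy_propose, toy_check)
print(solution, attempts)  # a + b 2
```

The `max_attempts` cap is the part worth keeping in any real harness: an unsupervised loop needs a hard stop so that a task the model cannot solve does not consume tokens indefinitely.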

Compared to its predecessor, Opus 4.6, the new 4.7 iteration displays a marked improvement in advanced software engineering. In internal testing and early deployments, the model has shown a newfound rigor when tackling long-running tasks. Instead of simply providing a "best guess," Opus 4.7 is now more likely to devise internal verification steps—checking its own work before reporting a final result. For developers, this reduces the "babysitting" time usually required when using AI for coding, allowing the model to function more like a junior developer and less like a glorified autocomplete.

Availability and Market Integration

Anthropic is ensuring that Opus 4.7 is accessible exactly where developers need it. The model is currently available via:

- Claude.ai (Pro and Team plans)

- The Anthropic API

- Amazon Bedrock

- Google Cloud’s Vertex AI

- Microsoft Foundry

Crucially for enterprise budgeting, the pricing remains consistent with the previous version: $15 per million input tokens and $75 per million output tokens. Opus 4.7 is not the cheapest model on the market; the case for it rests on its high-reasoning capabilities and reduced error rates.
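For budgeting, the per-token rates translate into a simple calculation. The sketch below uses the rates exactly as quoted in this article; treat them as inputs to verify against the provider's current price list. The function name and the example token counts are hypothetical.

```python
# Rates as quoted in this article (USD per million tokens); verify before relying on them.
INPUT_USD_PER_MTOK = 15.00
OUTPUT_USD_PER_MTOK = 75.00


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request (or one whole job) in US dollars."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Hypothetical job: 200k tokens read, 20k tokens written.
print(estimate_cost_usd(200_000, 20_000))  # 4.5
```

Because output tokens cost five times as much as input tokens at these rates, output volume tends to dominate the bill for agentic jobs that generate a lot of code or reasoning.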

Pixel-Perfect Vision: A Leap in Multimodal Processing

One of the most tangible upgrades in Opus 4.7 is its visual acuity. As AI agents are increasingly tasked with "Computer Use"—the ability to navigate a screen like a human—the resolution of their "eyes" matters.

Opus 4.7 can now accept images up to 2,576 pixels on the long edge, or approximately 3.75 megapixels. That is roughly three times the pixel count accepted by prior Claude models. Why does this matter?

- Dense Screenshots: Agents can now read small text in complex software GUIs.

- Complex Diagrams: Data extraction from architectural blueprints or intricate flowcharts is significantly more accurate.

- Pixel-Perfect References: For UI/UX designers, the model can now spot minute details that were previously blurred or compressed.

The higher resolution is a model-level change rather than an API option, so images sent through the API are processed at the higher fidelity automatically. Because larger images consume more tokens, users who don't require high-fidelity detail can downsample their images before sending them to keep token costs under control.
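As a rough sketch of the downsampling step, the helper below computes target dimensions that keep the aspect ratio while capping the long edge. It assumes the 2,576-pixel figure quoted above is the only constraint that matters; `fit_long_edge` is a hypothetical helper, and the actual resize would be done with whatever image library you already use.

```python
from typing import Tuple

MAX_LONG_EDGE_PX = 2576  # limit quoted in this article


def fit_long_edge(
    width: int, height: int, max_long_edge: int = MAX_LONG_EDGE_PX
) -> Tuple[int, int]:
    """Return (width, height) scaled down so the long edge fits the cap.

    Images already within the cap are returned unchanged (no upscaling).
    """
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height


print(fit_long_edge(3840, 2160))  # (2576, 1449)
print(fit_long_edge(800, 600))    # (800, 600)
```

A 4K screenshot scaled this way comes out at about 3.73 megapixels, in line with the approximate figure quoted above. Passing a smaller `max_long_edge` is the lever for trading detail against token cost.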

Instruction Fidelity: The "Literal" Model

A fascinating shift in Opus 4.7’s behavior is how it interprets human language. While older models often tried to "read between the lines"—sometimes skipping steps they deemed redundant—Opus 4.7 has been tuned for extreme instruction fidelity.

It takes instructions literally. If you tell the model to follow a specific 10-step process, it will no longer take shortcuts. This is a double-edged sword for developers. While it leads to much more predictable behavior in autonomous agents, it also means that existing prompt libraries may need to be re-tuned. If a prompt was "loose" or relied on the model's intuition to fill gaps, Opus 4.7 might follow the literal (and perhaps flawed) text to the letter.

Furthermore, the model now features improved file system-based memory. It can retain and utilize important notes across long, multi-session work. This persistent context allows it to move from Task A to Task B without needing a massive "context dump" at the start of every new session, effectively making it more efficient over long project lifecycles.
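The article does not describe how this memory is implemented, so the following is only an illustration of the general pattern: notes written to a file in one session and read back in the next. The file name, the JSON format, and both helper functions are assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path
from typing import List


def load_notes(path: Path) -> List[str]:
    """Return notes saved by earlier sessions, or an empty list on first run."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def append_note(path: Path, note: str) -> None:
    """Add one note and write the full list back to disk."""
    notes = load_notes(path)
    notes.append(note)
    path.write_text(json.dumps(notes, indent=2), encoding="utf-8")


# Demo in a temporary directory so nothing is left behind.
with tempfile.TemporaryDirectory() as workdir:
    notes_file = Path(workdir) / "agent_notes.json"  # hypothetical file name
    # "Session 1": record what was learned while working on Task A.
    append_note(notes_file, "Task A: migrations live in db/migrate")
    append_note(notes_file, "Task A: tests need REDIS_URL set")
    # "Session 2": start Task B by reading the notes instead of a context dump.
    carried_over = load_notes(notes_file)
    print(carried_over)
```

The point of the pattern is that the second session starts from a few short notes rather than a replay of the whole first session, which keeps the opening context small.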

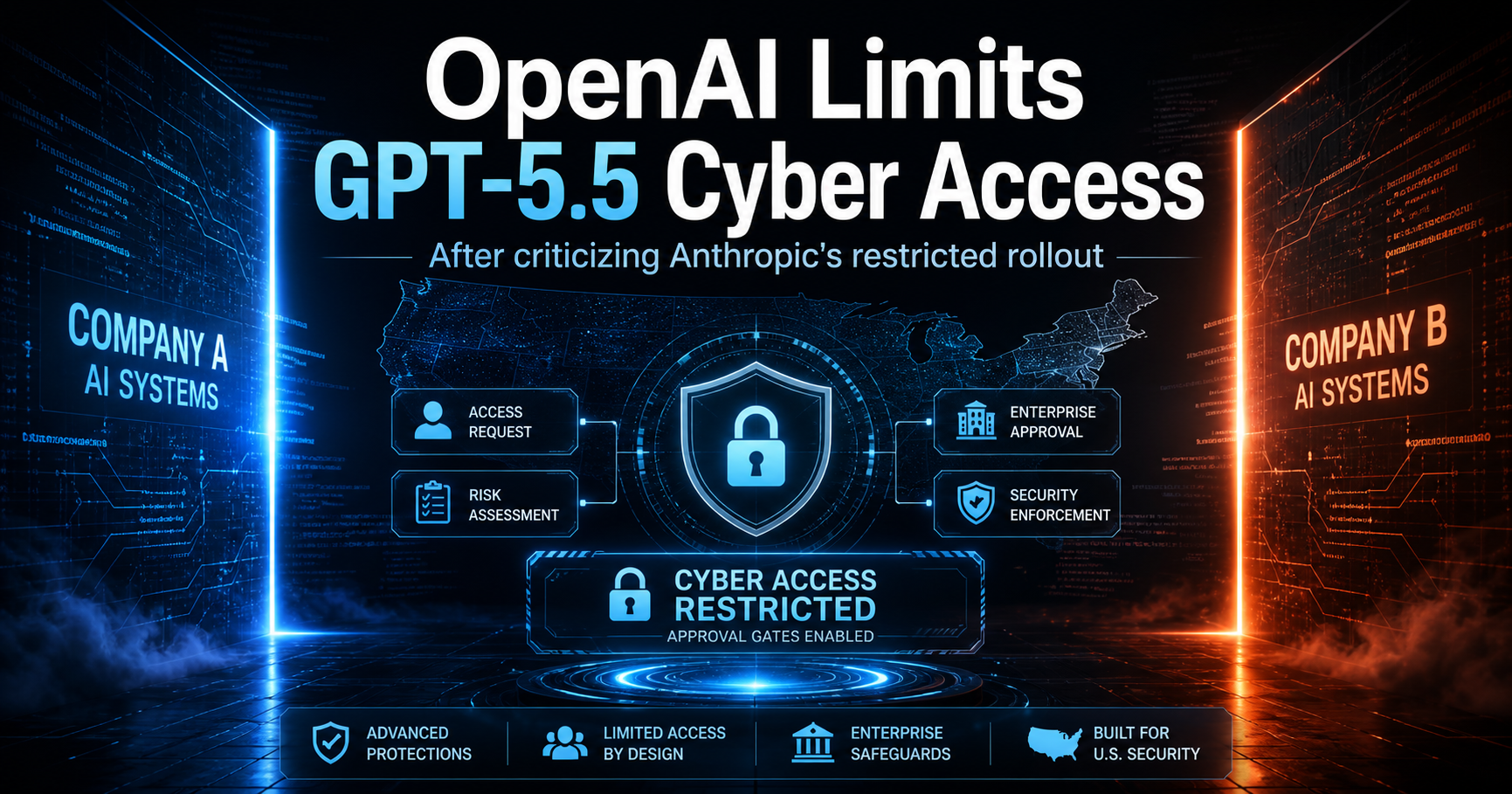

Redefining Security: The Cyber Verification Program

Perhaps the most significant policy change accompanying this release is the introduction of automated cybersecurity safeguards. Anthropic has long been vocal about the risks AI poses to global security, specifically regarding the creation of malware or the identification of zero-day vulnerabilities.

Opus 4.7 is the "guinea pig" for a new era of safety. It is the first model to ship with safeguards that automatically detect and block requests indicating high-risk cybersecurity uses. This is part of a larger strategy to prepare for the upcoming "Mythos-class" models—the next generation of hyper-capable AI.

Navigating the Ethics of "Cyber"

Anthropic faces a delicate balance: how do you stop bad actors from using AI for hacking while allowing security professionals to use it for defense? Their answer is the Cyber Verification Program.

- The Guardrails: For the general public, Opus 4.7 will refuse requests that look like malicious hacking attempts.

- The Program: Security researchers, penetration testers, and "red-teamers" can apply to join a verified program. Once vetted, these professionals can use the model’s advanced reasoning for legitimate vulnerability research and defense-building.

This structured access model suggests that Anthropic is moving away from a "one-size-fits-all" safety filter and toward a more nuanced, identity-based permission system for high-risk capabilities.

Safety and Alignment: A Candid Assessment

Anthropic’s system card for Opus 4.7 gives a candid account of the model's behavior. Generally, it shows a similar safety profile to the 4.6 version, with low rates of "sycophancy" (the tendency to tell the user what they want to hear) and deception.

However, the report isn't all praise. Anthropic notes that while the model is improved in honesty and in resistance to prompt injection attacks, it is "modestly weaker" in some areas. Specifically, it tends to give overly detailed harm-reduction advice about controlled substances, a reminder that "alignment" is an ongoing, imperfect process. Anthropic’s own conclusion is refreshingly honest: the model is "largely well-aligned and trustworthy, though not fully ideal in its behavior."

Technical Migration: What Developers Need to Know

For teams planning to migrate from Opus 4.6 to 4.7, there are two critical technical shifts to account for:

1. Tokenization Efficiency

Opus 4.7 uses an updated tokenizer, so the same piece of text can map to roughly 1.0 to 1.35 times as many tokens as before. Taken alone, that looks like a price hike. Anthropic claims, however, that because the model is more efficient at solving problems (especially in coding), it uses fewer tokens overall to reach a successful result.
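To make the trade-off concrete, the sketch below works out what the quoted multiplier range means for a fixed amount of text, and how much less text a task would have to process for the total to break even. The function names and the 100,000-token baseline are hypothetical; only the 1.0 to 1.35 range comes from the article.

```python
TOKEN_INFLATION_RANGE = (1.0, 1.35)  # multiplier range quoted in this article


def inflated_tokens(old_token_count: int, factor: float) -> int:
    """Token count for the same text under the new tokenizer."""
    return round(old_token_count * factor)


def break_even_reduction(factor: float) -> float:
    """Fraction by which text volume must shrink to offset the multiplier."""
    return 1.0 - 1.0 / factor


low, high = TOKEN_INFLATION_RANGE
print(inflated_tokens(100_000, low), inflated_tokens(100_000, high))  # 100000 135000
print(f"{break_even_reduction(high):.1%}")  # 25.9%
```

In the worst case of the quoted range, a task would need to get by on about 26% less text (fewer retries, shorter transcripts) for the token count to come out even with the old tokenizer.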

2. "Thinking" and Output Volume

The model now engages in more "internal thought," particularly during later turns in an agentic sequence. This produces more output tokens, as the model reasons through the problem before answering in order to improve reliability. According to Anthropic, this extra "thought" significantly improves the success rate on hard problems in coding evaluations, which is the justification for the extra token expenditure.
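One way to reason about whether the extra output is worth paying for is cost per solved task rather than cost per attempt. The sketch below assumes failed attempts are simply retried and that attempts succeed independently, so the expected number of attempts is 1 divided by the success rate. All numbers are hypothetical and chosen only to show the shape of the trade-off; they are not measurements of any model.

```python
def expected_cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend to get one success when failures are retried independently."""
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical numbers, for illustration only.
terse = expected_cost_per_success(cost_per_attempt=1.00, success_rate=0.50)
thorough = expected_cost_per_success(cost_per_attempt=1.50, success_rate=0.90)
print(terse, round(thorough, 2))  # 2.0 1.67
```

Under these made-up figures, the attempt that costs 50% more is still cheaper per solved task, because it fails far less often. Whether that holds for a given workload depends on the real success rates, which have to be measured.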

Final Thoughts: The Human Perspective

As a writer who has followed the evolution of the Claude series, what stands out about Opus 4.7 isn't just the raw power—it’s the predictability. We are moving out of the "magic trick" phase of AI, where we are surprised when the model gets something right. We are entering the "utility" phase, where we expect the model to act as a reliable, literal, and secure component of a larger machine.

Anthropic is clearly positioning itself as the "adult in the room," focusing on safeguards and literal instruction following over the flashy, often erratic behavior of its competitors. For the software engineering world, Opus 4.7 isn't just a new version; it's a more stable foundation for the autonomous future.
