Akamai Surges on Major LLM Deal as Cloudflare Restructures for the AI Era

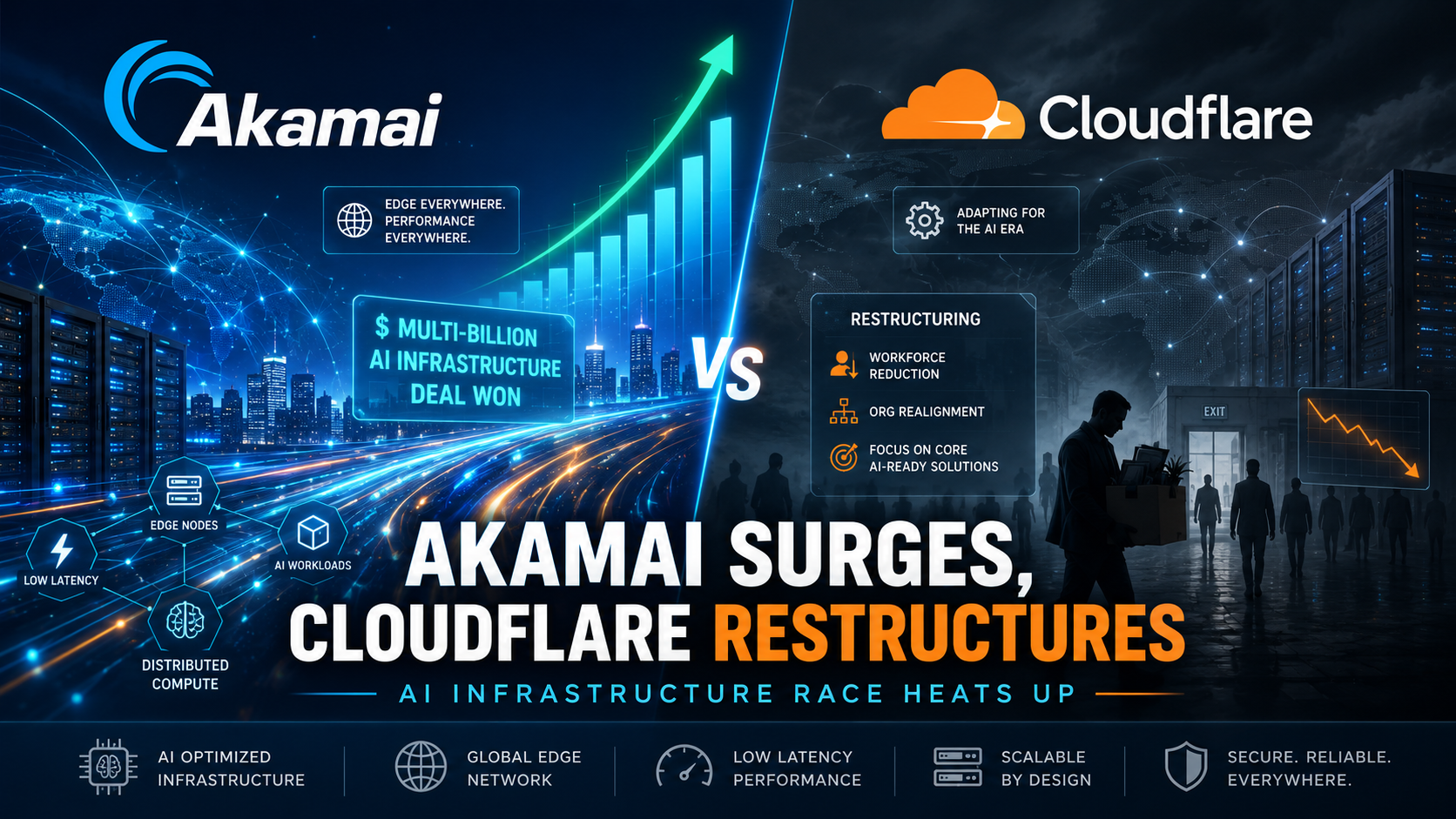

The artificial intelligence infrastructure race is reshaping the cloud, content delivery, and edge computing markets faster than many investors expected. This week, two major internet infrastructure companies, Akamai and Cloudflare, delivered sharply different signals to the market. Akamai gained momentum after announcing a historic seven-year, $1.8 billion agreement with a leading large language model provider, while Cloudflare faced pressure after revealing a major workforce reduction tied to its shift toward the agentic AI era.

The contrast was striking. Akamai’s announcement highlighted the growing demand for distributed cloud infrastructure capable of supporting AI workloads at scale. Cloudflare’s update, meanwhile, showed how even fast-growing technology companies are reorganizing aggressively to adapt to artificial intelligence. Together, the two stories show that AI is no longer just a software trend: it is becoming a major force in cloud architecture, data center economics, enterprise strategy, and public market performance.

Akamai Wins Its Largest Deal Ever

Akamai, long known as a major player in content delivery networks and edge infrastructure, announced a seven-year, $1.8 billion deal with a leading LLM provider. Bloomberg identified the customer as Anthropic, one of the most prominent companies in the frontier AI model market. Akamai CEO Tom Leighton described the agreement as the largest deal in the company’s history.

The announcement came after another major deal in the previous quarter, when an unidentified frontier-model developer reportedly signed a $200 million agreement with Akamai. These contracts suggest that Akamai is becoming more than a traditional CDN provider. It is positioning itself as an AI infrastructure partner for companies that need scalable, low-latency, distributed compute capacity.

During Akamai’s first quarter earnings call, Leighton explained that AI leaders are choosing Akamai because their workloads require scale, performance, and reliability. This is especially important for large language model inference, where speed, availability, and geographic reach can directly affect user experience and operating costs.

Akamai’s global footprint is one of its strongest advantages. The company operates across 4,300 locations in 700 cities and 130 countries. That distributed network gives Akamai the ability to place compute resources closer to users and enterprise customers. In the AI era, that edge advantage may become increasingly valuable.

Why Distributed AI Infrastructure Matters

The AI boom has created intense demand for compute power. Training large models requires massive centralized data centers, advanced GPUs, high-bandwidth networking, and major energy resources. However, once models are deployed, inference becomes a different challenge. AI applications must respond quickly, reliably, and often from many geographic locations.

This is where distributed infrastructure can play a major role. Companies building AI assistants, coding tools, enterprise agents, search systems, and real-time automation platforms need more than raw compute. They need infrastructure that can serve millions of requests with low latency and high reliability.

Akamai’s strategy appears to be focused on this opportunity. Instead of competing only as a traditional cloud provider, the company is emphasizing distributed inference and edge compute. Its pitch is that AI workloads will not all run from a few centralized hyperscale regions. Many enterprise and consumer AI services will benefit from being closer to users, closer to data, and closer to real-time network traffic.
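The latency side of that pitch can be illustrated with a rough sketch. The Python below picks the nearest of three sites by great-circle distance and estimates a lower bound on network round-trip time. The site names and coordinates are hypothetical placeholders, not Akamai locations, and the 200 km per millisecond figure is the common approximation for the speed of light in optical fiber. Real request routing also depends on network paths, load, and available capacity, so this is an illustration of the idea rather than a description of any provider's system.

```python
import math

EARTH_RADIUS_KM = 6371.0
# Approximate speed of light in optical fiber (about two thirds of c).
FIBER_KM_PER_MS = 200.0

# Hypothetical sites for illustration only: name -> (latitude, longitude).
SITES = {
    "frankfurt": (50.11, 8.68),
    "singapore": (1.35, 103.82),
    "virginia": (38.95, -77.45),
}


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometers."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(distance_km):
    """Physical lower bound on round-trip time over fiber, in milliseconds."""
    return 2 * distance_km / FIBER_KM_PER_MS


def nearest_site(user_lat, user_lon, sites=SITES):
    """Return the name of the site closest to the user by distance."""
    return min(
        sites,
        key=lambda name: haversine_km(user_lat, user_lon, *sites[name]),
    )
```

For a user in Paris (roughly 48.86, 2.35), `nearest_site` selects the Frankfurt placeholder, and `min_round_trip_ms` applied to that distance gives a floor of a few milliseconds, compared with tens of milliseconds for a transatlantic hop.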

Leighton said this has been Akamai’s strategy all along. The company has been building toward a distributed inference platform and a distributed compute platform that can support enterprises across many industries. The latest $1.8 billion deal is evidence that this strategy is beginning to produce major commercial results.

Akamai Beats Hyperscalers and Neoclouds

One of the most notable parts of the deal is that Akamai reportedly won it against tough competition from hyperscalers and neocloud providers. Hyperscalers such as Amazon Web Services, Microsoft Azure, and Google Cloud dominate traditional cloud infrastructure. Neocloud companies, meanwhile, have grown rapidly by offering GPU-heavy infrastructure designed specifically for AI companies.

For Akamai to win a major LLM infrastructure contract in this competitive environment is significant. It suggests that some AI companies are looking beyond centralized cloud capacity. They may be searching for more flexible infrastructure models that combine global reach, reliability, and predictable long-term capacity.

Akamai’s ability to manage complex distributed systems likely played a key role. Large-scale AI services require not only compute but also orchestration, security, routing, traffic management, and resilience. These are areas where Akamai has decades of experience.

The company’s low-latency network may also have helped secure the agreement. In many AI products, even small delays can damage the user experience. As AI becomes more interactive, conversational, and agentic, fast response times will become a core infrastructure requirement.

Capital Expenditure and Supply Chain Questions

The size of the deal naturally raised questions about whether Akamai would need to increase capital expenditures. AI infrastructure has become expensive, especially because of supply chain constraints in data center space, memory components, GPU availability, and power infrastructure.

Akamai Executive Vice President and CFO Ed McGowan said a major increase in capital expenditure was not expected. He explained that Akamai had already prepared its supply chain and expects to receive the goods needed to deliver services under the contract within the next 12 months.

McGowan also noted that the company has contract mechanisms to handle potential future price increases. This is important because AI infrastructure costs can change quickly. Memory pricing, hardware availability, and data center capacity may all fluctuate as demand rises.

The deal is consumption-based over seven years. That means Akamai will begin recognizing revenue as it ramps the necessary capacity and the customer begins using the service. McGowan said revenue from the contract is expected to begin later this year.
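To see why a consumption-based contract differs from a flat subscription, consider the sketch below. It assumes, purely for illustration, that the full $1.8 billion is eventually consumed over the seven years. The yearly usage shares are invented for this example and do not come from Akamai; the only figures taken from the announcement are the headline value and the term.

```python
CONTRACT_VALUE_USD = 1_800_000_000  # headline value from the announcement
TERM_YEARS = 7                      # contract term from the announcement

# Hypothetical share of total usage consumed in each year (must sum to 1).
# A slow start reflects capacity still being ramped; these are NOT real figures.
HYPOTHETICAL_USAGE_SHARES = [0.05, 0.10, 0.15, 0.17, 0.17, 0.18, 0.18]


def straight_line_average(total_usd, years):
    """Average annual value if revenue were spread evenly across the term."""
    return total_usd / years


def recognized_by_year(total_usd, usage_shares):
    """Revenue recognized each year when it follows actual consumption."""
    if abs(sum(usage_shares) - 1.0) > 1e-9:
        raise ValueError("usage shares must sum to 1")
    return [total_usd * share for share in usage_shares]


average = straight_line_average(CONTRACT_VALUE_USD, TERM_YEARS)
schedule = recognized_by_year(CONTRACT_VALUE_USD, HYPOTHETICAL_USAGE_SHARES)
```

The even-spread average works out to roughly $257 million a year, but under a ramped schedule like the hypothetical one above, the first year contributes far less than that average and the later years contribute more.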

For investors, this structure is important. It means the deal may not immediately appear as full revenue, but it gives Akamai a long-term growth opportunity backed by a major AI customer. It also gives the company a stronger narrative in the AI infrastructure market.

Cloudflare Announces Major Workforce Cuts

While Akamai was celebrating a historic AI infrastructure win, Cloudflare was delivering difficult news to employees. The company announced plans to cut approximately 1,100 jobs, equal to around 20 percent of its workforce.

Cloudflare co-founders Matthew Prince and Michelle Zatlyn said the layoffs were not primarily about cutting costs. Instead, they described the move as part of a broader effort to rebuild the company for the agentic AI era. In their message, they said Cloudflare must be intentional about how it architects the company to deliver more value to customers and continue its mission of helping build a better internet.

This language reflects a wider trend across the technology industry. Many companies are restructuring teams, changing hiring priorities, and reallocating budgets toward AI products, automation, developer platforms, and infrastructure. However, workforce cuts of this size remain painful and controversial, especially when companies are still growing revenue.

Cloudflare reported first quarter revenue of $639.8 million, up 34 percent year over year. Despite that growth, the company posted a net loss of $22.9 million. It also expects to pay up to $150 million in severance and benefit payments related to the layoffs.
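A few back-of-the-envelope figures follow from the numbers above. The sketch below derives them in Python; because the inputs are rounded or approximate ("approximately 1,100", "around 20 percent", "up to $150 million"), the outputs are rough implied values, not reported figures.

```python
JOBS_CUT = 1_100                  # approximate, as announced
WORKFORCE_SHARE = 0.20            # "around 20 percent"
MAX_SEVERANCE_USD = 150_000_000   # "up to $150 million"
Q1_REVENUE_USD_M = 639.8          # first quarter revenue, in millions
YOY_GROWTH = 0.34                 # 34 percent year over year
NET_LOSS_USD_M = 22.9             # net loss, in millions

# Implied total headcount before the cuts.
implied_headcount = JOBS_CUT / WORKFORCE_SHARE

# Upper bound on average severance and benefits per affected employee.
max_severance_per_person = MAX_SEVERANCE_USD / JOBS_CUT

# Implied revenue in the same quarter a year earlier, in millions.
implied_prior_year_revenue_m = Q1_REVENUE_USD_M / (1 + YOY_GROWTH)

# Net loss as a fraction of quarterly revenue.
net_loss_margin = NET_LOSS_USD_M / Q1_REVENUE_USD_M
```

On these inputs, the implied pre-layoff workforce is about 5,500 people, the severance ceiling averages roughly $136,000 per affected employee, and the quarterly net loss is under 4 percent of revenue.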

Market Reaction: Akamai Up, Cloudflare Down

Investors reacted sharply to the two announcements. Akamai’s stock price surged 26 percent on Friday, reflecting optimism around the $1.8 billion LLM deal and the company’s growing AI infrastructure opportunity. Cloudflare’s stock dropped 23 percent, as investors weighed the implications of its restructuring, severance costs, and AI-era repositioning.

Even so, Cloudflare remains the far larger company: its market capitalization of more than $69 billion is more than three times Akamai’s. That gap suggests the market continues to see significant long-term potential in Cloudflare, even after the negative reaction.

The difference is that Akamai gave investors a concrete AI infrastructure contract with long-term revenue potential, while Cloudflare gave them a restructuring story. Both may prove important, but the market clearly rewarded the company with a visible AI deal and punished the one announcing layoffs.

What This Means for the AI Cloud Market

The Akamai and Cloudflare stories show how artificial intelligence is changing the competitive landscape for internet infrastructure. AI companies need compute, but they also need scale, latency optimization, security, reliability, and global distribution. This creates opportunities for companies that were not always seen as central players in the AI boom.

Akamai’s deal suggests that distributed cloud and edge platforms may become increasingly important for AI inference. If AI agents, chatbots, enterprise copilots, and real-time automation systems become more common, the demand for low-latency infrastructure could grow significantly.

Cloudflare’s restructuring shows another side of the same trend. Companies that want to remain competitive in the AI era may need to redesign their organizations, products, and operating models. For Cloudflare, the challenge is to convince investors, customers, and employees that its AI-focused restructuring will produce stronger long-term growth.

Conclusion

Akamai’s historic $1.8 billion LLM deal and Cloudflare’s major workforce reduction mark a turning point in the AI infrastructure race. Akamai is gaining attention as a serious distributed AI compute provider, while Cloudflare is reorganizing to align itself with the future of agentic AI.

The market response was immediate and dramatic. Akamai surged as investors saw direct evidence of AI-driven demand. Cloudflare declined as investors reacted to layoffs, restructuring costs, and uncertainty around execution.

The bigger lesson is clear: artificial intelligence is no longer only about models and applications. It is also about the infrastructure that makes those models usable at global scale. Companies that can deliver reliable, low-latency, distributed compute may become essential players in the next phase of AI growth.

For Akamai, this week was a major validation of its AI strategy. For Cloudflare, it was a difficult but potentially strategic reset. For the broader technology sector, it was another reminder that the AI era is rewriting the rules of cloud competition, workforce planning, and investor expectations.
