The Great Reversal: How Automated AI Discovery is Ending the Era of the Attacker’s Advantage

For decades, the fundamental math of cybersecurity has been stubbornly lopsided. The "Defender’s Dilemma" dictated that an enterprise had to protect every entry point perfectly, while an attacker only had to find a single flaw. This imbalance created an operational doctrine in which total security was viewed as an expensive myth. Instead, firms aimed for "defence in depth": layering controls so that an attack became so costly and time-consuming that only nation-states or elite criminal syndicates would bother.
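The lopsided math can be made concrete with a deliberately simplified model. The sketch below assumes each of n entry points independently has the same small probability p of an exploitable flaw (real flaws are neither independent nor uniform, and `breach_probability` is a hypothetical helper). The defender needs all n entry points to be clean, which happens with probability (1 - p)^n, while the attacker needs just one failure.

```python
def breach_probability(entry_points: int, p_flaw: float) -> float:
    """Chance that at least one of `entry_points` independent entry points is exploitable.

    Assumes (hypothetically) every entry point has the same flaw probability `p_flaw`.
    """
    # The defender must get every entry point right: (1 - p)^n.
    p_all_secure = (1.0 - p_flaw) ** entry_points
    # The attacker needs only one failure among them.
    return 1.0 - p_all_secure


if __name__ == "__main__":
    for n in (1, 10, 100, 500):
        print(f"{n:>4} entry points -> {breach_probability(n, 0.01):.1%} chance of a way in")
```

With a hypothetical p of 1%, the chance that at least one entry point is exploitable climbs from 1% for a single entry point to about 63% at 100 entry points and above 99% at 500.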

However, we are now witnessing a seismic shift in this landscape. The emergence of automated AI vulnerability discovery is not just moving the needle; it is reversing the cost-benefit analysis that has long favoured malicious actors.

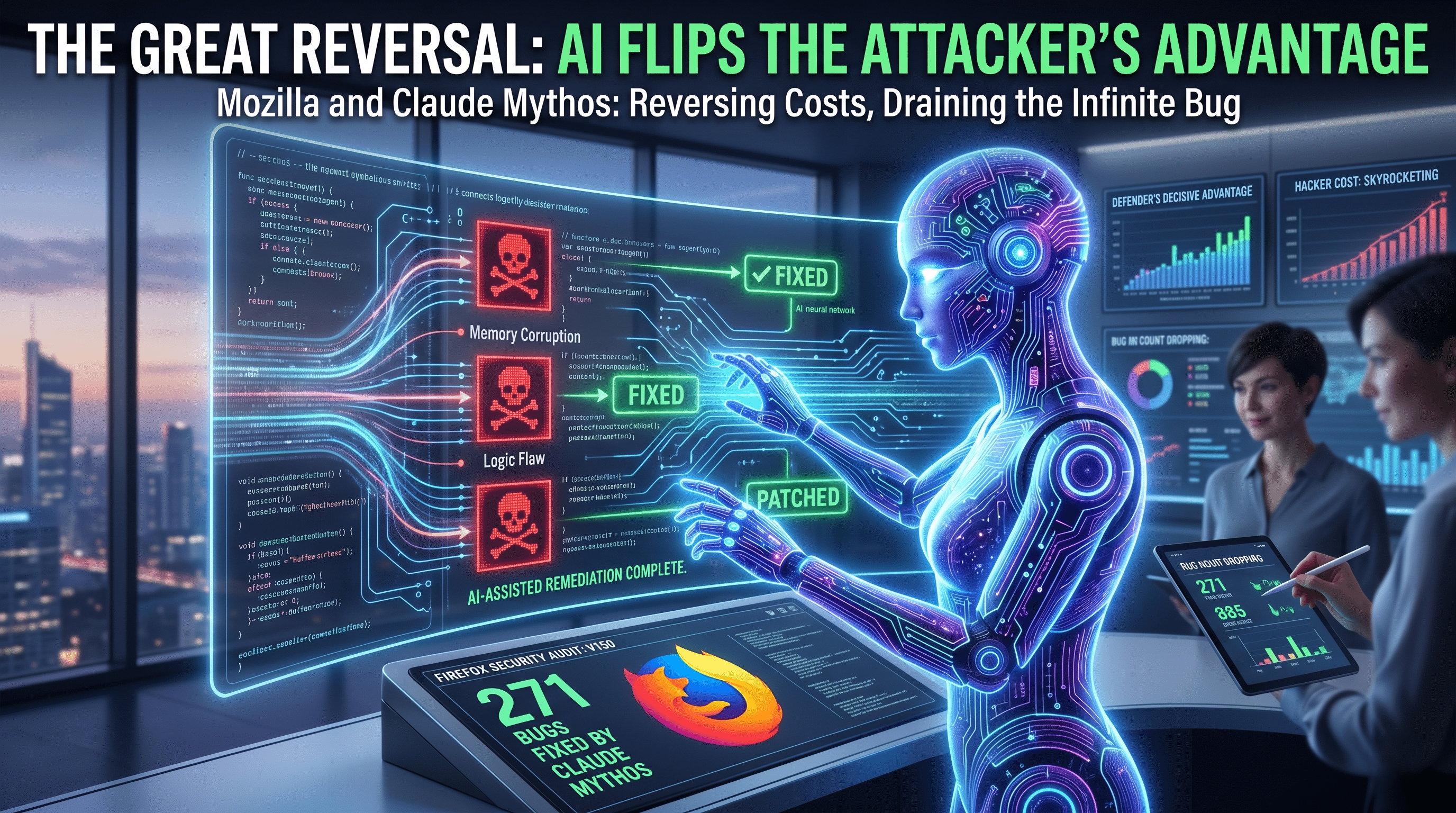

The Firefox Benchmark: A New Standard for Digital Fortification

The most compelling evidence for this shift comes from the recent collaboration between the Mozilla Firefox engineering team and Anthropic. In what is being hailed as a landmark evaluation, the Firefox team used Claude Mythos Preview to audit the code of its latest releases.

The results were staggering. While preparing the Firefox 150 release, the team identified and remediated 271 vulnerabilities. This followed an earlier integration of Claude Opus 4.6 for version 148, which had already produced 22 high-priority security fixes.

For perspective, uncovering more than 270 vulnerabilities in a single release cycle would traditionally require thousands of man-hours from a dedicated "Red Team" of elite researchers. By leveraging automated reasoning, Mozilla condensed months of human labor into a single, high-output audit. This isn't just an incremental improvement; it is the industrialization of vulnerability discovery.

Beyond Fuzzing: The Rise of Automated Reasoning

To understand why this is a breakthrough, we must look at traditional testing methods. For years, fuzzing (feeding a program large volumes of random or randomly mutated input to see where it breaks) has been the gold standard for automated testing. While effective, fuzzing is essentially a "brute force" method: it struggles to reach deep logic flaws or bugs buried in complex state machines, where only a narrow, specific sequence of inputs triggers the failure.
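To illustrate the limitation, here is a deliberately naive random fuzzer run against a hypothetical `parse_record` function with two planted bugs. It is a sketch, not how production tools work: coverage-guided fuzzers such as libFuzzer and AFL++ use feedback from the running program and can often get past simple comparisons like the one here. Still, it shows why a bug gated behind a specific condition is hard to hit by chance.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical parser with two planted bugs of very different depth."""
    # Shallow bug: a zero first byte is used as a divisor (about 1 in 256 random inputs).
    scale = 100 // data[0]
    # Deep bug: only reachable behind a six-byte magic header (roughly 1 in 2**48).
    if data[:6] == b"MAGIC!":
        raise RuntimeError("simulated memory corruption")
    return scale


def fuzz(target, trials: int, seed: int = 0) -> set[str]:
    """Feed random byte strings to `target` and collect the exception types it raises."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    crashes: set[str] = set()
    for _ in range(trials):
        size = rng.randint(1, 16)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except Exception as exc:  # any uncaught exception counts as a "crash"
            crashes.add(type(exc).__name__)
    return crashes


if __name__ == "__main__":
    print(fuzz(parse_record, trials=10_000))
```

Over 10,000 random inputs, the division-by-zero bug surfaces almost immediately, while the bug behind the six-byte header is essentially never reached. Logic flaws in real code are usually gated behind far richer conditions than a fixed header.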

To catch the most sophisticated bugs, firms have always relied on elite human researchers who can "reason" through source code, understanding the developer's intent and finding where the logic fails. This expertise is both incredibly rare and prohibitively expensive.

The deployment of Claude Mythos Preview marks the point at which AI achieved parity with these elite human researchers. The Firefox team reported a startling finding: there was no category of flaw, and no level of complexity, that a human could identify which the model could not also grasp. The "human discovery constraint" has effectively been removed. Computers, which were incapable of this level of abstract reasoning just eighteen months ago, are now operating at a level that equals the world’s top security minds.

Navigating the Costs of Frontier AI Integration

While the benefits are clear, the transition to an AI-augmented security posture is not without its hurdles. Integrating frontier models into a Continuous Integration/Continuous Deployment (CI/CD) pipeline requires significant capital expenditure.

1. The Compute Tax

Running millions of tokens of proprietary code through a model like Mythos Preview is a heavy lift. Enterprises are no longer just paying for software licenses; they are financing massive compute cycles. To make this viable, technology leaders must establish secure retrieval environments, such as a self-hosted vector database. Because a codebase of this size far exceeds any model's context window, these environments index the code and feed the model the relevant, interconnected pieces as it works, without leaking proprietary corporate logic to the public cloud.
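The retrieval idea can be sketched in a few lines, under loudly stated assumptions: identifier counts stand in for a learned embedding model, an in-memory list stands in for a self-hosted vector database, and `LocalIndex`, `embed` and `cosine` are hypothetical names, as are the file paths in the example. The point is the shape of the workflow: split the code into chunks, index them locally, and pull only the most relevant chunks into the model's context for a given question.

```python
import math
import re
from collections import Counter

IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag of lower-cased identifiers."""
    return Counter(tok.lower() for tok in IDENTIFIER.findall(text))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalIndex:
    """In-memory stand-in for a self-hosted vector store: code never leaves the process."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, str, Counter]] = []  # (location, chunk text, vector)

    def add_file(self, path: str, source: str, lines_per_chunk: int = 40) -> None:
        """Split a file into fixed-size line chunks and index each one."""
        lines = source.splitlines()
        for start in range(0, len(lines), lines_per_chunk):
            chunk = "\n".join(lines[start:start + lines_per_chunk])
            self.entries.append((f"{path}:{start + 1}", chunk, embed(chunk)))

    def search(self, query: str, k: int = 3) -> list[str]:
        """Return the locations of the k chunks most similar to the query."""
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[2]), reverse=True)
        return [location for location, _, _ in ranked[:k]]


if __name__ == "__main__":
    index = LocalIndex()
    index.add_file("net/parser.cpp", "int parse_header(char *buf) {\n  memcpy(dst, buf, len);\n}")
    index.add_file("ui/theme.cpp", "void set_color(int rgb) {\n  theme.color = rgb;\n}")
    # Only the best-matching chunk would be placed in the model's context window.
    print(index.search("memcpy buf len", k=1))
```

A production system would swap in a real embedding model and chunk along function or class boundaries rather than fixed line counts; the fixed 40-line window here is only a simplifying choice.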

2. The Hallucination Guardrail

An AI that generates "false positives"—reporting vulnerabilities that don't actually exist—is a liability. It wastes the time of expensive senior engineers who must verify every claim. Therefore, a modern security pipeline must be multi-layered. Model outputs must be cross-referenced against traditional static analysis tools and real-world fuzzing results. Only when the AI’s reasoning is validated by these "sanity checks" does it move to a human's desk for remediation.
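A minimal sketch of that corroboration step follows. The `Finding` type, the `triage` function, the file paths and the matching rule (same file, within a few lines, and for static-analysis hits the same weakness class, such as a CWE identifier) are all assumptions made for illustration; a real pipeline would ingest the actual report formats of its static analyser and fuzzer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One reported issue: file, line, and a weakness class such as a CWE ID."""
    path: str
    line: int
    weakness: str


def triage(
    model_findings: list[Finding],
    static_hits: list[Finding],
    fuzz_crash_sites: list[tuple[str, int]],
    line_tolerance: int = 3,
) -> tuple[list[Finding], list[Finding]]:
    """Split model findings into corroborated (forward to engineers) and unverified."""

    def near(f: Finding, path: str, line: int) -> bool:
        return f.path == path and abs(f.line - line) <= line_tolerance

    confirmed: list[Finding] = []
    unverified: list[Finding] = []
    for f in model_findings:
        # Layer 1: a static analyser flagged the same weakness class nearby.
        by_static = any(near(f, s.path, s.line) and s.weakness == f.weakness for s in static_hits)
        # Layer 2: a fuzzer actually crashed the program near the reported line.
        by_fuzzer = any(near(f, path, line) for path, line in fuzz_crash_sites)
        (confirmed if by_static or by_fuzzer else unverified).append(f)
    return confirmed, unverified


if __name__ == "__main__":
    model = [Finding("dom/node.cpp", 120, "CWE-416"), Finding("net/url.cpp", 40, "CWE-787")]
    static = [Finding("dom/node.cpp", 118, "CWE-416")]
    confirmed, unverified = triage(model, static, fuzz_crash_sites=[("gfx/blit.cpp", 9)])
    print("to engineers:", confirmed)
    print("held back:", unverified)
```

In this sketch, uncorroborated findings are returned separately rather than discarded, so they can be re-examined later without landing on an engineer's desk in the meantime.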

The Legacy Code Lifeline: C++ vs. Rust

In recent years, the industry consensus has been that the surest way to achieve true security is to migrate to memory-safe languages like Rust. While this is a noble goal, it is financially and operationally out of reach for most established enterprises in the near term. Organizations are sitting on decades of legacy C++ code that runs the world’s most critical infrastructure.

Replacing this code entirely would cost billions and take years, if not decades. Automated AI reasoning offers a "third way." Instead of a total system overhaul, firms can use AI to conduct exhaustive audits of legacy code, patching holes that were previously invisible. This allows businesses to secure their existing assets without the "staggering expense" of a complete rewrite, providing a massive ROI for security budgets.

From Best Practice to Corporate Negligence

The regulatory climate is shifting alongside the technology. In an era where a single data breach can lead to hundreds of millions of dollars in fines and lost market cap, "we did our best" is no longer a valid legal defense.

As these AI tools become more accessible, the baseline for "reasonable care" in software development is being redefined. If a model like Claude Mythos Preview can reliably find a logic flaw in a matter of seconds, failing to use such a tool could soon be classified as corporate negligence. We are moving toward a future where automated audits are not a luxury for tech giants like Mozilla, but a standard requirement for any firm handling sensitive data.

Conclusion: The End of the Infinite Bug

Perhaps the most encouraging takeaway from the Firefox evaluation is the realization that software defects are finite.

While the initial wave of AI-discovered vulnerabilities can feel overwhelming (a "terrifying" influx of data, as some researchers put it), it represents the draining of a swamp. Applications like Firefox, while complex, are modular and designed with human logic; they are not arbitrarily complex, so the number of defects they contain is finite. By committing to the remediation work now, security teams can finally move from a reactive "whack-a-mole" strategy to a proactive one that gives defenders a decisive advantage.
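The "draining a swamp" intuition can be checked with a toy model. Assume, purely for illustration, that each release's audit finds and fixes a fixed fraction of the latent bugs present, while new code adds a fixed number of fresh ones. `simulate_backlog` and every parameter value below are hypothetical, not Firefox data.

```python
def simulate_backlog(initial: float, introduced_per_release: float,
                     discovery_rate: float, releases: int) -> list[float]:
    """Toy model of a latent-vulnerability backlog across releases.

    Each release, audits find and fix `discovery_rate` of the bugs already
    present, and new code adds `introduced_per_release` fresh ones.
    """
    if not 0.0 < discovery_rate <= 1.0:
        raise ValueError("discovery_rate must be in (0, 1]")
    backlog = initial
    history = []
    for _ in range(releases):
        backlog = backlog * (1.0 - discovery_rate) + introduced_per_release
        history.append(backlog)
    return history


if __name__ == "__main__":
    trajectory = simulate_backlog(1000, introduced_per_release=10,
                                  discovery_rate=0.5, releases=12)
    print([round(b) for b in trajectory])
    # The backlog approaches introduced_per_release / discovery_rate = 20.
```

With these illustrative numbers, a backlog of 1,000 falls to about 81 after four releases and then levels off near 20, the ratio of new bugs per release to the discovery rate. Under this model, once the historical backlog is gone, ongoing effort scales with the rate at which new bugs are written rather than with decades of accumulated debt.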

The industry is entering a "clean-up" phase. Once the backlog of historical vulnerabilities is cleared through automated discovery, the cost of maintaining a secure environment will drop significantly. For the first time in the history of the internet, the shield is becoming stronger—and cheaper—than the sword.
