5 Verticals AI Can’t Replace: The Future of the Web in 2026

The current landscape of software development is undergoing a seismic shift. As we move through 2026, the "build layer" (the once-guarded territory of writing and deploying code) is effectively collapsing. With AI app builders like Lovable reaching $300 million in ARR and generating over 100,000 new projects daily, the barrier to entry for creating software has all but vanished.

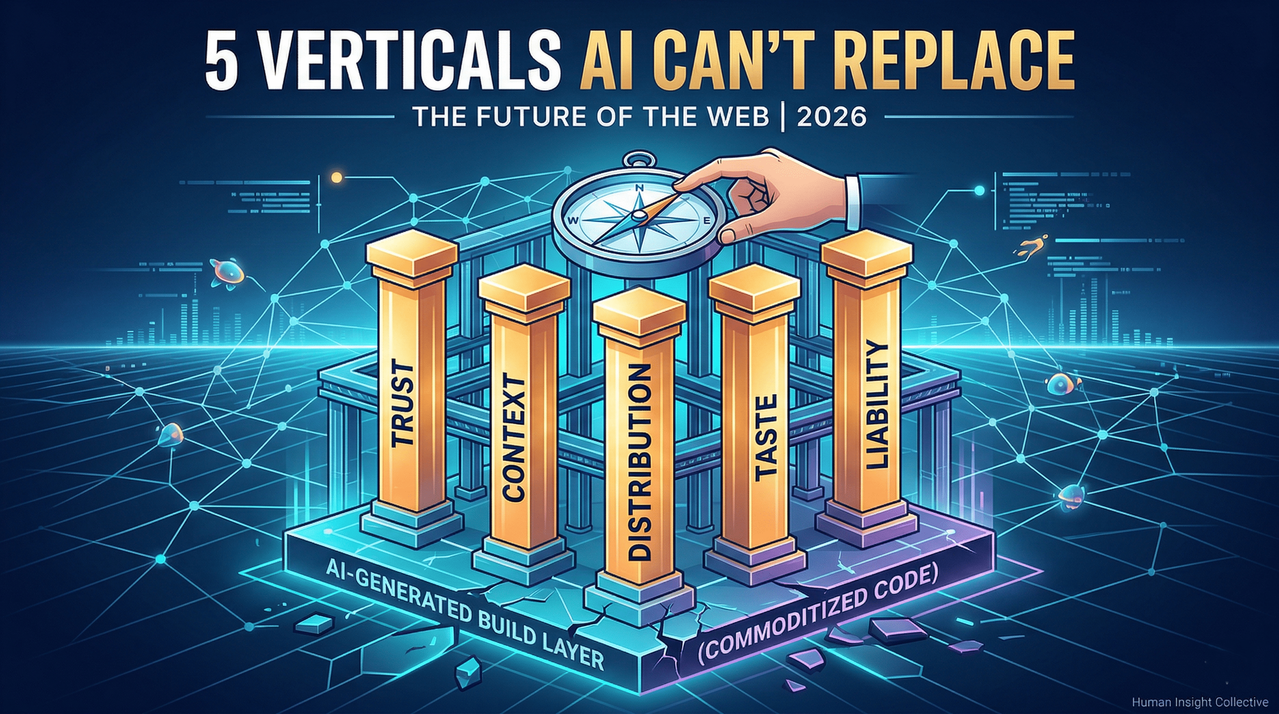

However, a critical question remains for founders and developers: if anyone can generate an app in seconds, what remains worth building? The answer lies in five durable verticals that AI, by its very nature, cannot replicate. These pillars are the foundation of the emerging agentic economy.

The Collapse of the Build Layer

The frenzy surrounding platforms like Lovable, Replit, and Bolt suggests a gold rush, but a strategic look beneath the surface reveals a "middleware trap." Most of these tools are functionally thin wrappers around base models like Claude, GPT-4, or Gemini. Their "moat" is only as deep as the time it takes to replicate a UI—which, in the age of AI-assisted coding, is roughly a week.

The survivors of this era aren't those who train better models; they are the companies that own something structural.

- Replit, unlike the pure wrappers, survives because it owns the runtime: the environment where code actually lives.

- Vercel survives because it owns the infrastructure that hosts production apps for the world’s biggest brands.

- Notion survives because it owns the knowledge graph of 100 million users.

For the "little guys" building on the web, the goal is to find a niche that the model makers (OpenAI, Anthropic, Google) won't simply absorb in their next update.

The Five Verticals of Value

To build a durable business in 2026, you must align with one of the following five verticals. These are not product categories; they are layers of value that persist regardless of how intelligent LLMs become.

1. The Trust Layer (Verification and Accountability)

The web is currently being flooded with AI-generated content, storefronts, and services. Many are indistinguishable from one another, and many are actively malicious. When a professional-looking checkout page can be generated in three seconds, "looking legitimate" no longer equals "being serious."

The companies that become the verification layer will capture immense value. This is why Stripe and Shopify are stronger than ever: a Stripe checkout or a Shopify storefront isn't just a technical feature; it is a trust signal. In an agentic economy, where your AI agent autonomously books flights or signs contracts, the trust layer is the only thing standing between you and a universe of AI-generated scams. If an agent cannot verify a service, it won't transact with it.
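The "verify before transacting" rule can be sketched as a simple gate. This is a minimal illustration, not a real protocol: the `Service` type, the attestation labels, and the `can_transact` helper are all hypothetical names invented for this example, and a real agent would need to check cryptographically signed attestations rather than plain strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    """A merchant or API the agent is considering (hypothetical shape)."""
    name: str
    attestations: frozenset = frozenset()


# Hypothetical attestation labels an agent's operator might require.
REQUIRED_ATTESTATIONS = frozenset(
    {"verified_payment_processor", "verified_business_identity"}
)


def can_transact(service, required=REQUIRED_ATTESTATIONS):
    """Return True only if the service carries every required attestation."""
    return required <= service.attestations


def missing_attestations(service, required=REQUIRED_ATTESTATIONS):
    """List the attestations the service still lacks, sorted for stable output."""
    return sorted(required - service.attestations)
```

The gate fails closed: a service with no attestations, or only some of them, is refused, which mirrors the point that looking legitimate is no longer enough.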

2. The Context Layer (Data Gravity)

AI is a general-purpose tool. To be useful, it requires specific, unique data: your company’s internal records, your medical history, or your meeting notes from last Tuesday.

Companies like Salesforce, Epic, and Snowflake own "data gravity." An agent without context is just a chatbot, but an agent with your specific context becomes a dependable junior employee. The authoritative stores of context—and the permissioning layers that govern them—act as the choke points of the modern internet.
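To make the "permissioning layer" idea concrete, here is a small sketch of a context store that releases a record to an agent only when the agent holds the matching scope. Everything here is an assumption for illustration: `ContextRecord`, `ContextStore`, and scope strings such as `notes:read` are hypothetical and do not describe how Salesforce, Epic, or Snowflake actually work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextRecord:
    """One piece of private context guarded by a scope (hypothetical shape)."""
    record_id: str
    scope: str  # hypothetical scope label, e.g. "notes:read"
    content: str


class ContextStore:
    """Holds records and enforces scope checks on every read."""

    def __init__(self, records):
        self._records = {r.record_id: r for r in records}

    def fetch(self, record_id, granted_scopes):
        """Return the record's content, or raise if the agent lacks its scope."""
        record = self._records[record_id]
        if record.scope not in granted_scopes:
            raise PermissionError(f"agent lacks scope {record.scope!r}")
        return record.content
```

Whoever operates a store like this decides which agents see which context, which is why the article describes these stores as choke points.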

3. The Distribution Layer (Curation as Scarcity)

The movie Field of Dreams lied: its famous line, popularly remembered as "If you build it, they will come" (the film actually says "he will come"), does not apply to the web. In a world of infinite supply, curation becomes the scarcest resource.

Distribution monopolies like Google, YouTube, and the Apple App Store become more powerful as the noise increases. For the agentic economy, a new problem has emerged: Agent Discovery. We are seeing the rise of agent-native discovery mechanisms—essentially app stores for agents—where the goal is to help AI entities find "agent-friendly" businesses to transact with.

4. The Taste Layer (Design and Editorial Judgment)

"Taste" is an ambiguous term, but in 2026, it is a separate vertical of value. When production is free, what you choose to produce is the entire game.

Think of the music industry. After tools like Suno made AI music generation instant, the producers who thrived weren't the ones with the best equipment; they were the ones with the "ear" for what resonates with a human audience. Similarly, the "vibe coder" who ships an app in minutes hasn't done the hard part. The hard part is the design sensibility and the conviction about what should exist in the world. AI can assist, but it cannot replace the human accountability for the final "vibe."

5. The Liability Layer (The Governance Gatekeepers)

This is the least "fun" but perhaps most lucrative vertical. Someone must be on the hook when things go wrong.

- If an AI-generated medical app gives fatal advice, who is liable?

- If an AI-drafted contract contains a disastrous loophole, who pays?

Regulated industries like healthcare, finance, and law are built on the core notion of accountability. Professionals in these spaces don't just sell expertise; they sell a guarantee. As AI output becomes more plausible-sounding, its errors get harder to catch, and the potential for serious, high-stakes mistakes grows. The companies that position themselves as liability guarantors or "AI assurance" providers own the governance layer of the future web.

Strategic Implications for Founders

As we look toward the remainder of 2026, the map of the web is being redrawn.

- Model Providers (OpenAI, Anthropic) own the bedrock intelligence.

- Infrastructure Players (Vercel, Stripe) own the execution and trust.

- Context Owners (Notion, Salesforce) own the data.

- Humans own the taste, the judgment, and the accountability.

If you are currently building a product, you must ask yourself one question: What do I own that still matters if AI gets 10 times better?

If a smarter model makes your product obsolete, you are standing on quicksand. However, if a smarter model makes your product more valuable—because you provide the trust, context, or distribution that the model needs to be effective—then you have a durable business.

The bottleneck of the future isn't how fast you can build; it’s how well you can distribute, how deeply you understand your user's context, and how much trust you can command in a world of infinite noise.
