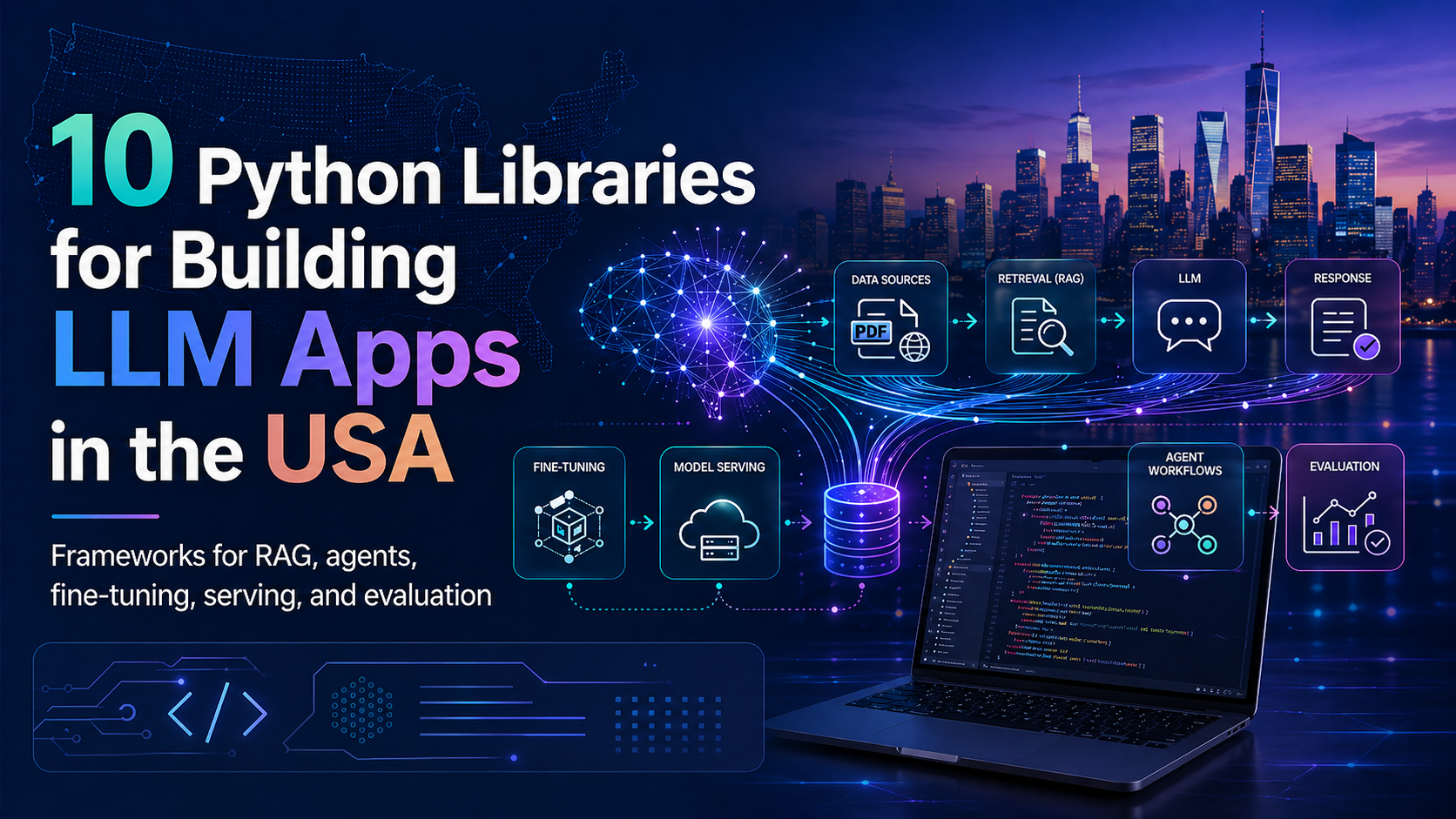

10 Python Libraries for Building Powerful LLM Applications in 2026

Large Language Models are no longer just experimental tools used by researchers or tech enthusiasts. Across the United States, companies, startups, software engineers, data teams, and independent developers are building real-world applications powered by LLMs. From customer support assistants and internal knowledge bases to AI agents, code helpers, workflow automation tools, and enterprise search platforms, LLM applications are becoming part of everyday software development.

However, building an LLM application is very different from simply using ChatGPT, Claude, or other consumer-facing AI tools. Those platforms are designed for end users who want quick answers or productivity assistance. Developers, on the other hand, need more control. They need to manage model loading, retrieval pipelines, vector databases, API serving, fine-tuning, monitoring, evaluation, and multi-agent workflows.

This is where Python becomes especially important. Python remains one of the most widely used programming languages for artificial intelligence, machine learning, and backend development. Its ecosystem gives developers access to powerful libraries that simplify the process of building, testing, deploying, and improving LLM-powered applications.

Whether you are a developer in Silicon Valley, a data scientist in New York, a startup founder in Austin, or a software engineer working remotely anywhere in the U.S., choosing the right Python libraries can save time, reduce infrastructure complexity, and help you build more reliable AI systems.

Below are 10 Python libraries and frameworks that are especially useful for building modern LLM applications.

1. Transformers

Transformers is one of the most important Python libraries in the open-source AI ecosystem. Developed by Hugging Face, it gives developers a consistent way to load, run, fine-tune, and deploy many types of language models.

For many teams, Transformers is the starting point when working with open-source LLMs. It supports a wide range of model families and allows developers to handle tokenization, text generation, classification, summarization, embeddings, and fine-tuning through a unified interface.
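As a rough sketch of that unified interface, the `pipeline` helper in Transformers wraps tokenization, generation, and decoding in a single call. The model name `distilgpt2` and the helper names `clean_prompt` and `generate` are assumptions chosen for illustration. Running `generate` requires `transformers` and a backend such as PyTorch, and the first call downloads model weights.

```python
def clean_prompt(prompt: str) -> str:
    """Collapse runs of whitespace so the tokenizer sees a tidy prompt."""
    return " ".join(prompt.split())


def generate(prompt: str, model_name: str = "distilgpt2", max_new_tokens: int = 30) -> str:
    """Generate a continuation with a Hugging Face text-generation pipeline.

    The import is deferred so this module loads even where transformers is absent.
    """
    from transformers import pipeline  # requires `pip install transformers torch`

    # "distilgpt2" is an assumed small model; swap in any causal LM you have access to.
    generator = pipeline("text-generation", model=model_name)
    outputs = generator(clean_prompt(prompt), max_new_tokens=max_new_tokens)
    # The pipeline returns a list of dicts, one per generated sequence.
    return outputs[0]["generated_text"]
```

Swapping `model_name` is usually all it takes to try a different model, which is what makes side-by-side comparisons cheap.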

One of the biggest advantages of Transformers is flexibility. Instead of building every model pipeline from scratch, developers can use prebuilt tools and adapt them to their own projects. This is especially valuable for U.S.-based AI startups and enterprise teams that need to test several models before deciding which one fits their product.

Transformers fits research prototypes, production applications, custom model experiments, and fine-tuning workflows. If you want to work directly with open-source models, it is a sensible library to learn first.

2. LangChain

LangChain is one of the best-known frameworks for building LLM applications that go beyond a single prompt and response. Real LLM apps often need to connect multiple components, such as prompts, tools, APIs, retrievers, databases, and memory systems. LangChain helps organize those pieces into structured workflows.

This makes it useful for building chatbots, retrieval-augmented generation systems, AI assistants, document analysis tools, and agent-based applications. Instead of manually wiring every step together, developers can use LangChain to create chains, connect tools, manage prompts, and structure complex logic.
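The idea of a chain can be sketched in plain Python without any framework: each step is a function, and the output of one step becomes the input of the next. This is not LangChain's API. The names `make_chain`, `format_prompt`, `fake_model`, and `parse_output` are hypothetical, and `fake_model` stands in for a real model call.

```python
from functools import reduce
from typing import Callable

Step = Callable[[object], object]


def make_chain(*steps: Step) -> Step:
    """Compose steps left to right: the output of one feeds the next."""
    return lambda value: reduce(lambda acc, step: step(acc), steps, value)


def format_prompt(question: str) -> str:
    """Prompt step: wrap the raw question in a fixed template."""
    return f"Answer in one sentence.\nQuestion: {question}\nAnswer:"


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; it echoes the question back."""
    question = prompt.split("Question: ")[1].split("\n")[0]
    return f"  You asked: {question}  "


def parse_output(text: str) -> str:
    """Parsing step: trim whitespace from the raw model text."""
    return text.strip()


chain = make_chain(format_prompt, fake_model, parse_output)
print(chain("What is RAG?"))
```

A framework adds value on top of this pattern by supplying ready-made steps for retrievers, tools, and memory, so the wiring does not have to be rewritten for every project.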

For businesses in the United States that want to build AI-powered customer service systems, internal productivity tools, or automated research assistants, LangChain can provide a strong foundation. It helps move an application from a simple demo to a more practical software product.

3. LlamaIndex

LlamaIndex is especially valuable for building applications that need to connect LLMs with private or external data. Many useful AI applications cannot rely only on what a model already knows. They need to search through company documents, PDFs, databases, spreadsheets, websites, or internal knowledge bases before generating an answer.

This is where retrieval-augmented generation, often called RAG, becomes important. LlamaIndex helps developers build RAG pipelines by indexing data, retrieving relevant information, and passing that context to the language model.
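The three RAG steps named above (index, retrieve, pass context to the model) can be illustrated with a small standard-library sketch. It is not LlamaIndex code: it assumes a bag-of-words similarity where a real pipeline would use embeddings and a vector store, and the documents and function names are made up for the example.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a real pipeline would use embeddings instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents: list[str]) -> list[tuple[str, Counter]]:
    """Indexing step: store each document next to its vector."""
    return [(doc, tokenize(doc)) for doc in documents]


def retrieve(index: list[tuple[str, Counter]], query: str, top_k: int = 1) -> list[str]:
    """Retrieval step: rank documents by similarity to the query."""
    q = tokenize(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


def build_prompt(context: list[str], question: str) -> str:
    """Augmentation step: place retrieved text ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Use only this context:\n{joined}\n\nQuestion: {question}"


docs = [
    "Refunds are processed within 14 days of the return request.",
    "Support is available by email on weekdays.",
    "Enterprise plans include single sign-on.",
]
index = build_index(docs)
question = "How long do refunds take?"
print(build_prompt(retrieve(index, question), question))
```

The printed prompt is what would be sent to the language model, so the answer is drawn from the retrieved document rather than from the model's memory alone.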

For American companies working with legal documents, healthcare records, financial reports, support tickets, technical documentation, or internal training material, LlamaIndex can be extremely useful. It helps make LLM responses more grounded, relevant, and connected to real business data.

4. vLLM

vLLM is a powerful library for serving open-source language models efficiently. Building an LLM application is not only about selecting a model. Developers also need to serve that model quickly, handle multiple requests, reduce latency, and use GPU memory efficiently.

vLLM is designed for high-throughput inference. It batches incoming requests continuously and manages the GPU memory used by the attention cache in fixed-size blocks, which lets one GPU serve more concurrent requests than a naive generation loop. This matters because many LLM applications fail to scale when they move from prototype to real users.
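For a sense of what this looks like in code, here is a hedged sketch of vLLM's offline batch interface. The model name `facebook/opt-125m`, the sampling values, and the helper names are assumptions for illustration. Running `generate_batch` requires `vllm` to be installed and typically a supported GPU.

```python
def build_prompts(questions: list[str]) -> list[str]:
    """Wrap each question in the same simple template so requests can be batched."""
    return [f"Question: {q}\nAnswer:" for q in questions]


def generate_batch(questions: list[str], model_name: str = "facebook/opt-125m") -> list[str]:
    """Run all prompts through vLLM's offline engine in one batched call."""
    from vllm import LLM, SamplingParams  # deferred: requires `pip install vllm`

    llm = LLM(model=model_name)  # assumed small model, chosen only for the example
    params = SamplingParams(temperature=0.2, max_tokens=64)
    outputs = llm.generate(build_prompts(questions), params)
    # Each result holds one or more completions; take the first for each prompt.
    return [out.outputs[0].text for out in outputs]
```

Passing the whole list to a single `generate` call, rather than looping over prompts, is what lets the engine schedule them together.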

For companies in the U.S. that want to reduce dependency on external APIs or run open-source models on their own infrastructure, vLLM is a strong option. It is especially useful for AI platforms, SaaS products, internal enterprise tools, and applications that need fast response times.

5. Unsloth

Unsloth has become popular because it makes fine-tuning more accessible. Fine-tuning allows developers to adapt a model to a specific task, writing style, industry, dataset, or business use case. However, traditional fine-tuning can be expensive and hardware-intensive.

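Unsloth focuses on efficient fine-tuning methods such as LoRA and QLoRA. LoRA freezes the base model and trains only small low-rank adapter matrices, while QLoRA applies the same idea on top of a base model quantized to 4 bits. Both reduce memory usage and training cost while still allowing developers to customize powerful models.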
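A little arithmetic shows where the savings come from. In LoRA, the original weight matrix stays frozen and only a low-rank update `B @ A` is trained, so the trainable weights drop from `d_out * d_in` to `rank * (d_out + d_in)`. The layer size and rank below are hypothetical values picked for the example, not figures from Unsloth.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights for a LoRA update B @ A, with B: d_out x r and A: r x d_in."""
    return rank * (d_out + d_in)


d_out = d_in = 4096  # hypothetical projection size
rank = 16            # hypothetical adapter rank

full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tuning: {full:,} trainable weights")
print(f"LoRA (r={rank}): {lora:,} trainable weights")
print(f"ratio: {lora / full:.4%}")
```

For this one layer the adapter trains 131,072 weights instead of 16,777,216, which is 1/128 of the original. Gradients and optimizer state shrink accordingly, which is why the technique fits on smaller GPUs.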

This is useful for smaller teams, independent developers, AI consultants, and startups that do not have massive GPU budgets. A U.S.-based startup building a legal assistant, healthcare chatbot, real estate automation tool, or finance-focused AI product could use Unsloth to adapt models more affordably.

The main value of Unsloth is that it lowers the barrier to model customization. Instead of needing enterprise-scale infrastructure, developers can fine-tune models with more limited resources.

6. CrewAI

CrewAI is a framework for building multi-agent AI systems. Instead of relying on one model to complete an entire task, CrewAI allows developers to create multiple agents with different roles, goals, and responsibilities.

This approach is useful when a task requires planning, research, writing, analysis, decision-making, or tool usage. For example, one agent might gather information, another might analyze it, and another might produce a final report.
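That gather, analyze, report handoff can be sketched in plain Python. This is not CrewAI's API: the `Agent` dataclass, the `run_crew` function, and the lambda bodies are hypothetical stand-ins, with each lambda taking the place of an LLM-backed step.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    role: str
    goal: str
    act: Callable[[str], str]  # hypothetical stand-in for an LLM-backed step


def run_crew(agents: list[Agent], task: str) -> list[tuple[str, str]]:
    """Run agents in order; each one receives the previous agent's output."""
    transcript = []
    current = task
    for agent in agents:
        current = agent.act(current)
        transcript.append((agent.role, current))
    return transcript


crew = [
    Agent("researcher", "gather facts", lambda t: f"notes on {t}"),
    Agent("analyst", "find the key point", lambda t: f"key finding from {t}"),
    Agent("writer", "produce the report", lambda t: f"REPORT: {t}"),
]

for role, output in run_crew(crew, "vector databases"):
    print(f"{role}: {output}")
```

Keeping a transcript of each role's output makes it easier to see which agent introduced a mistake when the final report looks wrong.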

CrewAI is especially interesting for automation workflows. Businesses can use agent-based systems for market research, lead generation, content planning, sales operations, internal reporting, and project management support.

As AI applications become more advanced, many of them will look less like simple chatbots and more like coordinated systems. CrewAI helps developers organize those systems in a cleaner and more manageable way.

7. AutoGPT

AutoGPT is one of the most recognized names in the AI agent space. It became popular because it introduced many developers to the idea of autonomous agents that can break goals into steps, plan actions, and execute tasks with less manual input.
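The plan-then-execute loop behind this idea can be shown in a few lines. The sketch below is not AutoGPT code. The `plan` function is a hypothetical planner that returns fixed steps where a real agent would ask a model, and the `TOOLS` entries are placeholder functions.

```python
def plan(goal: str) -> list[str]:
    """Hypothetical planner; a real agent would ask a model to produce these steps."""
    return [f"search: {goal}", f"summarize: {goal}", "finish"]


# Placeholder tools keyed by name; real tools would call search APIs, files, and so on.
TOOLS = {
    "search": lambda arg: f"3 sources found for '{arg}'",
    "summarize": lambda arg: f"summary of '{arg}'",
}


def run_agent(goal: str, max_steps: int = 10) -> list[str]:
    """Execute planned steps one by one until 'finish' or the step budget runs out."""
    log = []
    for step in plan(goal)[:max_steps]:
        if step == "finish":
            log.append("done")
            break
        tool_name, _, arg = step.partition(": ")
        log.append(TOOLS[tool_name](arg))
    return log


for line in run_agent("LLM pricing"):
    print(line)
```

The `max_steps` budget is a deliberate design choice: an autonomous loop needs a hard stop so that a bad plan cannot run indefinitely.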

While the AI agent ecosystem has evolved significantly, AutoGPT remains important because it helped shape how developers think about goal-driven AI systems. It is useful for experimenting with autonomous workflows, task planning, and multi-step execution.

For developers in the United States who are exploring AI automation, AutoGPT can be a useful learning tool and experimentation framework. It shows how agents can be structured to pursue objectives instead of simply responding to one prompt at a time.

8. LangGraph

LangGraph is designed for developers who need more control over complex LLM workflows. While simple chains may work for basic applications, advanced AI systems often require branching logic, memory, state management, loops, and conditional execution.

LangGraph helps developers build stateful workflows where each step can depend on previous results. This is especially useful for advanced agents, long-running tasks, decision systems, and applications that need predictable control flow.
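A stateful workflow of this kind is essentially a graph of nodes that read and update shared state, with edges that decide what runs next. The following plain-Python sketch shows a loop with a conditional edge. It does not use LangGraph's API, and the node names, the length check, and the attempt limit are assumptions made for the example.

```python
from typing import Callable

State = dict
Node = Callable[[State], State]


def draft(state: State) -> State:
    """Hypothetical generation step: each attempt adds one more detail."""
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "answer": "detail " * attempts}


def review(state: State) -> State:
    """Mark the draft as approved once it contains at least three words."""
    return {**state, "approved": len(state["answer"].split()) >= 3}


def route_after_review(state: State) -> str:
    """Conditional edge: loop back to draft until approved or out of attempts."""
    if state["approved"] or state["attempts"] >= 5:
        return "END"
    return "draft"


NODES: dict[str, Node] = {"draft": draft, "review": review}
EDGES: dict[str, Callable[[State], str]] = {
    "draft": lambda s: "review",
    "review": route_after_review,
}


def run_graph(start: str, state: State) -> State:
    """Walk the graph from `start`, applying each node until an edge returns END."""
    current = start
    while current != "END":
        state = NODES[current](state)
        current = EDGES[current](state)
    return state


final = run_graph("draft", {"attempts": 0, "answer": "", "approved": False})
print(final["attempts"], final["approved"])
```

Because every transition goes through an explicit edge function, the control flow can be inspected and tested without calling a model at all.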

For production teams, this structure can be very important. It makes complex workflows easier to understand, debug, and maintain. In enterprise environments where reliability matters, LangGraph can help bring more discipline to LLM application design.

9. DeepEval

DeepEval is a Python framework for evaluating LLM applications. Evaluation is one of the most important parts of building reliable AI systems. A model may produce fluent answers, but that does not always mean the answers are accurate, relevant, safe, or useful.

DeepEval helps developers test LLM outputs using metrics such as relevance, faithfulness, hallucination, and task success. This is especially important for RAG systems, customer-facing AI tools, and enterprise applications where incorrect answers can create real business risks.
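To make the idea of a metric concrete, here is a deliberately simple faithfulness score: the share of words in an answer that also appear in the retrieved context. It is a crude lexical proxy written for illustration, not DeepEval's implementation. Evaluation frameworks commonly use a model as the judge instead, and the 0.7 threshold is an arbitrary assumption.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def faithfulness(answer: str, context: str) -> float:
    """Share of answer words that also appear in the retrieved context (0.0 to 1.0)."""
    answer_words = words(answer)
    if not answer_words:
        return 0.0
    return len(answer_words & words(context)) / len(answer_words)


def passes(answer: str, context: str, threshold: float = 0.7) -> bool:
    """Threshold check in the style of a unit-test assertion."""
    return faithfulness(answer, context) >= threshold


context = "Refunds are processed within 14 days of the return request."
grounded = "Refunds are processed within 14 days."
invented = "Refunds arrive instantly by wire transfer."

print(faithfulness(grounded, context), passes(grounded, context))
print(faithfulness(invented, context), passes(invented, context))
```

The grounded answer scores 1.0 and passes, while the invented one shares only one word in six with the context and fails. Treating such checks like unit tests lets a team catch regressions whenever prompts, retrievers, or models change.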

In the U.S. market, where companies are increasingly concerned about AI quality, compliance, and trust, evaluation tools are becoming essential. DeepEval gives developers a more structured way to test and monitor their applications before and after deployment.

10. OpenAI Python SDK

The OpenAI Python SDK is one of the simplest ways to add advanced AI capabilities to a Python application. Instead of hosting and managing models yourself, you can connect to hosted OpenAI models through an API.
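A minimal sketch of such a call, using the SDK's Chat Completions interface, might look like this. The model name `gpt-4o-mini`, the system prompt, and the helper names are assumptions for illustration. Running `ask` requires the `openai` package and an API key in the `OPENAI_API_KEY` environment variable.

```python
def build_messages(system: str, user: str) -> list[dict]:
    """Assemble the chat message list expected by the Chat Completions endpoint."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def ask(user: str, model: str = "gpt-4o-mini") -> str:
    """Send one question to a hosted model and return the reply text."""
    from openai import OpenAI  # deferred: requires `pip install openai`

    client = OpenAI()  # reads the OPENAI_API_KEY environment variable
    response = client.chat.completions.create(
        model=model,  # assumed model name; use whichever model your account offers
        messages=build_messages("You are a concise assistant.", user),
    )
    return response.choices[0].message.content
```

Keeping message construction in its own function means the prompt logic can be tested without making a network call.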

This makes it easier to build chatbots, reasoning systems, content tools, coding assistants, image-aware applications, and multimodal AI products. For many developers and businesses, using an API is faster and more practical than managing model infrastructure.

The OpenAI Python SDK is especially useful when speed of development matters. Startups, agencies, enterprise teams, and solo developers can use it to prototype quickly, launch features faster, and focus on the product experience rather than the infrastructure behind the model.

Final Thoughts

Building LLM applications requires more than prompt engineering. Depending on the product, a reliable AI application may need model access, data retrieval, serving infrastructure, workflow orchestration, fine-tuning, agent design, and evaluation. The Python ecosystem provides libraries that help developers manage each part of this process.

Transformers is ideal for working directly with open-source models. LangChain and LlamaIndex help structure applications and connect them to external data. vLLM makes model serving more efficient. Unsloth helps with affordable fine-tuning. CrewAI, AutoGPT, and LangGraph support agent-based workflows. DeepEval brings structure to testing and evaluation. The OpenAI Python SDK makes it easy to build with hosted AI models.

For developers and businesses in the United States, these libraries can help turn LLM ideas into real products. Whether the goal is to build an internal assistant, a RAG knowledge base, a customer support chatbot, a research automation tool, or a full AI-powered SaaS platform, the right Python libraries can make development faster, cleaner, and more scalable.
